Sub-4-bit LLM quantization compresses large language model weights to fewer than 4 bits per parameter, reducing memory requirements enough to enable deployment on laptops, phones, and browsers. However, aggressive compression introduces reconstruction error, calibration complexity, and accuracy tradeoffs. To avoid significant accuracy loss, sub-4-bit compression requires non-uniform bit allocation: assigning more bits to high-importance weights and fewer to less critical ones. This guide examines the major sub-4-bit techniques, their architectural implications, and how to evaluate them for enterprise deployment.

- Why LLM Quantization?

- LLM Quantization Challenge

- Why Traditional Quantization Methods Fail at Sub-4-bit

- Advanced Quantization Approaches

- LLM Inference Challenge for X-bit Quantization

- The Path Forward

- LLM Quantization Method Comparison: Suitcase Analogy

Why LLM Quantization?

The significant memory and computational demands of large language models (LLMs) create barriers to deployment. LLM quantization addresses this by compressing model weights from 32-bit or 16-bit floating-point numbers to lower-precision formats, making on-device LLM inference possible.

Sub-4-bit quantization matters for three primary reasons:

- GPU Memory Reduction – Lower bit widths reduce model size by 4x–16x compared to FP16.

- Inference Efficiency – Smaller weights reduce memory bandwidth pressure and can improve latency on memory-bound workloads.

- Deployment Flexibility – Large models become deployable on smaller GPUs or edge hardware.
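The size reduction can be checked with back-of-the-envelope arithmetic. The sketch below is only an estimate: it assumes a 7-billion-parameter model and counts weight storage alone, ignoring scale factors, metadata, activations, and the KV cache. The function name `weight_memory_gib` is made up for this illustration.

```python
def weight_memory_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GiB (weights only, no scales or metadata)."""
    return num_params * bits_per_weight / 8 / 2**30


# Assumed parameter count for a "7B" model; real models differ slightly.
params = 7e9

for bits in (16, 8, 4, 3, 2, 1):
    size = weight_memory_gib(params, bits)
    ratio = 16 / bits  # reduction relative to FP16
    print(f"{bits:>2}-bit: {size:6.2f} GiB ({ratio:.1f}x smaller than FP16)")
```

In practice, quantized files are somewhat larger than this estimate because per-block scale factors and other metadata are stored alongside the weights.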

However, these gains come at a cost if not implemented correctly.

LLM Quantization Challenge

Quantization works by mapping weights from a high-precision format to a smaller set of representable values. For LLMs, this means converting 32-bit or 16-bit floating-point weights into compact integer representations — fewer bits means less memory and faster computation through SIMD (Single Instruction Multiple Data) instructions that process multiple operations per clock cycle. Compressing the full dynamic range of floating-point weights into a small set of integer values inevitably introduces information loss.

To put things in perspective, an 8-bit integer can represent only 256 (2^8) distinct values, compared to roughly 4.3 billion (2^32) bit patterns in a 32-bit float. Information loss grows significantly at and below 4 bits, where only 16 (2^4) or fewer distinct values remain.
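To make the information loss concrete, here is a minimal sketch of plain uniform (min-max) quantization applied to synthetic weights. The Gaussian weights and the helper names are assumptions for illustration, not taken from any particular library. It shows how the reconstruction error grows as the number of representable values shrinks.

```python
import random


def quantize_dequantize(weights, bits):
    """Map weights onto 2**bits evenly spaced levels, then map them back."""
    levels = 2**bits - 1
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((w - lo) / scale) for w in weights]  # integers in [0, levels]
    return [lo + c * scale for c in codes]


def mean_squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


random.seed(0)
# Synthetic stand-in for a weight tensor; real weight distributions differ.
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]

for bits in (8, 4, 3, 2):
    restored = quantize_dequantize(weights, bits)
    error = mean_squared_error(weights, restored)
    print(f"{bits}-bit: {2**bits:>3} levels, MSE = {error:.3e}")
```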

Why Traditional Quantization Methods Fail at Sub-4-bit

The fundamental issue lies in how neural networks distribute importance across their weights. Not all weights contribute equally to model outputs — a small subset of high-magnitude weights, often called "salient" weights, have disproportionate influence on model performance. With only 16 representable values at 4-bit precision and just 8 at 3-bit, even minor quantization errors in these critical parameters compound across layers, resulting in significant accuracy degradation.

Uniform bit allocation, assigning identical bit depth to every weight regardless of importance, cannot solve this problem. Standard methods treat a high-magnitude salient weight the same as an insignificant one, wasting the limited bit budget on parameters that don't need precision while underserving those that do. The lower the target bit rate, the more severe this misallocation becomes.
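A toy experiment illustrates the problem. In this sketch, a single large-magnitude weight (a hypothetical "salient" value of 0.5) is added to a group of small synthetic weights. Under uniform absmax quantization, the outlier stretches the scale, and the remaining weights lose most of their resolution. The data and function names are assumptions chosen for illustration.

```python
import random


def absmax_quantize(weights, bits):
    """Symmetric uniform quantization with one shared scale for all weights."""
    qmax = 2 ** (bits - 1) - 1
    scale = max(abs(w) for w in weights) / qmax
    return [max(-qmax, min(qmax, round(w / scale))) * scale for w in weights]


random.seed(0)
ordinary = [random.gauss(0.0, 0.02) for _ in range(255)]
with_outlier = ordinary + [0.5]  # one hypothetical salient weight


def mse_on_ordinary(weights, bits):
    """Error measured only on the 255 ordinary weights (zip stops at 255)."""
    restored = absmax_quantize(weights, bits)
    return sum((w - r) ** 2 for w, r in zip(ordinary, restored)) / len(ordinary)


for bits in (4, 3):
    clean = mse_on_ordinary(ordinary, bits)
    stretched = mse_on_ordinary(with_outlier, bits)
    print(f"{bits}-bit: MSE without outlier {clean:.2e}, with outlier {stretched:.2e}")
```

With so few levels, almost all ordinary weights collapse to zero once the outlier sets the scale, which is the misallocation described above.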

Traditional techniques like GPTQ attempt to address this by minimizing quantization error layer by layer, quantizing weights sequentially, and adjusting remaining parameters to compensate for induced errors. While this layerwise approach improves on naive uniform quantization, it still assumes a fixed bit depth per layer and relies on calibration data to estimate input feature statistics. This rigid constraint leaves no room to prioritize salient weights, and calibration data cannot fully capture the outlier distributions that matter most.

Since GPTQ's introduction in 2022, quantization methods have evolved significantly. Yet as of February 2026, GPTQ remains the most popular and most cited quantization paper.

The GGUF (GGML Universal File) format, the successor to the GGML format, packages weights and metadata into a single binary with built-in support for quantization levels from Q2 to Q8. Unlike GPTQ's layer-by-layer approach, GGUF uses block-wise quantization, splitting weights into blocks with individual scale factors to handle outliers.
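The block-wise idea can be sketched in a few lines. This is a simplified illustration, not the actual GGUF quantization formats, which are more elaborate. The block size of 32, the absmax scaling, and the function names are assumptions. Because each block carries its own scale, an outlier only degrades the block it lives in.

```python
import random


def quantize_blockwise(weights, bits, block_size=32):
    """Split weights into blocks; store one scale plus integer codes per block."""
    qmax = 2 ** (bits - 1) - 1
    blocks = []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale = max(abs(w) for w in block) / qmax or 1.0  # avoid a zero scale
        codes = [round(w / scale) for w in block]
        blocks.append((scale, codes))
    return blocks


def dequantize_blockwise(blocks):
    return [code * scale for scale, codes in blocks for code in codes]


def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(256)]
weights[0] = 0.5  # hypothetical outlier in the first block

# One scale for the whole tensor versus one scale per 32-weight block.
per_tensor = dequantize_blockwise(quantize_blockwise(weights, 4, block_size=len(weights)))
per_block = dequantize_blockwise(quantize_blockwise(weights, 4, block_size=32))
print(f"per-tensor MSE: {mse(weights, per_tensor):.2e}")
print(f"per-block  MSE: {mse(weights, per_block):.2e}")
```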

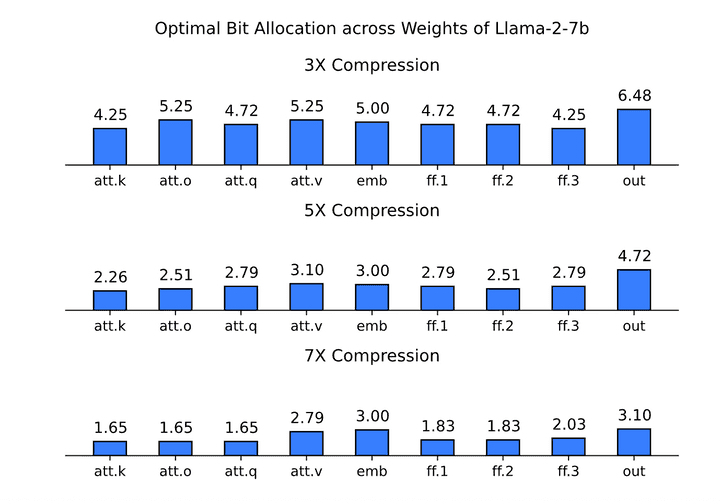

While GPTQ and GGUF are widely adopted, their fixed allocation assumption creates an artificial constraint at sub-4-bit precision. Not all weights contribute equally to model performance, yet treating them identically wastes the limited bit budget available at extreme compression ratios. When compressing Llama-2-7B to sub-4-bit precision, empirical analysis shows that optimal bit distribution varies dramatically across model components, ranging from 1.65 to 6.48 bits depending on the component, and changes non-linearly with the target compression ratio. In other words, 7X compression is not simply a proportional scaling of 3X or 5X.

Advanced Quantization Approaches

Recent methods take different approaches: some reorganize how data is distributed before quantization (rotation-based), while others optimize how bits are allocated across weights (X-bit allocation).

SpinQuant, built on QuaRot, uses rotation-based quantization to eliminate outliers. QuaRot applies Hadamard matrix rotations before quantization to remove problematic outlier values that typically cause quantization errors. SpinQuant improves on this by using learned rotations rather than fixed ones.
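To see why a Hadamard rotation helps, consider a vector with one large outlier. The sketch below applies a normalized fast Walsh-Hadamard transform, which spreads the outlier's energy across all coordinates while preserving the vector's norm. It only illustrates the outlier-spreading effect on a single synthetic vector. It is not the QuaRot or SpinQuant pipeline, and the function name is an assumption.

```python
import math
import random


def hadamard_rotate(x):
    """Normalized fast Walsh-Hadamard transform; len(x) must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    y = list(x)
    h = 1
    while h < n:
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = y[j], y[j + h]
                y[j], y[j + h] = a + b, a - b  # butterfly step
        h *= 2
    norm = math.sqrt(n)  # makes the transform orthogonal
    return [v / norm for v in y]


random.seed(0)
x = [random.gauss(0.0, 0.01) for _ in range(64)]
x[5] = 1.0  # hypothetical outlier

rotated = hadamard_rotate(x)
print(f"max |x| before rotation: {max(abs(v) for v in x):.3f}")
print(f"max |x| after rotation:  {max(abs(v) for v in rotated):.3f}")
print(f"norm before: {math.sqrt(sum(v * v for v in x)):.6f}")
print(f"norm after:  {math.sqrt(sum(v * v for v in rotated)):.6f}")
```

After rotation the values occupy a much narrower range, so a uniform quantizer wastes fewer levels on empty space. Because the normalized transform is its own inverse, applying it a second time recovers the original vector.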

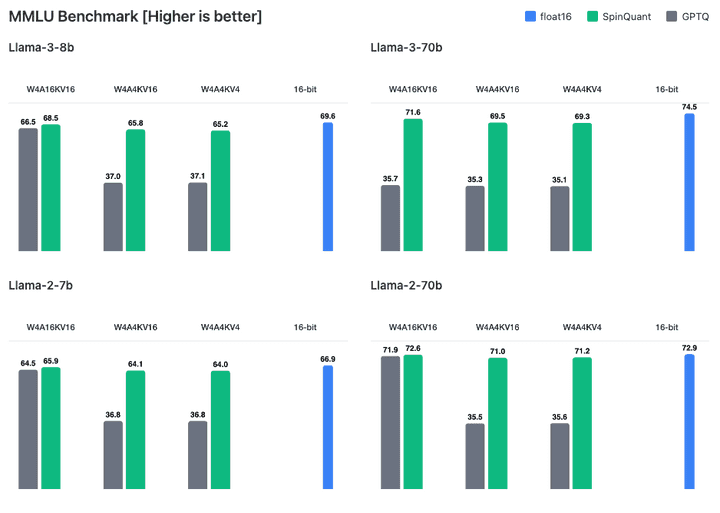

At W4A4KV4 quantization, SpinQuant stays within 4.4 points of full-precision Llama-3-8B on the MMLU benchmark (65.2 vs. 69.6), while GPTQ at the same precision drops Llama-3-8B to 37.1, losing almost half of its "intelligence".

Float16 shows the original performance of models before any quantization. W4A16KV16 quantizes only the model weights to 4-bit while keeping activations and KV cache at 16-bit, representing traditional 4-bit quantization that most methods can handle. W4A4KV16 additionally quantizes activations to 4-bit. W4A4KV4 goes further by also quantizing the KV cache to 4-bit, achieving full end-to-end 4-bit quantization with maximum memory savings.
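The naming scheme is regular enough to parse mechanically. The helper below is a hypothetical convenience function, not part of any quantization toolkit. It splits a tag such as W4A4KV4 into the bit widths for weights, activations, and KV cache.

```python
import re


def parse_precision(tag):
    """Split a tag like 'W4A16KV16' into bit widths per tensor type."""
    match = re.fullmatch(r"W(\d+)A(\d+)KV(\d+)", tag)
    if match is None:
        raise ValueError(f"unrecognized precision tag: {tag!r}")
    weights, activations, kv_cache = (int(g) for g in match.groups())
    return {"weights": weights, "activations": activations, "kv_cache": kv_cache}


for tag in ("W4A16KV16", "W4A4KV16", "W4A4KV4"):
    print(tag, parse_precision(tag))
```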

Meta has publicly released SpinQuant-quantized Llama 3.2 models and integrated them into production systems like Meta's ExecuTorch and the LLMC compression toolkit.

Picovoice picoLLM goes further by learning optimal bit distribution rather than using predetermined rules. The algorithm learns the optimal bit allocation for a target model size both across model components and within individual weight matrices, minimizing accuracy loss across all layers simultaneously.
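The general idea of allocating bits under a size budget can be illustrated with a toy greedy allocator. This is not picoLLM's algorithm, which is not described in detail here. It is a deliberately simple stand-in that works only at the component level and assumes a made-up error model in which each extra bit cuts a component's error by a factor of four. The component names, sizes, and sensitivities are hypothetical.

```python
def allocate_bits(components, target_avg_bits, min_bits=1, max_bits=8):
    """Greedy allocation. components maps name -> (num_weights, sensitivity)."""
    bits = {name: min_bits for name in components}
    total_weights = sum(n for n, _ in components.values())
    # Bits still available after giving every weight the minimum depth.
    budget = target_avg_bits * total_weights - min_bits * total_weights

    def gain_per_bit_spent(name):
        _, sensitivity = components[name]
        b = bits[name]
        # Assumed error model: sensitivity * num_weights * 4**(-b).
        # Raising b by one costs num_weights bits, so num_weights cancels out.
        return sensitivity * (4.0 ** -b - 4.0 ** -(b + 1))

    while True:
        affordable = [
            name for name, (n, _) in components.items()
            if bits[name] < max_bits and n <= budget
        ]
        if not affordable:
            break
        best = max(affordable, key=gain_per_bit_spent)
        bits[best] += 1
        budget -= components[best][0]
    return bits


# Hypothetical components: (number of weights, relative sensitivity).
components = {
    "attention": (1000, 10.0),
    "mlp": (3000, 1.0),
    "embedding": (500, 0.1),
}
allocation = allocate_bits(components, target_avg_bits=3.0)
for name, b in allocation.items():
    print(f"{name}: {b} bits")
```

Even this crude version gives sensitive components more bits than insensitive ones while respecting the overall size target. A learned allocation, as described above, goes further by also varying precision within individual weight matrices.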

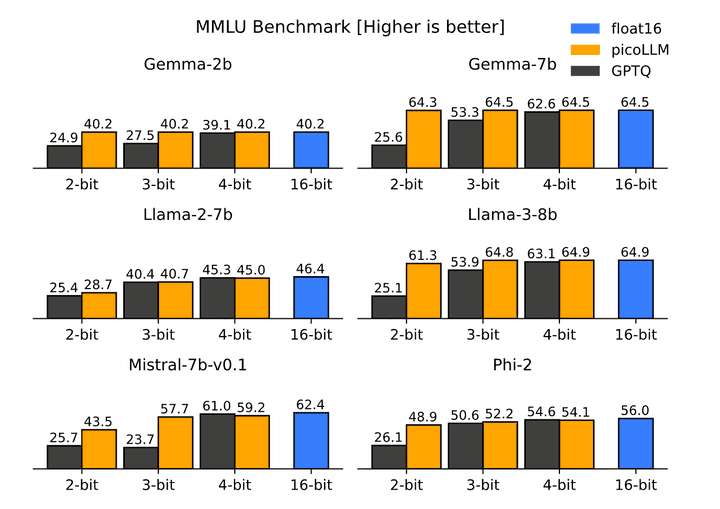

On the MMLU benchmark, GPTQ-quantized models lose more than half of their intelligence at 2-bit and 3-bit precision across all tested models. In contrast, picoLLM-quantized Gemma-2b maintains near-float16 accuracy even at 2-bit, and at 3-bit and 4-bit it performs as intelligently as the 16-bit Gemma-2b.

If you’re interested in deep learning, learn how picoLLM Compression deeply quantizes LLMs while minimizing loss by optimally allocating bits across and within weights.

LLM Inference Challenge for X-bit Quantization

Shrinking models while preserving their intelligence is only half the challenge. Existing LLM inference engines expect uniform quantization with a fixed bit depth, such as 4-bit or 8-bit. X-bit quantization breaks this assumption by assigning variable bit rates across weights, so a model might average 2.56 bits rather than conform to a standard depth such as 2 bits. This makes X-bit quantized models incompatible with existing inference frameworks and requires a brand new inference engine.
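A fractional average arises naturally once different groups of weights use different depths. The numbers below are invented purely to reproduce a 2.56-bit average. They do not describe any real model.

```python
def average_bits(groups):
    """groups maps a label to (num_weights, bits_per_weight)."""
    total_bits = sum(n * b for n, b in groups.values())
    total_weights = sum(n for n, _ in groups.values())
    return total_bits / total_weights


# Hypothetical mix: half the weights at 2 bits, most of the rest at 3 bits.
groups = {
    "group_a": (50, 2),
    "group_b": (44, 3),
    "group_c": (6, 4),
}
print(f"average bits per weight: {average_bits(groups):.2f}")
```

No single fixed-depth kernel can process such a model, because the engine has to handle 2-bit, 3-bit, and 4-bit regions within the same set of weights.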

Building a new inference engine for X-bit quantized models requires implementing SIMD operations for every bit depth from 1 to 8 bits across multiple instruction set architectures. For x86 alone, supporting five SIMD variants with eight bit depths requires 80+ specialized functions. Cross-platform deployment multiplies this complexity further: supporting CPU and GPU across Linux, macOS, Windows, mobile, and web browsers requires separate implementations for CUDA, Metal, WebGPU, and custom threading frameworks, with JavaScript's lack of native threading requiring parallel execution via Web Workers. Runtime detection then selects the appropriate code path based on available hardware.
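Part of the reason every bit depth needs dedicated code is that most depths do not align with byte boundaries. The pure-Python sketch below is far from a SIMD kernel, and its function names are made up for illustration. It packs and unpacks integer codes at an arbitrary depth from 1 to 8 bits. At depths such as 3, 5, 6, or 7, individual codes straddle two bytes, so each depth implies its own shift-and-mask pattern in optimized code.

```python
def pack_bits(codes, bits):
    """Pack unsigned codes (< 2**bits) into bytes, least significant bits first."""
    buffer, filled, out = 0, 0, bytearray()
    for code in codes:
        if not 0 <= code < (1 << bits):
            raise ValueError(f"code {code} does not fit in {bits} bits")
        buffer |= code << filled
        filled += bits
        while filled >= 8:  # flush every complete byte
            out.append(buffer & 0xFF)
            buffer >>= 8
            filled -= 8
    if filled:  # flush the final partial byte
        out.append(buffer & 0xFF)
    return bytes(out)


def unpack_bits(data, bits, count):
    """Inverse of pack_bits; count is the number of codes to read back."""
    buffer, filled, codes = 0, 0, []
    stream = iter(data)
    mask = (1 << bits) - 1
    while len(codes) < count:
        while filled < bits:  # pull in another byte when needed
            buffer |= next(stream) << filled
            filled += 8
        codes.append(buffer & mask)
        buffer >>= bits
        filled -= bits
    return codes


codes = [5, 3, 7, 0, 1, 6, 2, 4]  # eight 3-bit values
packed = pack_bits(codes, 3)
print(f"{len(codes)} codes at 3 bits -> {len(packed)} bytes")
print(unpack_bits(packed, 3, len(codes)) == codes)
```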

The Path Forward

Sub-4-bit quantization represents significant progress toward democratizing LLM inference on edge devices. X-bit allocation methods prove that aggressive compression is achievable when bits are distributed optimally rather than uniformly. However, the increasing demand for quantized LLMs raises practical questions for enterprises considering on-device deployment:

- Cloud vs. On-Device: While Apple and Google are moving toward on-device, enterprises must decide if and when they will make a similar move.

- Model Choice: DeepSeek, Llama, Qwen, Phi... As of February 2026, there are over 325,000 text generation models on Hugging Face. While this diversity excites researchers and hobbyists, it often overwhelms enterprises that need clear, reliable paths to production-ready on-device LLMs.

- Quantization Method: GGUF’s strong community, SpinQuant’s backing by Meta, and picoLLM’s cross-platform efficiency… Currently, over 125,000 models on Hugging Face use GGUF alone.

Ultimately, the best choice is the one that best serves end-users, and the most expensive resource is time. As quantization algorithms and inference engines continue to evolve, enterprises aiming to be first movers should evaluate quantization methods, inference engine compatibility, and cross-platform support before committing to a production stack.

LLM Quantization Method Comparison: Suitcase Analogy

Imagine you're packing a suitcase with compression bags.

GPTQ: You pack items one at a time, compressing and placing each one while adjusting the remaining items to compensate for any space issues. You're sequential and careful, but you use the same compression strategy for everything: socks, sweaters, silk shirts, and satin dresses get treated identically.

GGUF: You organize items into pouches (blocks): winter coats in one pouch, t-shirts in another, socks in a third. You then apply a different level of compression to each pouch.

EXL2: Now you can compress different items at different levels, even within the same pouch. You start with a target compression level (5 units). After test-packing a few sample suitcases (calibration), you learn which categories are sensitive and assign what each needs: suits need 7 units, shirts need 5 units, and socks need 2 units, even if they're in the same pouch, so that the suitcase as a whole hits the target compression level. EXL3 is the next generation: faster and smarter. You use an improved approach (QTIP) and don't need test-packing, so you pack the suitcase in one efficient pass.

Although EXL2 and EXL3 are included here for completeness, they're not covered in the main article, as the developers are still working on EXL3 optimization.

QuaRot: You tackle oddly-shaped items, such as a large umbrella, before packing. You place it diagonally at the bottom using fixed 45-degree rotations (Hadamard matrices) to make awkward shapes fit better.

SpinQuant: Same rotating idea as QuaRot, but instead of using fixed angles, you learn the optimal angle for each item. Maybe the umbrella fits best at 37 degrees, and the tripod at 52 degrees. More sophisticated than QuaRot's one-size-fits-all rotation.

picoLLM: You're given the target space (volume of the suitcase) instead of a target compression value. You calculate the exact optimal space allocation for each individual item. Unlike category-based methods, you can assign identical items different values depending on their importance. For example, your child's favorite teddy bear, gifted by your late grandma, gets 6 cubic inches, while the store-bought backup gets only 1/4 of that (1.5 cubic inches). These ratios adapt to your constraints. If you're packing a backpack instead, the store-bought one may get only 1/5 of the gifted one (1.2 cubic inches).