Voice AI Agents are AI agents that use voice AI to hold natural, human-like interactions. They process and understand spoken language, answer inquiries, hold conversations, make recommendations, and execute tasks.

Voice AI Agents offer seamless, interactive, and dynamic experiences by combining several AI technologies:

1. Wake Word Detection

Wake Word Detection is used for voice activation. The technology is also called Keyword Spotting because it listens for a specific keyword and triggers an action when it hears it. In this case, the action is to start listening for voice prompts.

Imagine building an application similar to ChatGPT. One way to activate the application is to click on the app or a microphone icon. The other is to say its name, i.e., the wake word. Once the system hears the wake word, it activates the voice AI agent, signaling that it's ready to listen and respond.
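The activation flow described above can be sketched as a two-state loop. This is a minimal illustration, not a real keyword-spotting engine: `run_agent` and `detect_wake_word` are hypothetical names, strings stand in for audio frames, and "hey agent" is an assumed wake word.

```python
from typing import Callable, Iterable, List

def run_agent(frames: Iterable[str],
              detect_wake_word: Callable[[str], bool]) -> List[str]:
    """Ignore input until the wake word is detected, then collect what follows.

    `frames` stands in for fixed-size audio frames; `detect_wake_word` is a
    hypothetical placeholder for a real keyword-spotting engine.
    """
    listening = False
    heard: List[str] = []
    for frame in frames:
        if not listening:
            # Idle state: the only job is to look for the keyword.
            listening = detect_wake_word(frame)
        else:
            # Active state: frames are passed on to the rest of the pipeline.
            heard.append(frame)
    return heard

# Toy input: strings instead of audio, with "hey agent" as the assumed wake word.
stream = ["music", "chatter", "hey agent", "what", "is", "the", "weather"]
captured = run_agent(stream, lambda frame: frame == "hey agent")
print(captured)  # ['what', 'is', 'the', 'weather']
```

Everything before the wake word is discarded, which is what lets an always-listening device stay idle until it is addressed.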

Deciding on a wake word? Don't forget to check our guide on choosing a wake word.

2. Voice Activity Detection

Voice Activity Detection detects the presence of voice input, ensuring that the AI agent listens when humans speak.

Imagine building a customer service AI agent. Voice Activity Detection allows the agent to identify when a customer starts and stops speaking, so it knows when to listen and when the customer's turn is over.
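Finding where speech begins and ends can be illustrated with a simple energy threshold. This sketch is an assumption chosen for clarity, not how any particular product works: production VAD engines typically rely on trained models, and the names `frame_energy`, `find_speech_segment`, `threshold`, and `hangover` are hypothetical.

```python
from typing import List, Optional, Tuple

def frame_energy(frame: List[int]) -> float:
    """Mean squared amplitude of one non-empty frame of PCM samples."""
    return sum(s * s for s in frame) / len(frame)

def find_speech_segment(frames: List[List[int]],
                        threshold: float,
                        hangover: int = 2) -> Optional[Tuple[int, int]]:
    """Return (start, end) frame indices of the first speech segment, or None.

    Speech starts at the first frame whose energy exceeds `threshold` and ends
    once `hangover` consecutive frames fall back below it. `end` is exclusive.
    """
    start: Optional[int] = None
    quiet = 0  # consecutive low-energy frames seen since the last voiced frame
    for i, frame in enumerate(frames):
        voiced = frame_energy(frame) > threshold
        if start is None:
            if voiced:
                start = i
        elif voiced:
            quiet = 0
        else:
            quiet += 1
            if quiet == hangover:
                return start, i - hangover + 1
    if start is None:
        return None
    # Audio ended before the hangover elapsed: trim the trailing quiet frames.
    return start, len(frames) - quiet

silence = [0, 0, 0, 0]
speech = [1000, -1000, 1000, -1000]
frames = [silence, silence, speech, speech, speech, silence, silence, silence]
segment = find_speech_segment(frames, threshold=1000.0)
print(segment)  # (2, 5)
```

The `hangover` count keeps a short pause inside a sentence from being mistaken for the end of the customer's turn.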

See Picovoice's lightweight, accurate Cobra Voice Activity Detection in action and test how VAD works.

3. Streaming Speech-to-Text

Streaming Speech-to-Text converts spoken language into written text in real time, while the speaker is still talking. Traditional methods require the speech to be recorded in full or in batches before processing. Streaming speech-to-text instead transcribes continuously, so text is available immediately.

Once a Voice AI Agent is activated, it begins transcribing human speech using streaming speech-to-text. As you speak, the system continuously converts your spoken words (audio) into written text.
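The difference between batch and streaming transcription can be sketched with a toy recognizer. Everything here is a stand-in: `fake_recognize` is a hypothetical placeholder for an acoustic model, and each "frame" is already a word rather than audio.

```python
from typing import Iterable, Iterator, List

def fake_recognize(frame: str) -> str:
    """Hypothetical stand-in for a speech model: each 'frame' is already a word."""
    return frame

def batch_transcribe(frames: Iterable[str]) -> str:
    """Batch mode: nothing is returned until all audio has been received."""
    return " ".join(fake_recognize(f) for f in list(frames))

def streaming_transcribe(frames: Iterable[str]) -> Iterator[str]:
    """Streaming mode: yield the growing transcript after every frame."""
    words: List[str] = []
    for frame in frames:
        words.append(fake_recognize(frame))
        yield " ".join(words)

audio = ["turn", "on", "the", "lights"]
partials = list(streaming_transcribe(audio))
for partial in partials:
    print(partial)
```

Both modes end with the same final transcript, but the streaming version exposes partial text after the first frame, which is what lets later stages start working before the user finishes speaking.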

Looking for on-device speech-to-text to minimize the latency of voice AI agents? Learn why Cheetah fits better than Whisper Speech-to-Text.

4. Large Language Model

A Large Language Model (LLM) is a type of artificial intelligence (AI) that is trained on vast amounts of text data to understand, generate, and respond to human language in a coherent and contextually relevant way.

After speech-to-text technology converts spoken words into text, the LLM helps the Voice AI Agent understand the meaning behind the text. The LLM interprets what the customer is trying to do, such as report an insurance claim; identifies key details, such as dates; taps into other knowledge bases to answer a wide range of questions, such as current stock prices; and generates responses that sound natural and conversational.

In short, LLMs allow Voice AI Agents to understand user input and generate natural, relevant, and context-aware responses. While LLMs are crucial for enabling AI agents, their large size can make them expensive to run and introduce reliability issues due to cloud dependency. Reducing the runtime and storage requirements of LLMs, without compromising accuracy, makes them viable for everyday devices and accelerates the deployment of Voice AI Agents.
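To make the storage concern concrete, here is a back-of-the-envelope estimate of weight storage at different precisions. The 7-billion-parameter model and the uniform bit widths are illustrative assumptions, and the estimate ignores activations and other runtime memory. It does not describe any specific compression method.

```python
def weights_size_gb(num_parameters: float, bits_per_weight: int) -> float:
    """Approximate storage for model weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bits_per_weight / 8 / 1e9

params = 7e9  # illustrative 7-billion-parameter model (assumption)
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weights_size_gb(params, bits):.1f} GB")
```

Under these assumptions the weights take about 14.0 GB at 16 bits, 7.0 GB at 8 bits, and 3.5 GB at 4 bits, which shows why lower-precision weights matter for everyday devices. Whether accuracy holds at a given bit width depends on the model and the compression method.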

picoLLM Compression leverages AI to find the optimal compression strategy to minimize the compute requirements of any LLM while maintaining accuracy.

5. Streaming Text-to-Speech

Text-to-Speech (TTS) converts written text into spoken language. It enables software to audibly respond to humans in a natural-sounding voice, eliminating the need for visual feedback, i.e., text. Text-to-Speech reads the response generated by LLMs to customers as the last step of natural, speech-to-speech interactions between machines and humans.

Traditional Text-to-Speech technologies wait for the complete text before they start processing. Streaming Text-to-Speech instead reads the text output of LLMs as it is generated, much as a human would, which minimizes the latency of Voice AI Agents.
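One way to picture the latency gain is to group streamed LLM tokens into short, speakable chunks as they arrive. Chunking on punctuation is an assumption made for illustration; real streaming engines may buffer differently. `speakable_chunks` and `boundaries` are hypothetical names.

```python
from typing import Iterable, Iterator

def speakable_chunks(tokens: Iterable[str],
                     boundaries: str = ".,!?") -> Iterator[str]:
    """Group streamed LLM tokens into chunks that can be synthesized early.

    A chunk is emitted as soon as the buffered text ends with a boundary
    character, so speech can start before the full response exists.
    """
    buffer = ""
    for token in tokens:
        buffer += token
        stripped = buffer.rstrip()
        if stripped and stripped[-1] in boundaries:
            yield buffer.strip()
            buffer = ""
    if buffer.strip():
        yield buffer.strip()  # flush whatever is left when the stream ends

llm_tokens = ["Sure", ",", " your", " claim", " was", " filed", ".",
              " Anything", " else", "?"]
chunks = list(speakable_chunks(llm_tokens))
print(chunks)  # ['Sure,', 'your claim was filed.', 'Anything else?']
```

In this sketch the first chunk is ready after two tokens, whereas a non-streaming approach would wait for all ten before producing any audio.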

Orca Streaming Text-to-Speech enables developers to build low-latency, LLM-powered Voice AI Agents thanks to its on-device and streaming capabilities, as shown in the Open-source Text-to-Speech Latency Benchmark.

Building a Voice AI Agent

Picovoice's modular engines allow developers to build Voice AI Agents that best fit their use case. Enterprises can choose to use one or more Picovoice engines when building Voice AI Agents.

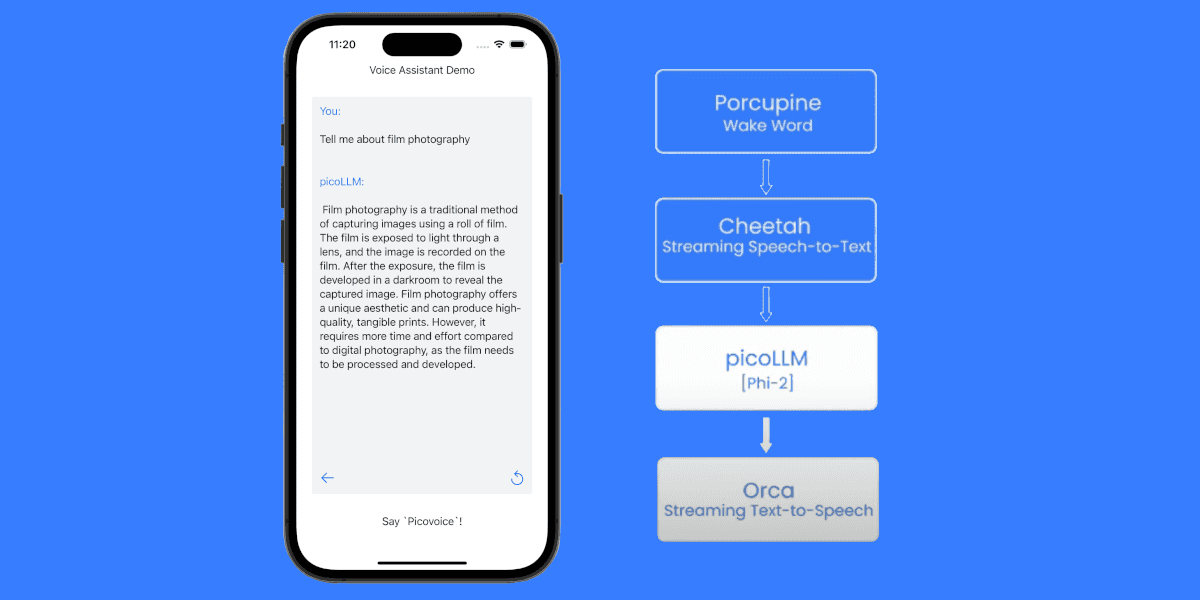

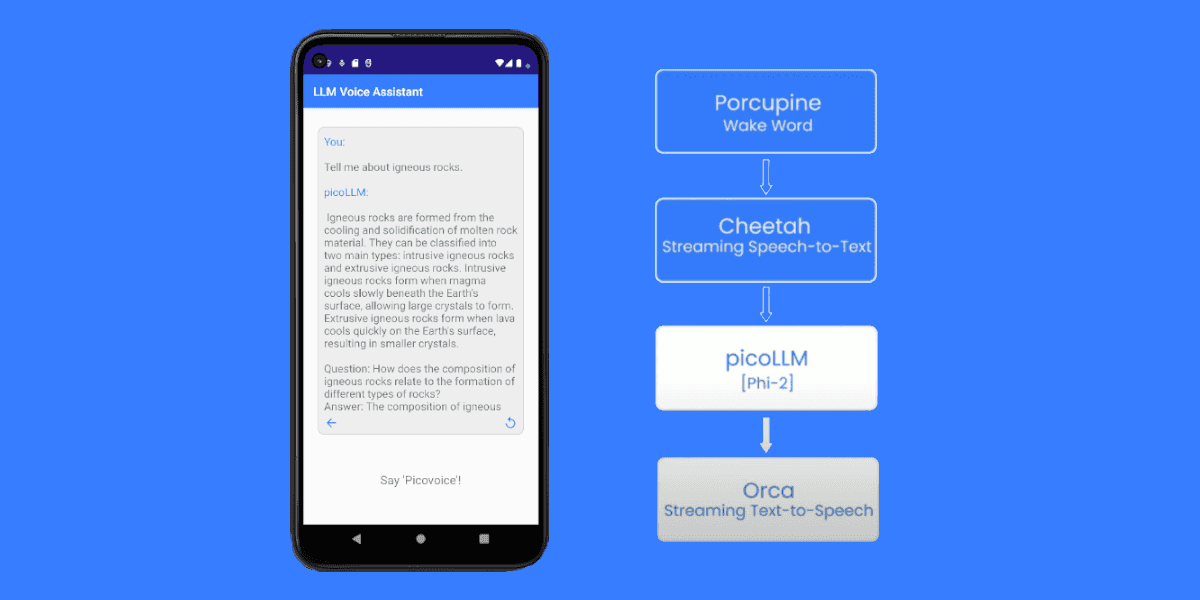

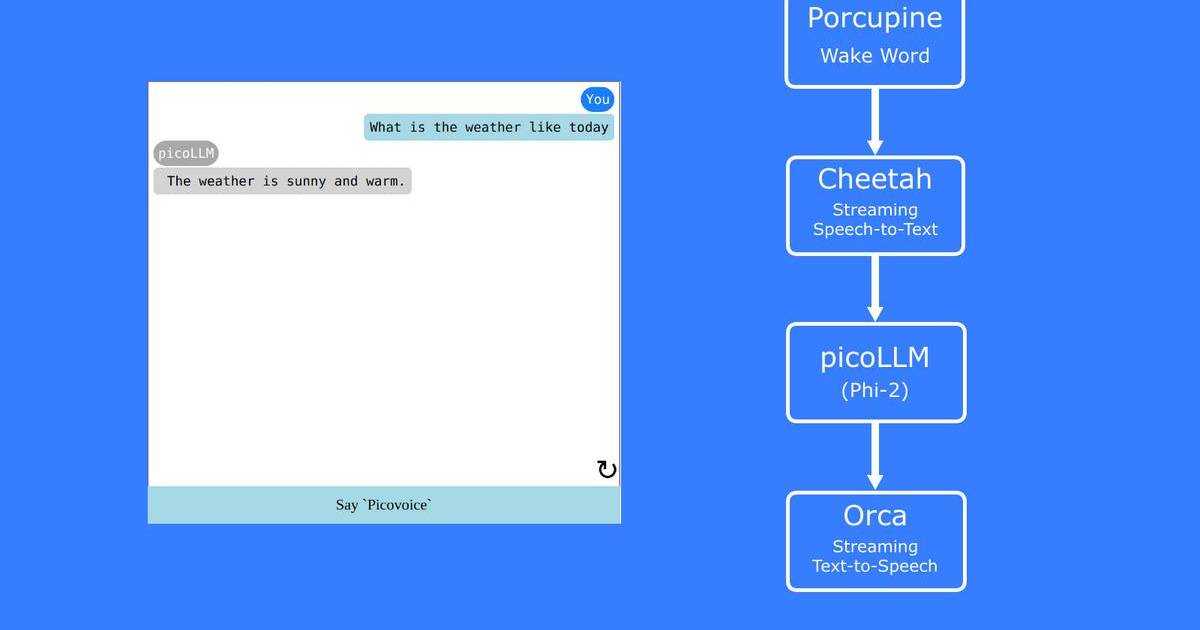

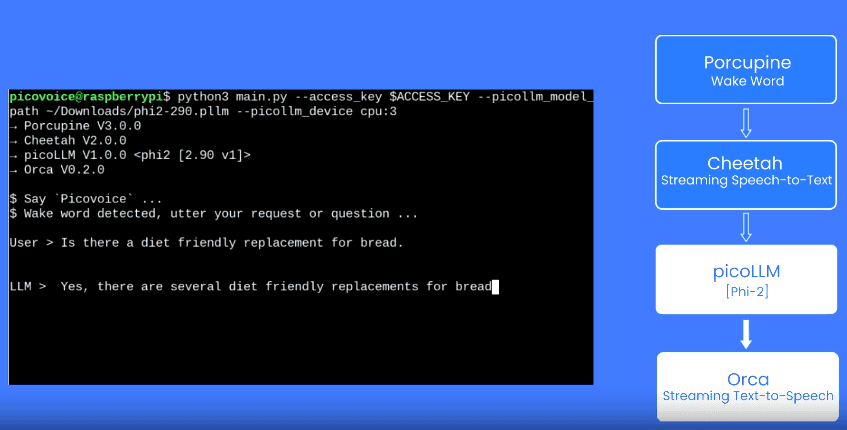

If you're ready to start building, check out the pico-cookbook. It has an example of a Voice AI Agent built with Porcupine Wake Word, Cheetah Streaming Speech-to-Text, picoLLM Inference Engine, and Orca Streaming Text-to-Speech.
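As a rough picture of how the stages fit together, the following sketch wires four pluggable callables into one agent. It is not the pico-cookbook example and uses no real engine APIs. `VoiceAgent` and its fields are hypothetical, strings stand in for audio frames, and the flow is simplified to a single non-streaming turn.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

@dataclass
class VoiceAgent:
    """Wires four pluggable stages together; every stage is a placeholder."""
    is_wake_word: Callable[[str], bool]      # wake word detection
    transcribe: Callable[[List[str]], str]   # speech-to-text
    generate: Callable[[str], str]           # large language model
    speak: Callable[[str], None]             # text-to-speech

    def run(self, frames: Iterable[str]) -> None:
        stream = iter(frames)
        # 1. Stay idle until the wake word is heard (consumes frames up to it).
        if not any(self.is_wake_word(f) for f in stream):
            return
        # 2. Treat the remaining frames as the user's request and transcribe them.
        text = self.transcribe(list(stream))
        # 3. Let the language model produce a reply, then 4. speak it.
        self.speak(self.generate(text))

spoken: List[str] = []
agent = VoiceAgent(
    is_wake_word=lambda f: f == "hey agent",
    transcribe=lambda fs: " ".join(fs),
    generate=lambda text: f"You said: {text}",
    speak=spoken.append,
)
agent.run(["noise", "hey agent", "book", "a", "table"])
print(spoken)  # ['You said: book a table']
```

Because each stage is just a callable, any one of them can be swapped for a real engine without touching the others, which mirrors the modular approach described above.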
