Voice commands that work in noise and never hallucinate

Noise-robust, deterministic speech-to-intent that fuses ASR and NLU into a single on-device model. Outperforms Google Dialogflow and Amazon Lex. No cloud. No network latency.


Deterministic voice commands for noisy, challenging environments

Most voice command systems run a two-step pipeline: speech-to-text converts audio to a transcript, then a separate NLU model parses that transcript for intent. Errors accumulate at each step, and latency compounds. This puts the user experience at risk when the product must work in a factory, in a vehicle, in a hospital, or anywhere without reliable connectivity.
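To make the compounding concrete, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that a command succeeds only if every stage is correct and that stage errors are independent; the accuracy figures are hypothetical, not benchmark results.

```python
def pipeline_accuracy(stage_accuracies: list[float]) -> float:
    """Probability that every stage in a serial pipeline is correct.

    Assumes stage errors are independent, which is a simplification:
    real ASR and NLU errors are often correlated.
    """
    result = 1.0
    for accuracy in stage_accuracies:
        if not 0.0 <= accuracy <= 1.0:
            raise ValueError("accuracy must be in [0, 1]")
        result *= accuracy
    return result


# Hypothetical numbers for a noisy environment.
asr_accuracy = 0.90  # fraction of commands transcribed well enough to parse
nlu_accuracy = 0.95  # fraction of usable transcripts mapped to the right intent

end_to_end = pipeline_accuracy([asr_accuracy, nlu_accuracy])
print(f"Two-stage pipeline: {end_to_end:.3f}")  # Two-stage pipeline: 0.855
```

Even with two reasonably accurate stages, the product is lower than either stage alone. This is only the intuition behind the claim, not a measurement.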

Rhino Speech-to-Intent is different. It's an end-to-end speech-to-intent engine with a single model that maps spoken audio directly to a structured intent with typed slot values. If the spoken command doesn't fit the defined context, Rhino returns nothing. No hallucinations. No intermediate transcript. No pipeline.
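The contract described above, a structured intent with slot values or nothing at all, can be sketched as a small data type. The `Inference` class, the `changeLightState` intent, its slots, and the `handle` function are hypothetical illustrations, not Rhino's SDK types; the official SDKs expose their own inference objects, so check the Picovoice docs for exact field names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Inference:
    """Hypothetical result of an end-to-end speech-to-intent engine."""
    is_understood: bool
    intent: Optional[str] = None
    slots: dict[str, str] = field(default_factory=dict)


def handle(inference: Inference) -> str:
    # Out-of-context speech yields no intent, so the app never acts on a guess.
    if not inference.is_understood or inference.intent is None:
        return "Did not understand the command. Please try again."
    if inference.intent == "changeLightState":
        state = inference.slots.get("state", "on")
        location = inference.slots.get("location", "all rooms")
        return f"Turning lights {state} in {location}"
    return f"No handler for intent {inference.intent!r}"


print(handle(Inference(True, "changeLightState", {"state": "off", "location": "kitchen"})))
print(handle(Inference(False)))
```

Because "not understood" is an explicit, empty result, the application code has a single, testable branch for unsupported speech instead of having to judge whether a transcript-based guess is plausible.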

Product teams define the domain, meaning the commands Rhino is expected to recognize, and Rhino handles the rest. It recognizes these predefined commands with high accuracy, even in noisy environments and without reliable connectivity.

Intent from speech in under 10 lines

A single SDK handles audio processing, model inference, and slot extraction. Rhino Speech-to-Intent provides SDKs for Python, Node.js, Android, iOS, React, Flutter, React Native, .NET, Java, and C, enabling custom voice commands across embedded, mobile, web, desktop, and server platforms.
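The typical integration is a frame loop: feed fixed-length frames of 16-bit PCM audio to the engine until it reports a finalized inference, then read the intent and slots. In the Python SDK (`pvrhino`) this shape appears as `pvrhino.create(...)` with an AccessKey and a context file, `process(frame)` returning whether the inference is finalized, and `get_inference()`; verify these names against the official docs. So the loop runs without the SDK, a microphone, or an AccessKey, the sketch below uses a hypothetical `StubRhino` that fakes the engine; its frame length and canned result are assumptions.

```python
from typing import Iterator, NamedTuple, Optional


class Inference(NamedTuple):
    is_understood: bool
    intent: Optional[str]
    slots: dict


class StubRhino:
    """Hypothetical stand-in that mimics a frame-by-frame intent engine.

    It "finalizes" after a fixed number of frames and returns a canned
    inference; a real engine decides from the audio itself.
    """
    frame_length = 512   # samples per frame (assumed value)
    sample_rate = 16000  # Hz

    def __init__(self, frames_until_final: int, result: Inference):
        self._remaining = frames_until_final
        self._result = result

    def process(self, pcm: list[int]) -> bool:
        if len(pcm) != self.frame_length:
            raise ValueError("each frame must contain exactly frame_length samples")
        self._remaining -= 1
        return self._remaining <= 0

    def get_inference(self) -> Inference:
        return self._result


def silent_frames(engine: StubRhino) -> Iterator[list[int]]:
    # Stands in for a microphone: yields frames of 16-bit PCM (all zeros here).
    while True:
        yield [0] * engine.frame_length


def listen(engine: StubRhino) -> Inference:
    # Feed frames until the engine reports a finalized inference.
    for frame in silent_frames(engine):
        if engine.process(frame):
            return engine.get_inference()
    raise RuntimeError("audio source ended")


engine = StubRhino(frames_until_final=3,
                   result=Inference(True, "orderCoffee", {"size": "large"}))
inference = listen(engine)
if inference.is_understood:
    print(inference.intent, inference.slots)
else:
    print("not understood")
```

Swapping the stub for a real engine and the zero frames for microphone audio keeps the loop itself unchanged.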

Proven accuracy in noise and across accents vs. Google Dialogflow and Amazon Lex

Rhino Speech-to-Intent is benchmarked against Google Dialogflow and Amazon Lex using 600+ real spoken commands from 50+ speakers with different accents, tested across signal-to-noise ratios (SNR) from 6 to 24 dB.
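SNR in decibels is 10·log10 of the ratio of signal power to noise power, so 6 dB means the speech carries roughly 4 times the noise power, while 24 dB means roughly 250 times. A common way to build noisy test sets is to scale a noise recording to a target SNR before adding it to clean speech. The sketch below shows that arithmetic on plain Python lists; it is an assumption about typical benchmark practice, not a description of Picovoice's exact procedure, and the toy sine "speech" and square-wave "noise" are placeholders.

```python
import math


def power(samples: list[float]) -> float:
    """Mean squared amplitude of a signal."""
    return sum(x * x for x in samples) / len(samples)


def snr_db(signal: list[float], noise: list[float]) -> float:
    """SNR in decibels: 10 * log10(P_signal / P_noise)."""
    return 10.0 * math.log10(power(signal) / power(noise))


def mix_at_snr(speech: list[float], noise: list[float], target_db: float) -> list[float]:
    """Scale `noise` so the speech-to-noise power ratio hits `target_db`, then add it."""
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as speech")
    noise = noise[: len(speech)]
    # Required noise power is P_speech / 10^(target/10); amplitude scales with sqrt(power).
    gain = math.sqrt(power(speech) / (power(noise) * 10 ** (target_db / 10.0)))
    return [s + gain * n for s, n in zip(speech, noise)]


# Toy placeholders: one second of a 440 Hz tone and an alternating +/-1 "noise".
speech = [math.sin(2 * math.pi * 440 * t / 16000) for t in range(16000)]
noise = [1.0 if t % 2 == 0 else -1.0 for t in range(16000)]

for target in (6, 24):
    mixed = mix_at_snr(speech, noise, target)
    residual = [m - s for m, s in zip(mixed, speech)]
    print(target, round(snr_db(speech, residual), 2))  # recovers the target SNR
```

Lower SNR means louder noise relative to speech, so the 6 dB end of the range is the harder condition.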

Why enterprises choose Rhino Speech-to-Intent

Rhino is an enterprise-ready on-device speech-to-intent engine built for applications that require accurate, noise-robust, and deterministic voice command recognition.

When a spoken command falls outside the defined context, Rhino returns isUnderstood: null, not a hallucinated guess. For safety-critical applications like medical devices, industrial equipment, and automotive interfaces, this determinism is non-negotiable.

Recipes

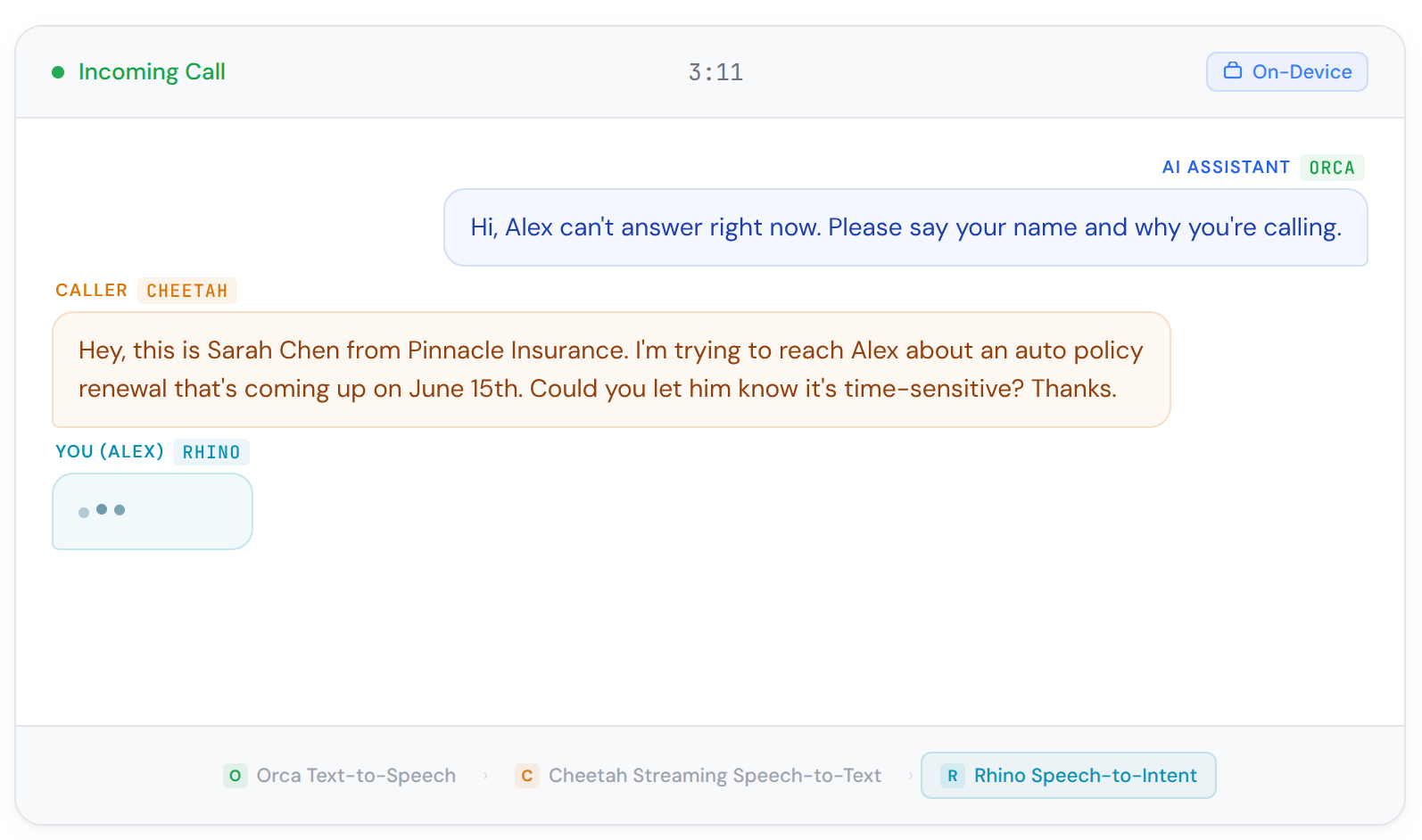

Call Screen

Screen incoming calls with voice activity detection, wake word activation, and text-to-speech responses. Block robocalls and spam without cloud processing.

Ship it.

On device.

Reliable, accurate, and lightweight voice command detection

Common questions about speech-to-intent

- Manufacturing floor voice commands

- Warehouse management systems

- Quality control voice interfaces

- Industrial equipment control

- Medical device voice control

- Patient data entry systems

- Hands-free clinical workflows

- HIPAA-compliant voice interfaces

- Voice-controlled appliances

- Home automation systems

- IoT device integration

- Custom voice assistants and AI agents

- In-vehicle voice commands

- Fleet management systems

- Navigation voice control

- Driver assistance interfaces

Natural language understanding deals with meaning, i.e., comprehending users' intent. Researchers initially focused on understanding user intent from text. Spoken language understanding is the more specific term for understanding user intent from speech, yet many in industry and research still use natural language understanding for both text and speech. This is mainly due to the conventional approach of running speech-to-text and natural language understanding engines in sequence.

Intent detection is a subtask of natural language processing and a critical component of any task-oriented system. Natural language understanding solutions match a user's utterance to one of a set of predefined classes by understanding the user's goal (i.e., intention). Once an utterance is matched to an intent, the software can initiate a task to achieve that goal. For example, users who want to turn the lights off may say "Turn the lights off.", "Switch off the lights.", or "Can you please turn the lights off?". Intent detection captures the same goal, "change the state of the lights from on to off", despite the different ways of expressing it.
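To illustrate the idea of many phrasings mapping to one intent, the toy matcher below maps the three example utterances to a single intent with a `state` slot. It works on text, unlike Rhino, which works on audio, and it is not Rhino's method; the intent name `changeLightState`, the slot name, and the keyword rule are hypothetical, and real NLU engines use far richer models or grammars.

```python
import re
from typing import Optional

# Hypothetical rule: a light-related noun plus an on/off word signals the intent.
_LIGHT_WORDS = {"light", "lights", "lamp", "lamps"}


def detect_intent(utterance: str) -> Optional[tuple[str, dict[str, str]]]:
    """Return (intent, slots) for light commands, or None if nothing matches."""
    words = re.findall(r"[a-z]+", utterance.lower())
    if not _LIGHT_WORDS.intersection(words):
        return None
    for word in words:
        if word in ("on", "off"):
            return "changeLightState", {"state": word}
    return None


for phrase in ["Turn the lights off.",
               "Switch off the lights.",
               "Can you please turn the lights off?",
               "What time is it?"]:
    print(f"{phrase!r:40} -> {detect_intent(phrase)}")
```

The first three phrasings produce the same intent and slot value, and the unrelated question produces None rather than a forced match.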

Speech-to-text converts spoken audio into a text transcript. Speech-to-intent maps a spoken command directly to a structured intent with typed slot values, with no transcript needed. Rhino Speech-to-Intent's end-to-end architecture skips the ASR-then-NLU pipeline entirely, which eliminates error accumulation between steps and significantly improves accuracy in noisy conditions. Learn more about the different approaches in Spoken Language Understanding, or read why Rhino is a better alternative to speech-to-text when building voice assistants.

Rhino Speech-to-Intent is a more accurate, resource-efficient, and faster alternative to Amazon Lex, Google Dialogflow, or other NLU engines for use-case-specific intent detection. Picovoice's Free Trial allows enterprises to evaluate Rhino Speech-to-Intent and compare it with the alternatives. If you're still not sure how Rhino Speech-to-Intent overcomes the limitations of Amazon Lex, Google Dialogflow, and other NLU engines, or you need help with migration, contact sales.

As the name suggests, Rhino Speech-to-Intent converts speech directly into intent, without relying on an intermediate text representation. It uses the modern end-to-end approach to infer intents and intent details directly from spoken commands. This enables developers to train jointly optimized automatic speech recognition (ASR) and natural language understanding (NLU) engines tailored to their specific domain, achieving higher accuracy.

Rhino Speech-to-Intent excels in use-case-specific applications with a limited number of commands, such as voice-enabled coffee machines or surgical robots, offering high accuracy with minimal resources. In contrast, open-domain applications like voice-enabled ChatGPT must handle a wide range of topics and phrasings. For such applications, we recommend Cheetah Streaming Speech-to-Text and picoLLM.

Intents, expressions, and slots are common concepts in conversational AI, used by engines such as Amazon Lex, IBM Watson, Google Dialogflow, and Rasa NLU to build voice assistants and bots. An intent is the goal behind a command, expressions are the phrasings that signal it, and slots capture its variable details, such as a color or a room. To start building, check out the Rhino Speech-to-Intent Syntax Cheat Sheet, or see the Picovoice Glossary to learn the terminology.
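The relationship between intents, expressions, and slots can be sketched as data plus a tiny expander. The notation is loosely modeled on the Rhino syntax (square brackets for alternatives, parentheses for optional words, `$slotType:slotName` for a slot), but the parser is a hypothetical illustration that handles only a flat subset; the Syntax Cheat Sheet is the authority on the real grammar. The context, intent, and slot names are made up.

```python
import itertools
import re

# Hypothetical context: one intent, one expression, one slot type.
CONTEXT = {
    "intents": {
        "changeLightState": ["[turn, switch] (the) lights $state:lightState"],
    },
    "slots": {
        "state": ["on", "off"],
    },
}

# Tokens: [alternatives], (optional), $slotType:slotName, or a plain word.
_TOKEN = re.compile(r"\[[^\]]*\]|\([^)]*\)|\$\w+:\w+|\S+")


def token_options(token: str, slots: dict[str, list[str]]) -> list[str]:
    """Return every surface form a single token can take."""
    if token.startswith("["):  # [a, b]: exactly one alternative
        return [t.strip() for t in token[1:-1].split(",")]
    if token.startswith("("):  # (a): optional word
        return [token[1:-1].strip(), ""]
    if token.startswith("$"):  # $slotType:slotName: any value of the slot type
        slot_type = token[1:].split(":")[0]
        return slots[slot_type]
    return [token]


def expand(expression: str, slots: dict[str, list[str]]) -> list[str]:
    """Enumerate the phrasings a flat expression covers."""
    options = [token_options(t, slots) for t in _TOKEN.findall(expression)]
    return [" ".join(word for word in combo if word)
            for combo in itertools.product(*options)]


for intent, expressions in CONTEXT["intents"].items():
    for expression in expressions:
        for phrase in expand(expression, CONTEXT["slots"]):
            print(f"{intent}: {phrase}")
```

Alternatives, optional words, and slot values multiply: this single expression covers 8 phrasings, all resolving to one intent with a `lightState` value.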

Yes. Rhino Speech-to-Intent processes all audio on-device. No audio is transmitted, no cloud connection is required, and no third-party data retention occurs.

Picovoice docs, blog, Medium posts, and GitHub are great resources to learn about voice AI, Picovoice technology, and how to start building with voice commands. Enterprise customers get dedicated support specific to their applications from Picovoice Product & Engineering teams. Reach out to your Picovoice contact or talk to sales to discuss support options.

Rhino Speech-to-Intent supports English, French, German, Italian, Japanese, Korean, Chinese (Mandarin), Portuguese, and Spanish.

Contact the sales team to get a custom language model trained for your use case.