Highly accurate and efficient voice activity detection

Detect speech instantly with ultra-low latency, 50× fewer false alarms than WebRTC VAD and 12× fewer than Silero, all while using minimal CPU. Lightweight, cross-platform, and enterprise-ready.

The only VAD that is accurate AND lightweight

Cobra Voice Activity Detection (VAD) is software that scans audio streams to identify the presence of human speech in real time, processing audio entirely on the local device.

Cobra is powered by Picovoice's proprietary deep learning stack, trained on thousands of hours of audio across diverse real-world noise conditions. It outputs a continuous voice probability score per audio frame, enabling fine-grained threshold control. WebRTC VAD uses Gaussian Mixture Model (GMM) signal processing from the early 2000s, and Silero VAD depends on PyTorch or ONNX Runtime. Cobra instead uses a proprietary deep neural network built and optimized end-to-end by Picovoice on a custom native inference engine, offering not only superior performance but also full flexibility.
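To make the idea of threshold control concrete, here is a minimal sketch that turns a list of per-frame voice probabilities into speech segments. The function name `speech_segments` and the scores are made up for illustration; they are not part of the Cobra SDK.

```python
from typing import List, Tuple


def speech_segments(probabilities: List[float], threshold: float) -> List[Tuple[int, int]]:
    """Return (start, end) frame index pairs (end exclusive) where the
    per-frame probability is at or above the threshold."""
    segments = []
    start = None
    for i, p in enumerate(probabilities):
        is_speech = p >= threshold
        if is_speech and start is None:
            start = i                      # a speech run begins
        elif not is_speech and start is not None:
            segments.append((start, i))    # the run ended on the previous frame
            start = None
    if start is not None:
        segments.append((start, len(probabilities)))  # run reaches the end
    return segments


# Hypothetical scores for ten consecutive frames (not real Cobra output).
scores = [0.05, 0.10, 0.62, 0.91, 0.88, 0.45, 0.93, 0.20, 0.07, 0.71]

print(speech_segments(scores, threshold=0.5))  # [(2, 5), (6, 7), (9, 10)]
print(speech_segments(scores, threshold=0.8))  # [(3, 5), (6, 7)]
```

A lower threshold catches more speech but lets more borderline frames through; a higher threshold does the opposite. The right value depends on the application.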

On-device voice detection in any application

Integrate real-time voice activity detection into your application in less than 10 minutes. Cobra VAD provides native SDKs for Android, C, .NET, iOS, Node.js, Python, and Web across embedded (MCU, MPU), mobile, desktop, server, and web browsers.
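As a rough sketch of what an integration loop can look like, the code below splits PCM audio into fixed-size frames and passes each frame to a `process` callable that returns a voice probability. The helper names and the `fake_process` stand-in are hypothetical, so the example runs without any SDK or access key. The commented lines show how the Python SDK might be wired in, assuming `pvcobra.create`, `process`, `frame_length`, and `delete` behave as described in the SDK documentation.

```python
from typing import Callable, Iterator, List, Sequence


def split_frames(pcm: Sequence[int], frame_length: int) -> Iterator[Sequence[int]]:
    """Yield consecutive fixed-size frames; a trailing partial frame is dropped."""
    for start in range(0, len(pcm) - frame_length + 1, frame_length):
        yield pcm[start:start + frame_length]


def voice_probabilities(
    pcm: Sequence[int],
    frame_length: int,
    process: Callable[[Sequence[int]], float],
) -> List[float]:
    """Run the per-frame detector over the whole buffer."""
    return [process(frame) for frame in split_frames(pcm, frame_length)]


def fake_process(frame: Sequence[int]) -> float:
    """Stand-in detector for illustration only: 'speech' means any large sample."""
    return 1.0 if max(abs(s) for s in frame) > 1000 else 0.0


# With the real SDK this might look like (assumption, check the SDK docs):
#   import pvcobra
#   cobra = pvcobra.create(access_key="YOUR_ACCESS_KEY")
#   probs = voice_probabilities(pcm, cobra.frame_length, cobra.process)
#   cobra.delete()

pcm = [0] * 512 + [5000] * 512 + [0] * 300   # two full frames plus a partial one
print(voice_probabilities(pcm, 512, fake_process))  # [0.0, 1.0]
```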

Industry-leading Accuracy and Efficiency

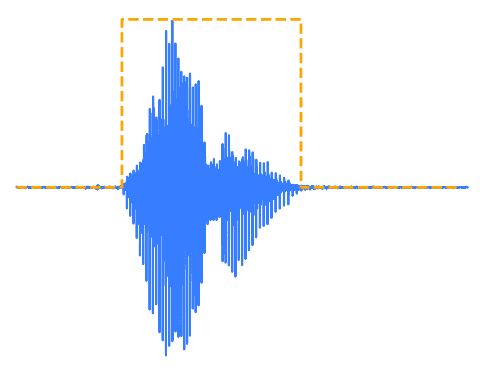

A good VAD, like Silero or WebRTC VAD, catches most speech and does not fire too often on noise. A great VAD, like Cobra VAD, produces fewer false alarms, so background noise or non-speech is less likely to trigger the detector.

Why enterprises choose Cobra Voice Activity Detection

Cobra Voice Activity Detection is built for production deployments that demand deep learning accuracy, extreme efficiency, and enterprise-grade reliability.

Ship it.

On device.

Fast, accurate, and lightweight voice activity detection

Common questions about voice activity detection

Voice activity detection (VAD) is a technology that identifies the presence or absence of human speech within an audio stream in real time. It is also known as speech activity detection, speech detection, or voice detection.

VAD works by analyzing incoming audio frames and classifying each one as speech or non-speech, either through traditional signal processing techniques like Gaussian Mixture Models (GMM), or through deep learning models that are significantly more accurate in noisy conditions. VAD is a foundational component in voice AI pipelines. It gates speech recognition to reduce unnecessary compute, enables natural turn-taking in voice interfaces, suppresses background processing during silence, and improves the responsiveness of voice assistants, conferencing systems, and embedded audio devices.

The best voice activity detector depends on your deployment requirements. For enterprise applications that need accuracy, cross-platform support, and production readiness, Cobra VAD is the top choice.

In an open-source benchmark that mixes LibriSpeech speech data with noise from the DEMAND dataset, Cobra achieves 98.9% true positive rate at 5% false positive rate and 0 dB SNR, compared to 87.7% for Silero VAD and 50% for WebRTC VAD. That is 50× fewer false alarms than WebRTC VAD and 12× fewer than Silero. Cobra also runs at RTF 0.037 on a Raspberry Pi Zero (3.7% CPU usage), making it viable on constrained embedded hardware.
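For readers unfamiliar with these metrics, the sketch below shows how a true positive rate and a false positive rate can be computed from per-frame labels and scores at one threshold. It is a generic illustration with made-up data, not the benchmark's own code, and the function name `tpr_fpr` is hypothetical.

```python
from typing import Sequence, Tuple


def tpr_fpr(labels: Sequence[int], scores: Sequence[float], threshold: float) -> Tuple[float, float]:
    """Compute (TPR, FPR) for frame-level decisions at a given threshold.

    labels: 1 for frames that truly contain speech, 0 otherwise.
    The input must contain at least one frame of each class.
    """
    tp = fn = fp = tn = 0
    for is_speech, score in zip(labels, scores):
        detected = score >= threshold
        if is_speech and detected:
            tp += 1          # speech correctly detected
        elif is_speech:
            fn += 1          # speech missed
        elif detected:
            fp += 1          # false alarm on non-speech
        else:
            tn += 1          # non-speech correctly rejected
    return tp / (tp + fn), fp / (fp + tn)


# Made-up labels and scores for eight frames.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2, 0.1, 0.05]

print(tpr_fpr(labels, scores, threshold=0.5))  # (0.75, 0.25)
```

Sweeping the threshold and picking the point where FPR equals 5% gives a fixed operating point at which different detectors' TPR values can be compared.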

Standard VAD detects the presence of any human speech. For speaker identification, you'd need a separate speaker recognition system that can work alongside VAD.

Check out Eagle Speaker Recognition for speaker recognition and voice identification.

Voice Activity Detection (VAD) detects any human speech, while Wake Word Detection listens for specific trigger phrases. VAD can be used to activate software whenever someone speaks, regardless of what is said. In contrast, Wake Word Detection only activates software when a particular word or phrase is spoken.

Check out Porcupine Wake Word for wake word detection and keyword spotting.

Voice ID, also known as voice biometrics or speaker recognition, identifies who is speaking by analyzing the unique vocal characteristics of pre-registered users. In contrast, VAD simply detects whether anyone is speaking, without identifying them.

Check out Eagle Speaker Recognition for speaker recognition and voice identification.

Traditional VAD algorithms, including WebRTC VAD, rely on Gaussian Mixture Models (GMMs), a statistical signal processing technique that struggles with non-stationary noise, babble, and real-world acoustic conditions. Newer open-source alternatives like Silero VAD use deep learning but depend on general-purpose runtimes (PyTorch, ONNX, TensorFlow) that were built for research and server inference, not edge deployment. This limits their accuracy ceiling and creates unnecessary overhead on constrained hardware.

Picovoice builds its entire stack from the ground up. Cobra uses a proprietary neural architecture trained on thousands of hours of diverse audio, with Picovoice owning the full data pipeline, training infrastructure, and runtime implementation. There are no third-party framework dependencies on any platform. Cobra is smaller, faster, and more accurate than the alternatives, proven by the open-source VAD benchmark.

By the numbers: at 5% false positive rate, Cobra achieves 98.9% true positive rate versus 87.7% for Silero and 50% for WebRTC VAD, which is 12× fewer false alarms than Silero and 50× fewer than WebRTC VAD. Cobra runs on a Raspberry Pi Zero using only 3.7% of CPU (RTF 0.037), and on an AMD Ryzen 9 5900X Cobra C is nearly 11× faster than Silero Python.

Voice activity detection works by analyzing short frames of audio and classifying each frame as containing human speech or not. Traditional approaches like WebRTC VAD use Gaussian Mixture Models (GMM), which analyze acoustic features such as energy levels. This approach is computationally efficient but brittle in noisy conditions: at 5% false positive rate, WebRTC VAD achieves only 50% true positive rate when speech is mixed with real-world noise.
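The frame-by-frame idea can be illustrated with a deliberately simple energy detector. This is not WebRTC VAD's GMM and not Cobra's model; it is a toy fixed-threshold classifier, with a hypothetical `-40 dB` threshold and made-up frames, meant only to show why energy-based decisions are cheap but brittle.

```python
import math
from typing import List, Sequence


def frame_energy_db(frame: Sequence[int]) -> float:
    """Mean-square energy of a frame of 16-bit samples, in dB relative to full scale."""
    mean_square = sum(s * s for s in frame) / len(frame)
    if mean_square == 0:
        return float("-inf")   # digital silence
    return 10 * math.log10(mean_square / (32768.0 ** 2))


def energy_vad(frames: Sequence[Sequence[int]], threshold_db: float = -40.0) -> List[bool]:
    """Classify each frame as speech (True) when its energy exceeds the threshold."""
    return [frame_energy_db(f) > threshold_db for f in frames]


# Made-up constant-amplitude frames standing in for real audio.
silent = [0] * 160
quiet = [10] * 160        # roughly -70 dB: below the threshold
speech = [8000] * 160     # roughly -12 dB: above the threshold
fan_noise = [3000] * 160  # roughly -21 dB: loud non-speech noise

print(energy_vad([silent, quiet, speech, fan_noise]))  # [False, False, True, True]
```

The last frame shows the weakness: anything loud enough passes as speech, because energy alone says nothing about what the sound is.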

Modern deep learning VADs like Cobra process audio frames through a neural network trained on thousands of hours of speech and noise data, learning noise-robust patterns that GMM-based models cannot capture. At 5% FPR, Cobra achieves 98.9% true positive rate — detecting nearly all speech while generating far fewer false alarms than traditional alternatives.

Cobra processes all audio entirely on the local device — no audio frames are transmitted to Picovoice or any third party at any point. Because the VAD operation is entirely local, no data processing agreement is required for the detection itself, and there is no risk of audio data being intercepted, logged, or exposed through a network dependency. Cobra is compliant with GDPR, HIPAA, and CCPA by architectural property: privacy is enforced by the design of the system, not by contractual overlay. Cobra can be deployed in air-gapped environments, on devices with no internet connectivity, and in regulated industries including healthcare, defence, and finance.

WebRTC VAD is Google's open-source voice activity detector, built on Gaussian Mixture Model (GMM) signal processing. It is lightweight and widely used, but its accuracy degrades significantly in noisy conditions. In an open-source benchmark at 5% false positive rate with speech mixed with real-world noise at 0 dB SNR, Cobra achieves 98.9% true positive rate versus 50% for WebRTC VAD — meaning Cobra produces 50× fewer false alarms at the same operating point. In practical terms: in a 1-hour call with 30 minutes of active speech, WebRTC VAD produces approximately 62 speech cut-offs versus 1–2 for Cobra. Unlike WebRTC VAD, which lacks official SDKs beyond web and C and requires community-maintained wrappers for other platforms, Cobra provides official production-ready SDKs for Android, iOS, Linux, macOS, Windows, Raspberry Pi, and Web. The benchmark code is publicly available at github.com/Picovoice/voice-activity-benchmark.

Silero VAD is an open-source deep learning VAD that outperforms WebRTC VAD, but not Cobra VAD. Cobra outperforms Silero on both accuracy and efficiency. On accuracy: at 5% FPR, Cobra (98.9% TPR) produces 12× fewer false alarms than Silero (87.7% TPR). On efficiency: Cobra Python (RTF 0.00171) is 2.5× more efficient than Silero Python (RTF 0.00429) on an AMD Ryzen 9 5900X; Cobra C (RTF 0.000399) is nearly 11× faster than Silero Python on the same hardware. On a Raspberry Pi Zero, Cobra runs at 3.7% CPU; Silero is impractical on this hardware due to its framework overhead.
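Real-time factor (RTF) is processing time divided by the duration of the audio processed, so an RTF of 0.037 means about 3.7% of one core's time. The sketch below shows one way to measure it and reproduces the ratios quoted above from the stated RTF values. The helper names are hypothetical, and the timing function is a generic illustration rather than the benchmark's method.

```python
import time
from typing import Callable, Sequence


def real_time_factor(
    process: Callable[[Sequence[int]], object],
    frames: Sequence[Sequence[int]],
    frame_duration_s: float,
) -> float:
    """Measured processing time divided by the duration of the audio processed."""
    start = time.perf_counter()
    for frame in frames:
        process(frame)
    elapsed = time.perf_counter() - start
    return elapsed / (len(frames) * frame_duration_s)


def speedup(rtf_baseline: float, rtf_candidate: float) -> float:
    """How many times less compute the candidate needs than the baseline."""
    return rtf_baseline / rtf_candidate


# RTF values quoted in the text (AMD Ryzen 9 5900X).
print(round(speedup(0.00429, 0.00171), 2))   # 2.51  (Silero Python vs Cobra Python)
print(round(speedup(0.00429, 0.000399), 2))  # 10.75 (Silero Python vs Cobra C)
```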

Cobra Voice Activity Detection works standalone and also pairs with other engines to enable several use cases. For example, developers combine Cobra Voice Activity Detection with Rhino Speech-to-Intent for QSR drive-thru voice assistants, with Cheetah Streaming Speech-to-Text for real-time agent coaching or LLM-powered voice assistants that start or stop responding depending on whether human speech or silence is detected, and with Leopard Speech-to-Text for cost-effective audio transcription.
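The start-or-stop behaviour for a voice assistant can be sketched as a small state machine over per-frame voice probabilities. The function `turn_events`, the threshold, and the three-frame hangover are illustrative assumptions, not Picovoice's implementation; the hangover keeps short pauses inside a sentence from ending the turn.

```python
from typing import List, Sequence, Tuple


def turn_events(
    probabilities: Sequence[float],
    threshold: float = 0.5,
    hangover_frames: int = 3,
) -> List[Tuple[str, int]]:
    """Emit ("start", i) when speech begins and ("end", i) once
    hangover_frames consecutive non-speech frames have been seen."""
    events = []
    in_speech = False
    silent_run = 0
    for i, p in enumerate(probabilities):
        if p >= threshold:
            silent_run = 0                 # any speech frame resets the pause counter
            if not in_speech:
                in_speech = True
                events.append(("start", i))
        elif in_speech:
            silent_run += 1
            if silent_run >= hangover_frames:
                in_speech = False
                silent_run = 0
                events.append(("end", i))  # the user has stopped talking
    return events


# Made-up scores: a short pause at frame 3 is bridged, a longer one ends the turn.
scores = [0.1, 0.9, 0.8, 0.2, 0.9, 0.1, 0.1, 0.1, 0.1, 0.7]
print(turn_events(scores))  # [('start', 1), ('end', 7), ('start', 9)]
```

An assistant could begin transcription on "start" and hand the utterance to the language model on "end". A "start" while the assistant is speaking can be treated as a barge-in.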

Yes. Cobra can be ported to microcontrollers (MCUs) and custom embedded targets beyond the standard SDK platforms. Cobra VAD's performance on Arm Cortex-M4 MCUs can be evaluated using the official Cobra VAD MCU demo running on Arduino Nano 33 BLE Sense and STM32F411E-DISCO. For other MCUs that need a specifically trained VAD model, contact Picovoice Sales to discuss your target platform and an NRE engagement.

Picovoice docs, blog, Medium posts, and GitHub are great resources to learn about voice AI, Picovoice technology, and how to start building voice-activated products. Enterprise customers get dedicated support specific to their applications from Picovoice Product & Engineering teams. Reach out to your Picovoice contact or contact sales to discuss support options.