TLDR: Build a voice survey for the web that captures Net Promoter Scores (NPS), multiple-choice selections, and open-ended customer feedback. This tutorial uses client-side spoken language understanding and streaming speech-to-text via WebAssembly to ensure privacy and accuracy without the latency or cost of cloud-based LLM APIs.

Build a Voice Survey Web App for Marketing and Customer Feedback

Voice surveys collect spoken responses instead of typed answers: product ratings, satisfaction scores, multiple-choice selections, and open-ended feedback, all captured through speech. Respondents dictate their answers, select choices, and explain their reasoning out loud, which can yield richer qualitative data and higher completion rates than text-only surveys. This tutorial builds a voice survey web app that runs entirely in the browser via WebAssembly. Audio never leaves the device, keeping all user responses private and secure.

How do Voice Surveys Work?

A voice survey needs to handle two fundamentally different response types: structured answers with known valid values (product ratings, multiple choices, yes/no selections), and open-ended feedback where the user provides detailed reasoning.

Most implementations handle both with a single speech-to-text pipeline, then route the transcript through regex, natural language processing (NLP), or an LLM to extract structured values. With regex or NLP, this two-step pipeline has a critical failure mode: the transcription layer doesn't know the valid response options, so it can mishear "four" as "for" or "eight" as "ate". When these pipelines fail silently, they leave behind corrupted data that's difficult to detect or correct after the session ends.

Routing the transcripts through an LLM can resolve these ambiguities from context, but for a survey with a fixed set of valid responses, an LLM adds unnecessary complexity, cost, and latency.

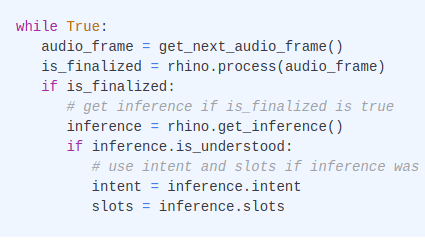

This tutorial replaces that approach with a deterministic architecture: spoken language understanding for structured questions and streaming speech-to-text for open-ended ones. A domain-specific speech-to-intent model matches audio directly against the survey's valid response options, so a spoken "four" always resolves to the value 4, not the word "for". Because there is no intermediate transcription layer to widen the surface area for errors, this architecture is simpler and more reliable than the multi-step STT-then-parse pipeline.

What You'll Build:

A customer feedback survey with four question types: an NPS rating (1–5), a multiple-choice satisfaction question, a yes/no question, and an open-ended follow-up. The same architecture extends to event feedback, patient satisfaction, employee engagement, and market research surveys.

What You'll Need:

- Node.js (download page)

- Picovoice AccessKey from the Picovoice Console

- A microphone-equipped laptop or desktop for testing

How to Process Voice Survey Responses Without an LLM

This tutorial uses four on-device voice models sharing a single microphone stream through the Web Voice Processor:

Cobra Voice Activity Detection runs continuously on the microphone stream, tracking voice probability in real time. Rather than keeping all voice models active at once, Cobra acts as the trigger — when it detects speech above the probability threshold, it activates the right model for the question type on screen.

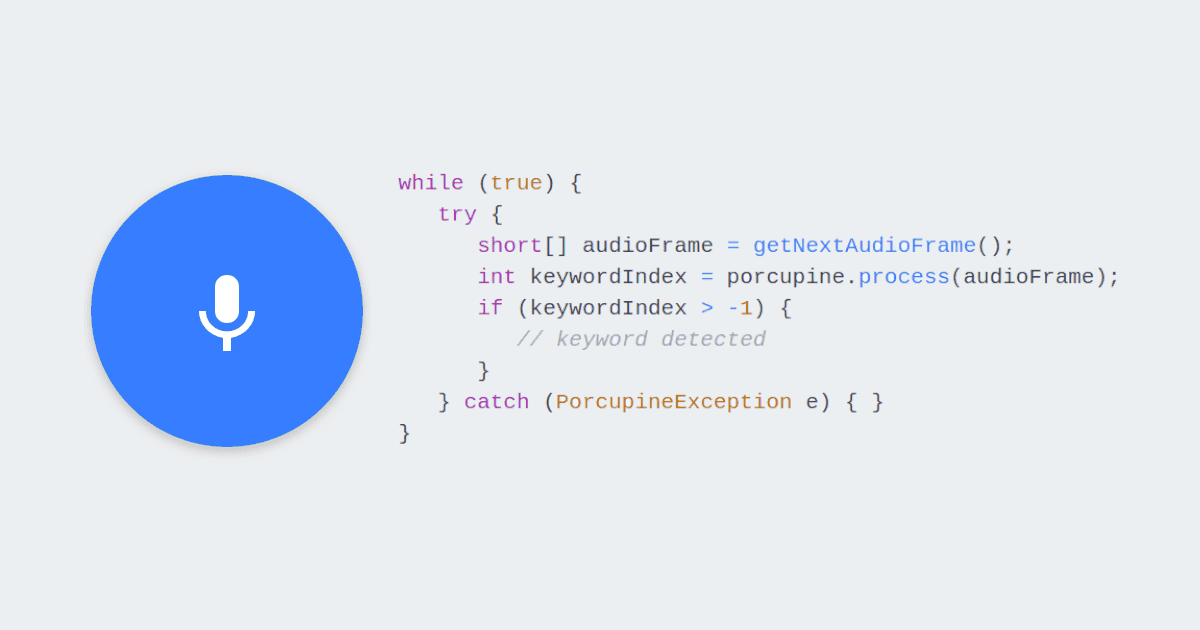

Rhino Speech-to-Intent handles NPS ratings, multiple-choice selections, and yes/no answers. It matches audio directly against a predefined set of valid responses and outputs structured data:
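As an illustration, a spoken "I'd give it a four" could produce an inference like the one sketched below. The field names (isUnderstood, intent, slots) and the giveRating intent with its score slot follow the way this tutorial describes the inference result later on; treat the exact object shape as an assumption and check it against the Rhino Web SDK version you install.

```javascript
// Illustrative inference for the utterance "I'd give it a four".
// The object shape is an assumption, not a copy of the SDK's type definition.
const exampleInference = {
  isUnderstood: true,
  intent: "giveRating",
  slots: { score: "4" }, // slot values arrive as strings
};

// Convert the slot string into the integer the survey stores.
const exampleRating = Number.parseInt(exampleInference.slots.score, 10);
console.log(exampleInference.intent, exampleRating);
```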

Invalid scores, out-of-range values, and hallucinated choices are structurally impossible — the model either returns a valid response or returns nothing.

Cheetah Streaming Speech-to-Text handles open-ended questions. It transcribes the respondent's speech in real time, displaying words as they speak.

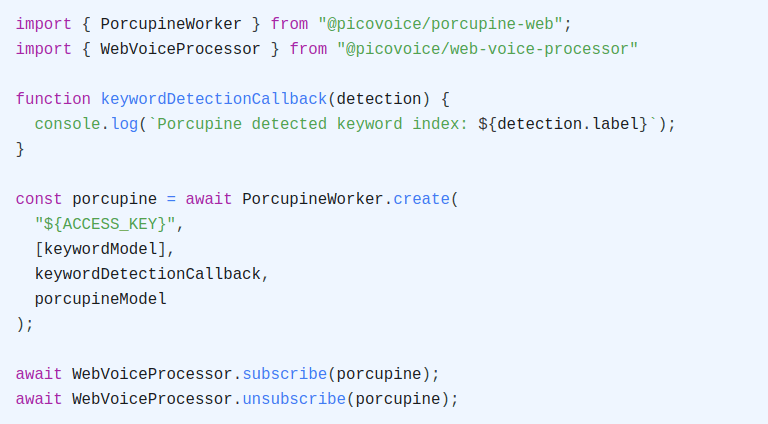

Porcupine Wake Word listens continuously, alongside Cobra Voice Activity Detection, for three navigation phrases: "Next Page", "Previous Page", and "Submit Survey".

To go further with the captured responses, e.g., summarizing feedback trends or analyzing responses, a local LLM like picoLLM can process the data without sending it to the cloud.

Set Up the Voice Survey Project

Initialize a new project (for example with npm init -y), then use npm install to add the speech SDKs and a local development server:

- @picovoice/cobra-web: Voice activity detection model

- @picovoice/porcupine-web: Keyword detection model for voice control

- @picovoice/rhino-web: Speech-to-intent model for structured responses

- @picovoice/cheetah-web: Streaming speech-to-text for open-ended questions

- @picovoice/web-voice-processor: Shared microphone audio pipeline

- http-server: Local server

Train Custom Keywords for Voice Control

1. Sign up for a Picovoice Console account and navigate to the Porcupine page.

2. Enter your keyword, such as "Submit Survey", and test it using the microphone button.

3. Click "Train", select "Web (WASM)" as the target platform, and download the .ppn model file into the project root.

4. Repeat steps 2 & 3 for the additional keywords:

   - "Next Page"

   - "Previous Page"

For tips on designing effective keywords, review the choosing a wake word guide.

Define Voice Commands for Survey Responses

The speech-to-intent model needs a context that maps spoken phrases to structured data. This section defines valid responses for each structured question in the survey.

1. In the Rhino section of Picovoice Console, create a new context for your survey.

2. Click the "Import YAML" button in the top-right corner of the Console. Paste the YAML below to add the survey response commands.

3. Train the context for the "Web (WASM)" platform and download the .rhn model file.

YAML Context for a Customer Feedback Survey:

This defines three response intents: giveRating for NPS scores, selectChoice for multiple-choice answers, and answerYesNo for binary questions. The giveRating intent uses Rhino's built-in pv.SingleDigitInteger slot, which automatically recognizes spoken numbers one through nine and returns the numeric value (e.g., "four" → "4"). The code validates that only scores between 1 and 5 are accepted.

The bracket syntax handles how people naturally speak in a survey context. "Four out of five", "I'd give it a four", "probably a four", and just "four" all resolve to the same intent with slot value 4. The voice model handles variation deterministically. To support additional phrasings, add more expressions to the YAML and retrain.

Refer to the Rhino Syntax Cheat Sheet for details on expression syntax, optional words, and slot types.

Download Default Voice Models

The web application requires default model files to initialize the specialized voice models locally via WebAssembly. Download the following parameter files and place them in the project root:

- Cobra VAD: cobra_params.pv

- Porcupine Wake Word: porcupine_params.pv

- Rhino Speech-to-Intent: rhino_params.pv

- Cheetah Streaming STT: cheetah_params.pv

Define Questions for Web Survey

Before building the UI, define the survey as a data structure. Each question specifies its type, the text shown to the respondent, and the response mode — either speech-to-intent for structured answers or speech-to-text for open-ended responses:
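A minimal sketch of such a structure might look like the following. The SURVEY_QUESTIONS name and the responseMode values come from this tutorial; the other field names (id, type, text, choices, min, max) and the question wording are placeholders assumed for illustration.

```javascript
// Hypothetical question definitions; ids, wording, and choices are placeholders.
const SURVEY_QUESTIONS = [
  {
    id: "rating",
    type: "rating",
    text: "On a scale of 1 to 5, how likely are you to recommend us?",
    responseMode: "intent", // handled by the speech-to-intent model
    min: 1,
    max: 5,
  },
  {
    id: "satisfaction",
    type: "choice",
    text: "How satisfied are you with the product?",
    responseMode: "intent",
    choices: ["very satisfied", "satisfied", "neutral", "dissatisfied"],
  },
  {
    id: "returning",
    type: "yesno",
    text: "Would you buy from us again?",
    responseMode: "intent",
  },
  {
    id: "feedback",
    type: "open",
    text: "What could we do better?",
    responseMode: "transcription", // handled by streaming speech-to-text
  },
];

// Quick overview of which model each question will use.
for (const question of SURVEY_QUESTIONS) {
  console.log(`${question.id}: ${question.responseMode}`);
}
```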

This structure makes it easy to add, remove, or reorder questions without changing any voice logic. Questions with responseMode: "intent" use the speech-to-intent model; questions with responseMode: "transcription" use the speech-to-text model. To build a different survey — event feedback, patient satisfaction, market research — update this array and the Rhino YAML context.

Create Voice Survey HTML File

Create an index.html file in the project root. The application loads all SDKs from node_modules:

The survey UI consists of three screens: a start screen with a button to begin, a question screen that renders each question type (rating bar, choice chips, yes/no buttons, transcription text area), and an end screen with a response summary. The complete HTML, CSS, and JavaScript are included at the end of this tutorial.

Add Voice Activity Detection for Automatic Activation

Cobra Voice Activity Detection runs continuously and detects when the user starts speaking. Based on the current question type, it activates either Rhino (for structured questions) or Cheetah (for open-ended questions):

The activateEngineForCurrentQuestion function checks the question's responseMode and subscribes the appropriate voice model:
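One way to sketch this selection step is shown below. The engines and the processor are passed in as arguments so the logic can be exercised without a microphone. In the app, processor would be the Web Voice Processor, and engines.rhino and engines.cheetah would be the initialized model instances. The argument names and the split into two functions are assumptions made for illustration.

```javascript
// Pick the engine that matches the question's responseMode.
function selectEngineForQuestion(question, engines) {
  if (question.responseMode === "intent") return engines.rhino;
  if (question.responseMode === "transcription") return engines.cheetah;
  return null; // unknown mode: leave every engine idle
}

// Subscribe the selected engine to the shared microphone stream.
// `processor` is any object exposing subscribe(engine), injected here
// so a stand-in can be used in tests.
async function activateEngineForCurrentQuestion(question, engines, processor) {
  const engine = selectEngineForQuestion(question, engines);
  if (engine === null) return null;
  await processor.subscribe(engine);
  return engine;
}
```

Keeping the selection separate from the subscription makes the routing rule easy to check on its own when new response modes are added.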

A voice-probability threshold of 0.5 per audio frame provides responsive activation. The keywordCooldownUntil check prevents Cobra Voice Activity Detection from immediately reactivating a model after a keyword is detected.
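The gating logic described here can be sketched as a small state machine. The 0.5 threshold and the keywordCooldownUntil name come from this tutorial; the createVoiceGate helper, the engineActive flag, and the injectable clock are assumptions added so the behavior can be tested in isolation. In the app, onVoiceProbability would serve as the voice-probability callback for Cobra.

```javascript
const VOICE_PROBABILITY_THRESHOLD = 0.5;

// `activate` starts the engine for the current question;
// `now` returns the current time in milliseconds (injectable for tests).
function createVoiceGate(activate, now = Date.now) {
  const state = { engineActive: false, keywordCooldownUntil: 0 };

  // Called once per audio frame with a probability between 0 and 1.
  // Returns true only when this frame triggered an activation.
  function onVoiceProbability(probability) {
    if (state.engineActive) return false; // an engine is already listening
    if (now() < state.keywordCooldownUntil) return false; // keyword just fired
    if (probability < VOICE_PROBABILITY_THRESHOLD) return false; // not speech
    state.engineActive = true;
    activate();
    return true;
  }

  return { state, onVoiceProbability };
}
```

Whatever code releases the engine (for example after an inference or when navigating) would reset state.engineActive to false so the next utterance can trigger again.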

Add Voice Control to Web Survey

Porcupine Wake Word listens for three navigation keywords continuously alongside Cobra. When a keyword is detected, it triggers the corresponding survey action:

Replace ${NEXT_PAGE_PPN}, ${PREVIOUS_PAGE_PPN}, and ${SUBMIT_SURVEY_PPN} with the filenames of your downloaded .ppn files.

The callback routes each keyword to its survey action. A short cooldown prevents Cobra from immediately reactivating a model after a keyword is processed:

When a keyword is detected while Rhino is active, the callback unsubscribes Rhino first. If detected during transcription, the callback stops Cheetah and cleans any keyword text that may have been transcribed.
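The routing and cooldown behavior described above could be sketched as follows. The survey object, its method names, and the one-second cooldown are hypothetical stand-ins for the app's own state and actions; the label strings are assumed to match the labels given to the keywords when Porcupine is initialized.

```javascript
// Assumed cooldown length; tune it to how quickly respondents resume speaking.
const KEYWORD_COOLDOWN_MS = 1000;

// `survey` is a hypothetical object bundling the app's state and actions.
function handleKeyword(label, survey, now = Date.now()) {
  // Release whichever engine is currently subscribed before navigating.
  if (survey.activeEngine === "rhino") {
    survey.stopRhino();
  } else if (survey.activeEngine === "cheetah") {
    survey.stopCheetah(); // also the place to clean keyword text from the transcript
  }
  survey.activeEngine = null;

  // Keep voice activity detection from re-triggering on the tail of the keyword.
  survey.keywordCooldownUntil = now + KEYWORD_COOLDOWN_MS;

  switch (label) {
    case "Next Page":
      survey.goNext();
      return "next";
    case "Previous Page":
      survey.goPrevious();
      return "previous";
    case "Submit Survey":
      survey.submit();
      return "submit";
    default:
      return null; // unrecognized label: no navigation
  }
}
```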

Clean Keyword Text from Transcription

Since Porcupine and Cheetah run simultaneously during open-ended questions, Cheetah may transcribe the keyword phrase (e.g., "next page") before Porcupine detects it. The cleanKeywordFromTranscription function strips these phrases from the end of the transcription:
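A minimal sketch of such a function is shown below, assuming the three navigation phrases used in this tutorial and that the phrase can only leak in at the very end of the text. The regular expression is one possible approach, not necessarily the one used in the complete listing.

```javascript
const NAVIGATION_PHRASES = ["next page", "previous page", "submit survey"];

// Matches one navigation phrase at the end of the text, ignoring case and
// any trailing whitespace or punctuation the transcriber may have added.
const TRAILING_KEYWORD = new RegExp(
  `\\s*\\b(?:${NAVIGATION_PHRASES.join("|")})[\\s.,!?]*$`,
  "i"
);

function cleanKeywordFromTranscription(text) {
  return text.replace(TRAILING_KEYWORD, "").trimEnd();
}

console.log(cleanKeywordFromTranscription("The checkout was slow next page"));
```

Anchoring the pattern to the end of the string means a respondent who says "I skipped to the next page of the form" in the middle of an answer keeps that sentence intact.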

Route Survey Responses with Spoken Language Understanding

When Cobra detects speech on a structured question, Rhino Speech-to-Intent activates and listens for a response. When it recognizes a complete utterance, the callback fires with the inference result:

The handleSurveyIntent function routes each intent to the correct response handler, updating the stored answer and highlighting the selected option in the UI:

For the giveRating intent, the built-in pv.SingleDigitInteger slot returns numeric strings directly — saying "four" produces { score: "4" }. The handler validates that only scores between 1 and 5 are accepted; values outside this range prompt the respondent to try again. Cobra Voice Activity Detection will reactivate Rhino Speech-to-Intent if the respondent speaks again, so they can correct their answer before navigating.
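The routing and validation described above might be sketched like this. The intent names and the score slot come from this tutorial; the choice and answer slot names, the responses object, the returned result shape, and the inference fields (isUnderstood, intent, slots) are assumptions for illustration. UI highlighting is left out.

```javascript
// Stores the answer for `questionId` in `responses` when the inference is valid.
function handleSurveyIntent(inference, responses, questionId) {
  if (!inference.isUnderstood) {
    return { accepted: false, message: "Sorry, I didn't catch that." };
  }
  const slots = inference.slots || {};

  switch (inference.intent) {
    case "giveRating": {
      // The slot arrives as a numeric string such as "4".
      const score = Number.parseInt(slots.score, 10);
      if (!Number.isInteger(score) || score < 1 || score > 5) {
        return { accepted: false, message: "Please give a rating from 1 to 5." };
      }
      responses[questionId] = score;
      return { accepted: true, value: score };
    }
    case "selectChoice": {
      if (!slots.choice) {
        return { accepted: false, message: "Please pick one of the options." };
      }
      responses[questionId] = slots.choice;
      return { accepted: true, value: slots.choice };
    }
    case "answerYesNo": {
      const answer = slots.answer === "yes";
      responses[questionId] = answer;
      return { accepted: true, value: answer };
    }
    default:
      return { accepted: false, message: "That response is not supported here." };
  }
}
```

Returning a result object instead of touching the page directly keeps the validation rules testable; the caller can use accepted and message to update the UI or prompt the respondent to try again.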

Transcribe Open-Ended Responses in Real Time

When Cobra Voice Activity Detection detects speech on an open-ended question, Cheetah Streaming Speech-to-Text subscribes to the microphone and transcribes the user's speech as they speak:

The callback receives partial transcripts as each audio frame is processed. Words appear in the text area in real time, giving the respondent immediate visual confirmation that their speech is being captured. When Cheetah detects a pause (endpoint), a line break is inserted.
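The accumulation step can be sketched as a pure function that the transcript callback would call for each update. The update shape, with a transcript string and an isEndpoint flag, is an assumption about what the callback receives; verify it against the Cheetah Web SDK version in use.

```javascript
// Append a partial transcript to the text shown so far.
// A line break is added when the engine reports an endpoint (a pause).
function appendTranscript(currentText, update) {
  let next = currentText + (update.transcript || "");
  if (update.isEndpoint && next.length > 0 && !next.endsWith("\n")) {
    next += "\n";
  }
  return next;
}

// Example: three updates arriving while the respondent speaks.
const exampleUpdates = [
  { transcript: "The setup " },
  { transcript: "was easy", isEndpoint: true },
  { transcript: "Support was slow" },
];
const exampleText = exampleUpdates.reduce(appendTranscript, "");
console.log(JSON.stringify(exampleText));
```

In the app, the returned string would be written to the text area after each update so the respondent sees words appear as they speak.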

Transcription is stopped and flushed when navigating away from the question:

Replace Placeholders in the Code

Locate and replace the following values in the index.html file:

- AccessKey: Replace ${ACCESS_KEY_HERE} with your AccessKey obtained from the Picovoice Console dashboard.

- Navigation Keywords: Replace ${NEXT_PAGE_PPN}, ${PREVIOUS_PAGE_PPN}, and ${SUBMIT_SURVEY_PPN} with the exact filenames of your downloaded Porcupine Wake Word .ppn files.

- Survey Context: Replace ${CONTEXT_FILE_NAME} with the filename of your custom Rhino Speech-to-Intent .rhn file.

Run the Voice Survey

Your project directory should contain:

Start the local server with the required cross-origin headers:

Open http://localhost:5000 in your browser. Click "Start Survey" to initialize the voice models and begin.

The click interface works in parallel with voice. Every rating, choice, and navigation action is available via mouse or touch. Respondents can mix both — speak a rating, then click "Next Page."

Complete Voice Survey Code

Here is the complete index.html with all HTML, CSS, and JavaScript:

Adapt the Survey for Different Use Cases

The data-driven question structure makes it straightforward to adapt this survey for different marketing and feedback scenarios. Update the SURVEY_QUESTIONS array and the Rhino YAML context to match your domain.

- Event Feedback: Replace the questions with event-specific prompts — "How would you rate today's event?", "Which session was most valuable?", "What topics would you like to see next time?" Add session names as slot values so respondents can reference specific sessions by voice.

- Patient Satisfaction: In healthcare settings, on-device processing simplifies compliance. No patient audio leaves the device. Questions can include satisfaction with wait times, care quality, and staff communication. The open-ended follow-up captures qualitative feedback that structured scales miss.

- In-Product Feedback: Embed the survey inside a web application triggered after a user completes a workflow. Voice captures the responses faster than typing, and all processing stays client-side.

- Kiosk / Point-of-Sale: A tablet at checkout asks three quick questions after purchase. On-device processing means the terminal works without reliable WiFi.

For each use case, the changes are the same: update the questions array, update the YAML with new intents and slots, retrain the Rhino Speech-to-Intent context, and add handling for any new intent names in the handleSurveyIntent function.
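When adapting the survey, a small consistency check can catch an intent that was added to the YAML and the questions but never wired into the handler. The sketch below assumes each intent question carries a hypothetical intent field and that handlers are kept in an object keyed by intent name; neither is required by the tutorial's architecture, and selectSession is an invented intent for the event feedback example.

```javascript
// Return the intent names used by questions that have no handler function.
function findUnhandledIntents(questions, handlers) {
  const missing = new Set();
  for (const question of questions) {
    if (question.responseMode !== "intent") continue;
    if (typeof handlers[question.intent] !== "function") {
      missing.add(question.intent);
    }
  }
  return [...missing];
}

// Hypothetical event feedback survey with one new intent.
const eventQuestions = [
  { id: "overall", responseMode: "intent", intent: "giveRating" },
  { id: "bestSession", responseMode: "intent", intent: "selectSession" },
  { id: "topics", responseMode: "transcription" },
];
const eventHandlers = { giveRating: () => {} };

console.log(findUnhandledIntents(eventQuestions, eventHandlers));
```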