Android speech recognition is the process of converting spoken audio captured on an Android device into text, structured intents, or keyword triggers, either on-device or via a cloud API. From voice-controlled smart home apps and hands-free fitness trackers to voice-powered field inspection tools and medical dictation software, Android powers voice-enabled applications across consumer and enterprise use cases. Building voice features into an Android app means choosing among fundamentally different technologies: wake word detection, voice activity detection, intent recognition, real-time speech-to-text, and batch transcription each solve a distinct problem.

This complete guide covers five Android speech recognition technologies with working Java code, compares the available Android speech recognition libraries in 2026, and walks through building a privacy-first, on-device, low-latency Android voice assistant.

Why on-device speech recognition matters:

- Privacy and Compliance: Cloud processing transmits every voice sample to external servers, placing audio data outside the app's control and inside third-party infrastructure. For healthcare voice applications subject to HIPAA, or any app subject to GDPR or CCPA, on-device processing keeps voice data on the device, which simplifies compliance by design.

- No Network Latency: Cloud-based voice AI adds a network round-trip at every stage of the pipeline: roughly 50 to 300 ms of delay under good conditions and around 2,000 ms on poor connections, and that delay compounds with each additional stage. On-device processing eliminates network latency entirely, delivering consistently low latency across voice AI applications.

- Reliable Speech Recognition in Any Environment: Cloud-based speech recognition performance fluctuates with network conditions, introducing variable latency and degraded quality in low-connectivity environments. On-device processing delivers consistent, predictable performance regardless of network quality.

For a deeper dive into on-device vs. cloud speech recognition trade-offs, see The Case for Voice AI on the Edge and On-Device Speech Recognition.

5 Major Voice AI Technologies for Android

There are five major types of Android speech recognition: wake word detection, voice activity detection (VAD), intent recognition, real-time speech-to-text, and batch transcription. Each serves a distinct role in a voice AI pipeline:

- Detect when a user is speaking -> Voice Activity Detection

- Recognize specific trigger phrases or words -> Wake Word Detection

- Understand voice commands and extract structured intent -> Intent Recognition

- Transcribe speech to text in real time -> Streaming Speech-to-Text

- Batch transcription of large volumes of audio data -> Batch Speech-to-Text

Wake Word Detection for Android

Wake word detection on Android listens continuously for a specific trigger phrase and activates the Android app only when detected, enabling hands-free interaction without a button press or screen tap. It's the entry point for voice assistants, accessibility apps, and IoT controllers.

Wake Word Detection Libraries for Android: Porcupine Wake Word, custom TensorFlow Lite models

See the complete guide to wake word detection and how to benchmark wake word engines before choosing one.

Voice Activity Detection for Android

Voice Activity Detection (VAD) on Android classifies audio frames as speech or non-speech in real time, without transcribing content. VAD can be used to filter silence in Android voice pipelines or trigger downstream processing only when speech is present, avoiding unnecessary computation during silence. It's typically a lightweight first stage in any Android voice pipeline.

VAD Libraries for Android: Cobra Voice Activity Detection, WebRTC VAD (requires Java Native Interface integration)

Intent Recognition for Android

Intent recognition on Android processes spoken audio and returns the user's intent and associated parameters. It can be used for in-car Android voice assistants, smart appliance apps, and industrial Android voice apps where the application needs to act on what was said rather than transcribe it. Speech-to-intent engines infer intent directly from audio, while pipelines that combine Automatic Speech Recognition (ASR) and Natural Language Understanding (NLU) first transcribe speech to text and then extract intent from that text.

Speech-to-Intent Libraries for Android: Rhino Speech-to-Intent, Amazon Lex, Google Dialogflow (with REST API)

For more information, compare voice command acceptance rates for Google Dialogflow, Amazon Lex, and Rhino Speech to Intent, and see the differences between conventional and end-to-end spoken language understanding.

Streaming Speech-to-Text for Android

Streaming speech-to-text on Android transcribes audio continuously as the user speaks, returning partial transcripts before the utterance is complete. It can be used for Android voice assistants, live captioning, and dictation software where immediate text output is required. Unlike batch transcription, text output is returned incrementally rather than after the full recording ends.

Streaming STT Libraries for Android: Cheetah Streaming Speech-to-Text, Amazon Transcribe Streaming, Google Speech-to-Text Streaming (with REST API), Azure Real-Time STT

Evaluate streaming STT engines on Word Accuracy, Punctuation Accuracy, and Word Emission Latency before choosing one.

Batch Transcription for Android

Batch speech-to-text on Android processes a complete audio file and returns a full transcript. It can be used for Android voice memo apps, meeting recordings, and field inspection apps where turnaround time matters less than transcription completeness. Unlike streaming STT, the entire audio context is available during inference.

Batch STT Libraries for Android: Leopard Speech-to-Text, Amazon Transcribe, Google Speech-to-Text (with REST API), Azure Speech

Compare batch STT accuracy across major engines on standardized datasets.
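Word Error Rate (WER), the metric behind accuracy figures such as Leopard's 9.7%, counts the word substitutions, deletions, and insertions needed to turn an engine's transcript into a reference transcript, divided by the number of reference words. A minimal Java sketch for spot-checking engines on your own recordings might look like the following. The class name is hypothetical, and the lowercase-and-split-on-whitespace normalization is a simplifying assumption; published benchmarks typically normalize punctuation and numbers more carefully.

```java
import java.util.Locale;

/** Hypothetical helper: word-level edit distance divided by reference length. */
final class WordErrorRate {
    private WordErrorRate() {}

    /** Lowercases and splits on whitespace (assumed normalization; punctuation is kept as-is). */
    static String[] tokenize(String text) {
        String trimmed = text.trim().toLowerCase(Locale.ROOT);
        return trimmed.isEmpty() ? new String[0] : trimmed.split("\\s+");
    }

    /** WER = (substitutions + deletions + insertions) / number of reference words. */
    static double compute(String reference, String hypothesis) {
        String[] ref = tokenize(reference);
        String[] hyp = tokenize(hypothesis);
        if (ref.length == 0) {
            throw new IllegalArgumentException("reference transcript must not be empty");
        }
        // dist[i][j] = minimum edits turning the first i reference words into the first j hypothesis words
        int[][] dist = new int[ref.length + 1][hyp.length + 1];
        for (int i = 0; i <= ref.length; i++) dist[i][0] = i; // delete every reference word
        for (int j = 0; j <= hyp.length; j++) dist[0][j] = j; // insert every hypothesis word
        for (int i = 1; i <= ref.length; i++) {
            for (int j = 1; j <= hyp.length; j++) {
                int substitution = dist[i - 1][j - 1] + (ref[i - 1].equals(hyp[j - 1]) ? 0 : 1);
                int deletion = dist[i - 1][j] + 1;
                int insertion = dist[i][j - 1] + 1;
                dist[i][j] = Math.min(substitution, Math.min(deletion, insertion));
            }
        }
        return (double) dist[ref.length][hyp.length] / ref.length;
    }
}
```

For example, the reference "turn on the kitchen lights" against the hypothesis "turn on kitchen light" has one deletion and one substitution, so WER is 2 / 5 = 0.4.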

Android for Voice Applications: Strengths and Limitations

Android is one of the most widely deployed mobile platforms in the world, with more than 3 billion active devices spanning phones, tablets, kiosks, industrial handhelds, and embedded systems. This makes it a strong target for voice AI development, but there are real trade-offs to understand before building.

Advantages of Building Android Voice Applications

- Massive device reach: Android runs on billions of devices across consumer and enterprise markets, from flagship phones to low-cost field terminals.

- Open ecosystem: Unlike iOS, Android gives developers direct access to raw audio input, background services, and system-level permissions, which makes always-on voice features like wake word detection more feasible to implement.

- Cross-manufacturer deployment: A single Android APK can target devices across manufacturers and purpose-built industrial hardware without platform-specific rewrites.

- Flexible audio pipeline: Android's AudioRecord API provides direct access to PCM audio frames, enabling integration with on-device voice AI engines or custom audio processing pipelines.

Challenges of Building Android Voice Applications

- Device fragmentation: Android runs on thousands of hardware configurations. Audio input quality, microphone placement, and background processing behavior can vary significantly across manufacturers and API levels.

- Background processing restrictions: Android can limit background execution to preserve battery life. Always-on listening on Android requires a foreground service with a persistent notification, which adds implementation complexity.

- Runtime permissions: Since Android 6.0 (API 23), microphone access requires an explicit runtime permission request. Handling permission denial and re-request flows adds extra work compared to declaring permissions in the manifest alone.

- Manufacturer customization: Some Android OEMs apply battery optimizations that can interrupt audio capture during extended sessions, which requires explicit handling on affected devices.

How to Build a Voice AI App on Android: 3 Architectural Decisions

Before choosing a library or writing code, Android developers should make three architectural decisions that shape every downstream implementation choice. These decisions are specific to Android's runtime model and apply regardless of which voice AI engine the app uses.

1. Foreground Service vs Activity-Scoped Voice AI

Voice AI on Android runs in one of two lifecycle models, and the decision dictates background behavior, battery impact, and manifest configuration.

Activity-scoped voice AI starts and stops with a user-visible screen. The engine runs inside an Activity or Fragment, audio capture halts when the user navigates away, and no persistent notification is required. This is the right choice for dictation fields, voice memo recorders, in-app voice search, and any feature that only needs to listen while the user is actively engaged. Implementation is simpler, battery impact is bounded to active use, and Android's background restrictions do not apply.

Foreground-service voice AI runs inside a Service with a persistent notification visible to the user. Audio capture continues when the app is in the background. Android 10 (API 29) introduced the foregroundServiceType attribute, and Android 14 (API 34) made declaring it mandatory: services that use the microphone must specify foregroundServiceType="microphone" in AndroidManifest.xml and declare the FOREGROUND_SERVICE_MICROPHONE permission. Android 12 (API 31) also restricts starting a foreground service while the app is in the background, except under specific exemptions. This is the right choice for wake word detection, accessibility apps, and always-listening features.

Many apps use both: a foreground service runs wake word detection continuously, and an Activity takes over for transcription once the wake word fires.

2. Single-Engine vs Chained-Pipeline Architecture

A single-engine Android app routes audio from the microphone directly to one voice AI engine: batch speech-to-text for voice memos, streaming speech-to-text for a dictation field, or wake word detection for hands-free activation. This pattern covers most voice features added to existing apps.

A chained-pipeline Android app routes audio through multiple engines, where each engine's output controls whether the next one runs. A typical always-listening voice assistant chains wake word detection → voice activity detection → intent recognition or streaming speech-to-text.

All real-time Picovoice engines (Porcupine, Cobra, Rhino, and Cheetah) share the same 16 kHz sample rate and 512-sample frame length, so one VoiceProcessor instance can feed every engine in the chain without resampling or opening a second microphone session. The state machine that routes frames between engines lives in application code, not in the SDK.
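At 16 kHz, a 512-sample frame holds 32 ms of audio (512 / 16,000 s). VoiceProcessor delivers frames of the requested length, but a custom capture path, for example reading from AudioRecord with an arbitrary buffer size, needs to re-chunk its reads before fanning frames out to the engines. A sketch of that step, assuming a hypothetical FrameChunker class and FrameSink callback that are not part of any Picovoice SDK:

```java
import java.util.Arrays;

/**
 * Hypothetical helper (not part of any Picovoice SDK): re-chunks PCM reads of any size
 * into the fixed-size frames that the real-time engines expect.
 */
final class FrameChunker {
    static final int SAMPLE_RATE = 16_000; // Hz
    static final int FRAME_LENGTH = 512;   // samples per engine frame

    /** Receives each completed frame; in an app this is where frames get routed to engines. */
    interface FrameSink {
        void onFrame(short[] frame);
    }

    private final short[] pending;
    private final FrameSink sink;
    private int pendingCount = 0;

    FrameChunker(int frameLength, FrameSink sink) {
        if (frameLength <= 0) throw new IllegalArgumentException("frameLength must be positive");
        this.pending = new short[frameLength];
        this.sink = sink;
    }

    /** Duration of one frame in milliseconds, e.g. 512 samples at 16 kHz = 32 ms. */
    static double frameDurationMs(int frameLength, int sampleRate) {
        return 1000.0 * frameLength / sampleRate;
    }

    /** Buffers count samples starting at offset and emits a copy of every frame that fills up. */
    void write(short[] samples, int offset, int count) {
        int index = offset;
        final int end = offset + count;
        while (index < end) {
            int toCopy = Math.min(pending.length - pendingCount, end - index);
            System.arraycopy(samples, index, pending, pendingCount, toCopy);
            pendingCount += toCopy;
            index += toCopy;
            if (pendingCount == pending.length) {
                sink.onFrame(Arrays.copyOf(pending, pending.length)); // copy: the buffer is reused
                pendingCount = 0;
            }
        }
    }

    /** Samples buffered but not yet emitted as a frame. */
    int pendingSamples() {
        return pendingCount;
    }
}
```

With AudioRecord, each read(buffer, 0, buffer.length) call returns the number of samples read, which would be passed as count; the sink is where the application's routing logic would hand each frame to whichever engine is active.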

3. Permission Request Timing

Android 6.0+ (API 23+) requires runtime permission for RECORD_AUDIO, and the architectural decision is when in the user flow to request it. The timing affects both the grant rate and permission retention across sessions.

Request at app launch guarantees the permission is available before any feature needs it, but increases the chance of denial because the user has no context for why the app wants microphone access. Denial here blocks every voice feature in the app.

Request at point of use surfaces the permission dialog the first time the user taps a voice feature. The user has clear context for the request, which tends to improve grant rates, but the app must handle the pre-permission state gracefully for every voice feature independently.

Request with rationale shows a custom explanation screen before triggering the system permission dialog. This is typically the highest-converting pattern for voice apps where microphone access is central to the product.

- For apps where voice AI is the primary interaction model, request at app launch with a rationale screen.

- For apps where voice is one of many features, request at point of use.

- Regardless of timing, the app must handle permission denial at runtime. Assuming a granted permission from a previous session can crash the voice pipeline.

Android Speech Recognition Libraries and APIs in 2026

Android developers have multiple speech recognition options, each with different trade-offs in capability, privacy, connectivity, and customization.

Native Android Speech Options

SpeechRecognizer API is Android's built-in speech recognition interface, available since API 8. It uses the device's default recognition engine (typically Google's, though OEMs may replace it), so behavior can vary and recognition may run on-device or in the cloud. It is designed for real-time microphone transcription and does not support developer-controlled VAD, wake word detection, custom models, batch transcription, or intent recognition. Starting in Android 13 (API 33), createOnDeviceSpeechRecognizer() provides the same interface but forces on-device recognition and fails if no compatible local engine is available.

ML Kit GenAI Speech Recognition is Google's on-device speech API powered by Gemini Nano via Android AICore, available in alpha as of 2026. It requires API level 26 or higher to integrate. Basic mode, which uses the traditional on-device model, is available on most devices with API level 31 and higher. Advanced mode, which uses the Gemini Nano model for broader language coverage, is currently limited to Pixel 10 devices. Both modes support streaming transcription from microphone or audio file input and run on-device. Because the API is in alpha, it is not recommended for production use and may change or be deprecated without notice.

Cloud-Based Android Speech Recognition Options

Amazon Transcribe offers two options for Android: an Android AAR (com.amazonaws:aws-android-sdk-transcribe) for batch transcription of audio stored in Amazon S3, and a Java SDK (software.amazon.awssdk:transcribestreaming) for real-time streaming via HTTP/2 that is not purpose-built for Android and requires developers to implement audio capture and integration.

Google Cloud Speech-to-Text is accessible on Android via direct REST API calls, because the Java client library is not designed for Android environments. It supports streaming and batch transcription, but requires developers to implement authentication and audio capture.

Microsoft Azure Speech SDK provides an official Android AAR (com.microsoft.cognitiveservices.speech) with Java support from API 26. It supports real-time speech-to-text and batch transcription, along with custom keyword recognition trained via Azure Speech Studio. Keyword detection runs on-device, while speech-to-text processing occurs in the cloud. Voice activity detection is available as part of audio input processing but is not a standalone API.

Amazon Lex is Amazon's cloud-based conversational AI service for intent recognition and slot extraction from text or speech. Lex V2 is accessible on Android via AWS Amplify or direct REST API calls. It is not a purpose-built Android library and does not include built-in audio capture utilities.

Google Dialogflow is Google's cloud-based natural language understanding service for intent recognition and entity extraction from text or audio input. It is accessible on Android via direct REST API calls, since the Java client library explicitly does not support Android. It does not include built-in audio capture utilities.

Azure Conversational Language Understanding is Microsoft's cloud-based intent recognition service, replacing the retired LUIS. It is accessible on Android via REST API and is typically used downstream of the Azure Speech SDK for speech-to-intent pipelines.

On-Device Android Speech Recognition Options

WebRTC VAD is Google's open-source voice activity detection library, widely used in communication applications. It is implemented in C and has no official Android SDK; integration typically requires manual Java Native Interface (JNI) bindings or third-party wrappers.

Vosk is an open-source speech recognition toolkit with an official Android AAR on Maven Central (com.alphacephei:vosk-android). It supports 20+ languages, runs offline, includes basic voice activity detection, and supports streaming transcription. Wake word detection and intent recognition are not supported. Its accuracy is generally lower than cloud alternatives on standard benchmarks, and the project has limited active maintenance compared to commercial options.

Picovoice is the only widely available comprehensive, on-device voice AI platform with official Android support across all major speech recognition capabilities. All engines ship as official Maven dependencies and process audio entirely on-device. Wake word detection, voice command recognition, and speech-to-text models can be customized via Picovoice Console without any ML expertise.

- Porcupine Wake Word: Custom wake word detection

- Cobra Voice Activity Detection: Voice activity detection

- Rhino Speech-to-Intent: Voice command recognition

- Cheetah Streaming Speech-to-Text: Real-time transcription

- Leopard Speech-to-Text: Batch transcription

The rest of this guide covers how to integrate each Picovoice speech recognition engine into an Android app using Java, starting with project setup.

Android Project Setup for Speech Recognition

Before integrating any Picovoice engine, ensure the project meets the following requirements:

- Android 5.0+ (API 21+)

- A Picovoice AccessKey: sign up for a Free Trial via the Picovoice Console to get one

To add the Picovoice SDKs to your project, include mavenCentral() in the repositories block of your top-level build.gradle.

All real-time Picovoice engines capture microphone audio via the Android Voice Processor library. Add it to your app's build.gradle as a dependency on ai.picovoice:android-voice-processor.

Then declare the microphone permission (android.permission.RECORD_AUDIO) in your AndroidManifest.xml.

How to Add Speech Recognition to an Android App: Code Examples

Adding Voice Activity Detection to Android Apps

Best for: Voice pipelines where processing should only run when speech is present, voice assistants, always-on apps, battery-sensitive Android deployments

Why developers choose it: On Android, running heavy engines over continuous audio drains battery and wastes compute. Cobra Voice Activity Detection detects the presence of human speech in real time, which makes it useful for gating downstream engines like wake word, STT, or intent recognition, or for filtering silence and controlling when recording starts and stops. It runs with a real-time factor as low as 0.0039 and twice the accuracy of WebRTC VAD.

- Add the Cobra Voice Activity Detection dependency:

- Create an instance of the VAD engine:

- Pass audio frames to the .process function:

Full Code for Android Voice Activity Detection

For further details, visit the Cobra Voice Activity Detection product page or refer to Cobra's Android SDK quick start guide.
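Cobra's process call returns a voice probability between 0 and 1 for each frame rather than a yes/no decision, so the app decides how probabilities become speech segments. One common approach, sketched below as a hypothetical helper, combines a threshold with a hangover: speech starts on the first frame at or above the threshold and ends only after a run of quiet frames, so short pauses between words do not split an utterance. The threshold and hangover length are assumptions to tune per deployment.

```java
/** Hypothetical gate that turns per-frame voice probabilities (e.g. from Cobra) into speech events. */
final class VoiceActivityGate {
    enum Event { NONE, SPEECH_STARTED, SPEECH_ENDED }

    private final float threshold;
    private final int hangoverFrames;
    private boolean inSpeech = false;
    private int quietFrames = 0;

    /**
     * @param threshold      probability at or above which a frame counts as speech (e.g. 0.5, assumed)
     * @param hangoverFrames consecutive quiet frames required before speech is considered over
     */
    VoiceActivityGate(float threshold, int hangoverFrames) {
        this.threshold = threshold;
        this.hangoverFrames = hangoverFrames;
    }

    /** Feed one frame's voice probability; returns a transition event, or NONE. */
    Event update(float voiceProbability) {
        boolean voiced = voiceProbability >= threshold;
        if (!inSpeech) {
            if (voiced) {
                inSpeech = true;
                quietFrames = 0;
                return Event.SPEECH_STARTED;
            }
            return Event.NONE;
        }
        if (voiced) {
            quietFrames = 0; // any voiced frame resets the hangover countdown
            return Event.NONE;
        }
        quietFrames++;
        if (quietFrames >= hangoverFrames) {
            inSpeech = false;
            quietFrames = 0;
            return Event.SPEECH_ENDED;
        }
        return Event.NONE;
    }

    boolean isInSpeech() {
        return inSpeech;
    }
}
```

In a frame listener this would be called as gate.update(cobra.process(frame)); at 32 ms per frame, a hangover of 15 frames tolerates about 480 ms of silence before reporting SPEECH_ENDED.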

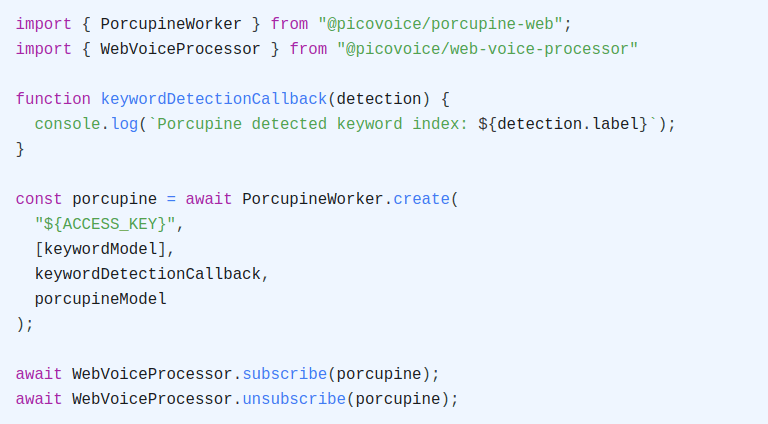

Adding Wake Word Detection to Android Apps

Best for: Always-on listening in Android foreground services, hands-free app activation, keyword spotting, replacing push-to-talk in field and industrial apps

Why developers choose it: Android's background processing restrictions mean always-on listening must run inside a foreground service. Porcupine Wake Word's lightweight footprint makes it suitable for continuous background listening on Android without significant battery impact. Because wake word detection runs fully on-device, audio stays local even when the feature is always listening — a privacy-first default that cloud wake word APIs cannot match.

Picovoice also offers a PorcupineManager high-level wrapper that handles audio capture internally; this guide uses the low-level Porcupine Wake Word API with VoiceProcessor for a consistent pattern across all five engines.

- Add the Porcupine Wake Word dependency:

- Train a custom wake word model using Picovoice Console, download the .ppn file, and copy it into your Android assets folder (${ANDROID_APP}/src/main/assets).

- Initialize the wake word engine:

- Detect the keyword by passing audio frames to .process:

Full Code for Android Wake Word Detection

For further details, visit the Porcupine Wake Word product page or refer to Porcupine's Android SDK quick start guide.
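Porcupine's process call returns the index of the detected keyword, in the order the keyword files were supplied at initialization, or -1 when no keyword is present. A small hypothetical dispatcher can turn that index into a name and, optionally, ignore further detections for a short cooldown while the app hands the microphone to the next stage. The cooldown is an application-level assumption, not a Porcupine setting.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/**
 * Hypothetical handler for wake word results delivered as a keyword index per frame
 * (-1 when nothing was detected). Not part of the Porcupine SDK.
 */
final class WakeWordDispatcher {
    private final List<String> keywordNames; // same order as the .ppn files given to the engine
    private final int cooldownFrames;        // frames to ignore after each detection
    private int framesUntilReady = 0;

    WakeWordDispatcher(List<String> keywordNames, int cooldownFrames) {
        this.keywordNames = Collections.unmodifiableList(new ArrayList<>(keywordNames));
        this.cooldownFrames = cooldownFrames;
    }

    /** Call once per frame with the engine's return value; returns the keyword name or null. */
    String onFrameResult(int keywordIndex) {
        if (framesUntilReady > 0) {
            framesUntilReady--;
            return null; // still cooling down from the previous detection
        }
        if (keywordIndex < 0) {
            return null; // no keyword in this frame
        }
        if (keywordIndex >= keywordNames.size()) {
            throw new IllegalArgumentException("unexpected keyword index " + keywordIndex);
        }
        framesUntilReady = cooldownFrames;
        return keywordNames.get(keywordIndex);
    }
}
```

At 32 ms per frame, a one-second cooldown is roughly 31 frames; the per-frame call would be dispatcher.onFrameResult(porcupine.process(frame)).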

Adding Intent Recognition to Android Apps

Best for: Voice control and in-app navigation in Android apps, e.g. smart home controllers, in-car interfaces, industrial handhelds, kiosk applications

Why developers choose it: Rhino Speech-to-Intent infers intent and slot values directly from audio in a single pass, avoiding the speech-to-text + natural language understanding two-step approach that can compound latency and error rates. It achieves 97% accuracy in noisy environments and a 20% higher command acceptance rate than Google Dialogflow. Because Rhino runs on-device, spoken commands and inferred intents never leave the user's phone — a privacy-first design critical for in-car assistants, medical devices, and industrial apps handling sensitive data.

Picovoice also offers a RhinoManager high-level wrapper that handles audio capture internally; this guide uses the low-level Rhino Speech-to-Intent API with VoiceProcessor for a consistent pattern across all five engines.

- Add the Rhino Speech-to-Intent dependency:

- Train a custom context model using Picovoice Console, download the .rhn file, and copy it into your Android assets folder.

- Initialize the Speech-to-Intent engine:

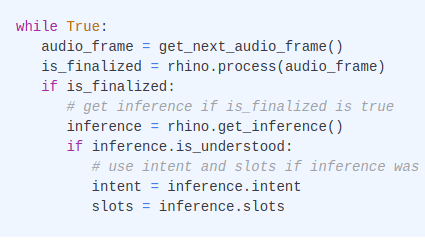

- Infer the user's intent:

Full Code for Android Intent Recognition

For further details, visit the Rhino Speech-to-Intent product page or refer to Rhino's Android SDK quick start guide.
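When Rhino finalizes an inference, the app receives an intent name plus a map of slot values instead of free text, so acting on a command becomes a lookup. In Rhino's Android SDK, the RhinoInference object exposes getIsUnderstood(), getIntent(), and getSlots(); the router below takes those three values as plain arguments so it can be read without the SDK. The class, the handler interface, and the intent and slot names in the usage note are hypothetical, since they depend entirely on the context designed in Picovoice Console.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical router from a finalized spoken intent (name + slot values) to app actions. */
final class IntentRouter {
    /** App-defined action; returns a short status message for the UI. */
    interface IntentHandler {
        String handle(Map<String, String> slots);
    }

    private final Map<String, IntentHandler> handlers = new HashMap<>();

    /** Registers the action for one intent name from the context; returns this for chaining. */
    IntentRouter register(String intent, IntentHandler handler) {
        handlers.put(intent, handler);
        return this;
    }

    /**
     * @param isUnderstood whether the utterance matched the context at all
     * @param intent       intent name, only meaningful when isUnderstood is true
     * @param slots        slot name -> spoken value
     */
    String route(boolean isUnderstood, String intent, Map<String, String> slots) {
        if (!isUnderstood) {
            return "Sorry, I didn't catch that.";
        }
        IntentHandler handler = handlers.get(intent);
        if (handler == null) {
            return "No handler for intent: " + intent;
        }
        return handler.handle(slots);
    }
}
```

Usage, assuming a context with a changeLightState intent and a location slot: register a handler with router.register("changeLightState", s -> "Lights updated in " + s.get("location")), then call router.route(inference.getIsUnderstood(), inference.getIntent(), inference.getSlots()) once rhino.process(frame) returns true.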

Adding Real-Time Transcription to Android Apps

Best for: Live captions, voice input fields, Android voice assistants, agentic AI apps that respond to spoken input in real time

Why developers choose it: Cheetah Streaming Speech-to-Text runs entirely on-device, eliminating the network round-trip that makes cloud STT latency unpredictable. Cheetah Streaming Speech-to-Text outperforms Google Streaming Speech-to-Text on word accuracy, punctuation accuracy and word emission latency — all while running locally.

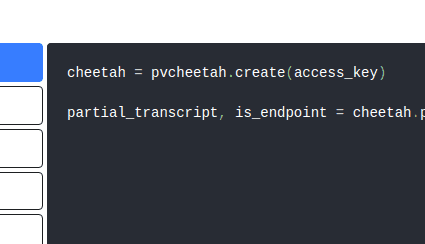

- Add the Cheetah Speech-to-Text dependency:

- Download the .pv language model file from the Cheetah GitHub repository and copy it into your Android assets folder.

- Initialize the streaming speech-to-text engine:

- Transcribe speech in real time:

Full Code for Android Real-Time Transcription

For further details, visit the Cheetah Streaming Speech-to-Text product page or refer to Cheetah's Android SDK quick start guide.
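Each Cheetah process call returns only the text newly recognized in that frame (often an empty string) along with an endpoint flag, and at an endpoint the engine's flush call returns any remaining text. The app therefore has to stitch partial results into utterances. The hypothetical accumulator below sketches that bookkeeping in plain Java, taking the partial text, endpoint flag, and flushed text as arguments rather than the SDK's CheetahTranscript type.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

/** Hypothetical helper that stitches streaming partial transcripts into finished utterances. */
final class TranscriptAccumulator {
    private final StringBuilder current = new StringBuilder();
    private final List<String> utterances = new ArrayList<>();

    /**
     * @param partialText newly emitted text for this frame (may be empty)
     * @param isEndpoint  true when the engine detected the end of an utterance
     * @param flushedText text returned by the engine's flush at an endpoint; pass "" otherwise
     * @return the in-progress utterance to show live in the UI (empty right after an endpoint)
     */
    String onFrame(String partialText, boolean isEndpoint, String flushedText) {
        current.append(partialText);
        if (isEndpoint) {
            current.append(flushedText);
            String utterance = current.toString().trim();
            if (!utterance.isEmpty()) {
                utterances.add(utterance);
            }
            current.setLength(0); // start a fresh utterance
        }
        return current.toString();
    }

    /** Completed utterances, oldest first. */
    List<String> finishedUtterances() {
        return Collections.unmodifiableList(utterances);
    }
}
```

In a frame listener, the arguments would come from transcript.getTranscript(), transcript.getIsEndpoint(), and, only when the endpoint flag is set, cheetah.flush().getTranscript().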

Adding Batch Transcription to Android Apps

Best for: Transcribing audio recordings stored on the device, voice memos, recorded calls, field inspection audio, meeting recordings.

Why developers choose it: Batch transcription fits workflows where audio is captured first and transcribed after the fact. Leopard Speech-to-Text processes audio files entirely on-device without sending data to a server, making it suitable for offline transcription of voice memos, recorded calls, and field inspection audio in environments with poor or no connectivity. Leopard Speech-to-Text achieves a 9.7% Word Error Rate, outperforming Whisper Tiny and Whisper Base on accuracy while running entirely on-device.

- Add the Leopard Speech-to-Text dependency:

- Download the .pv language model file from the Leopard GitHub repository and copy it into your Android assets folder.

- Create an instance of Leopard:

- Transcribe an audio file:

Full Code for Android Batch Transcription

For further details, visit the Leopard Speech-to-Text product page or refer to Leopard's Android SDK quick start guide.
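Batch transcription pairs naturally with record-first workflows such as voice memos. If the app captures 16 kHz mono 16-bit frames itself, one option is to save them as a standard WAV file (a 44-byte RIFF header followed by little-endian samples) and hand the file path to Leopard later. The writer below is a hypothetical plain-Java sketch of that file format, not part of any Picovoice SDK, and it assumes the whole recording fits in memory.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

/** Hypothetical helper: writes 16-bit mono PCM as a canonical 44-byte-header WAV stream. */
final class WavWriter {
    private WavWriter() {}

    static void write(short[] samples, int sampleRate, OutputStream out) throws IOException {
        final int channels = 1;
        final int bitsPerSample = 16;
        final int blockAlign = channels * bitsPerSample / 8; // bytes per sample frame
        final int byteRate = sampleRate * blockAlign;
        final int dataSize = samples.length * blockAlign;

        ByteBuffer buffer = ByteBuffer.allocate(44 + dataSize).order(ByteOrder.LITTLE_ENDIAN);
        buffer.put(new byte[] {'R', 'I', 'F', 'F'});
        buffer.putInt(36 + dataSize);             // RIFF chunk size: everything after this field
        buffer.put(new byte[] {'W', 'A', 'V', 'E'});
        buffer.put(new byte[] {'f', 'm', 't', ' '});
        buffer.putInt(16);                        // fmt chunk size for PCM
        buffer.putShort((short) 1);               // audio format 1 = linear PCM
        buffer.putShort((short) channels);
        buffer.putInt(sampleRate);
        buffer.putInt(byteRate);
        buffer.putShort((short) blockAlign);
        buffer.putShort((short) bitsPerSample);
        buffer.put(new byte[] {'d', 'a', 't', 'a'});
        buffer.putInt(dataSize);
        for (short sample : samples) {
            buffer.putShort(sample);              // little-endian, as WAV requires
        }
        out.write(buffer.array());
    }
}
```

For example, calling WavWriter.write(samples, 16000, stream) with a FileOutputStream opened in a try-with-resources block produces a file whose path can later be passed to Leopard's file-processing call.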

Building a Complete Voice Assistant for Android

With all components in place, you can combine them into a complete voice pipeline:

Example command: "Hey assistant, leave a message" → "I'm running a little late, start without me"

- Porcupine Wake Word detects "Hey assistant"

- Cobra VAD confirms speech is present before activating downstream engines

- Rhino Speech-to-Intent interprets "leave a message"

- Cheetah Streaming Speech-to-Text transcribes "I'm running a little late, start without me."

The app executes the recognized action. This Android Voice Assistant runs fully on-device with no cloud processing for voice data. It is ideal for privacy-sensitive and low-latency applications.
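The routing described above can live in a small state machine in application code: every frame from the shared audio source goes to whichever engine the current state needs. The sketch below hides each engine behind a hypothetical one-method interface so the routing logic stands on its own; in an app, those interfaces would wrap the Porcupine, Cobra, Rhino, and Cheetah process calls. The leaveMessage intent name, the 0.5 VAD threshold, and ending dictation at the speech-to-text endpoint are illustrative assumptions.

```java
/**
 * Hypothetical frame router for a wake word -> VAD -> intent -> streaming STT assistant.
 * Each engine sits behind a one-method interface that an app would implement with the SDK.
 */
final class VoicePipeline {
    enum State { WAKE_WORD, AWAIT_SPEECH, INTENT, DICTATION }

    interface WakeWordEngine { boolean detected(short[] frame); }
    interface VadEngine { float voiceProbability(short[] frame); }
    /** Returns null while still listening; once finalized, the intent name ("" if not understood). */
    interface IntentEngine { String finalizedIntent(short[] frame); }
    /** Returns true when the engine reports the end of the dictated message. */
    interface SttEngine { boolean endpointReached(short[] frame); }

    private static final float VAD_THRESHOLD = 0.5f;            // illustrative assumption
    private static final String DICTATE_INTENT = "leaveMessage"; // hypothetical intent name

    private final WakeWordEngine wakeWord;
    private final VadEngine vad;
    private final IntentEngine intent;
    private final SttEngine stt;
    private State state = State.WAKE_WORD;

    VoicePipeline(WakeWordEngine wakeWord, VadEngine vad, IntentEngine intent, SttEngine stt) {
        this.wakeWord = wakeWord;
        this.vad = vad;
        this.intent = intent;
        this.stt = stt;
    }

    State state() {
        return state;
    }

    /** Sends one frame to the engine the current state needs, then advances the state. */
    void onFrame(short[] frame) {
        switch (state) {
            case WAKE_WORD:
                if (wakeWord.detected(frame)) {
                    state = State.AWAIT_SPEECH;
                }
                break;
            case AWAIT_SPEECH:
                if (vad.voiceProbability(frame) >= VAD_THRESHOLD) {
                    state = State.INTENT;
                    routeToIntent(frame); // keep the first voiced frame for the intent engine
                }
                break;
            case INTENT:
                routeToIntent(frame);
                break;
            case DICTATION:
                if (stt.endpointReached(frame)) {
                    state = State.WAKE_WORD; // message done; listen for the wake word again
                }
                break;
        }
    }

    private void routeToIntent(short[] frame) {
        String result = intent.finalizedIntent(frame);
        if (result == null) {
            return; // inference not finalized yet
        }
        // Other intents would be executed here before returning to wake word listening.
        state = DICTATE_INTENT.equals(result) ? State.DICTATION : State.WAKE_WORD;
    }
}
```

A production version would also need timeouts in AWAIT_SPEECH and INTENT so the assistant returns to wake word listening when the user says nothing.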

Common Issues and Solutions

Model File Not Found

Android Studio may strip unrecognized file types during build, causing the voice AI models to fail at initialization. Add the following to build.gradle to prevent this:

Too Many False Activations or Missed Detections

Porcupine Wake Word and Rhino Speech-to-Intent both expose a sensitivity parameter between 0 and 1. A higher value reduces missed detections at the cost of more false activations — a lower value does the opposite. Adjust sensitivity to match your deployment environment and test against real-world conditions.

Poor Transcription Accuracy for Domain-Specific Terms

Cheetah Streaming Speech-to-Text and Leopard Speech-to-Text support custom vocabulary for domain-specific terms not covered by the default model. Add medical, legal, industrial, or branded vocabulary via Picovoice Console without any ML expertise. All Picovoice engines require 16 kHz, 16-bit, mono PCM audio. Use the Android Voice Processor (ai.picovoice:android-voice-processor) to capture audio at the correct sample rate, or downsample manually if using a custom audio pipeline.
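For a custom pipeline that captures at 48 kHz, converting to 16 kHz is an integer-ratio (3:1) decimation. The simplest version averages each group of three samples and keeps one, which acts as a crude low-pass filter before dropping samples. This is an illustrative sketch under that assumption, not production-grade resampling: a proper anti-aliasing filter, or capturing at 16 kHz in the first place, will generally give better recognition accuracy, and non-integer ratios such as 44.1 kHz to 16 kHz need a real resampler.

```java
/** Illustrative integer-factor downsampler for 16-bit mono PCM (box-filter average, then decimate). */
final class Downsampler {
    private Downsampler() {}

    /** Returns sourceRate / targetRate, rejecting rate pairs that are not an exact integer ratio. */
    static int factorFor(int sourceRate, int targetRate) {
        if (targetRate <= 0 || sourceRate % targetRate != 0) {
            throw new IllegalArgumentException("rates must have an integer ratio");
        }
        return sourceRate / targetRate;
    }

    /**
     * @param input  mono 16-bit samples at the source rate
     * @param factor integer ratio between source and target rates, e.g. 48000 / 16000 = 3
     * @return samples at sourceRate / factor; trailing samples that don't fill a group are dropped
     */
    static short[] decimate(short[] input, int factor) {
        if (factor < 1) throw new IllegalArgumentException("factor must be >= 1");
        short[] output = new short[input.length / factor];
        for (int i = 0; i < output.length; i++) {
            int sum = 0;
            for (int k = 0; k < factor; k++) {
                sum += input[i * factor + k];
            }
            output[i] = (short) (sum / factor); // the mean of shorts always fits in a short
        }
        return output;
    }
}
```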

AccessKey and Resource Errors

- Confirm the AccessKey is valid from the Picovoice Console

- Pass the Application Context rather than an Activity context to avoid resource release during Activity lifecycle events

- Call .delete() on all engine instances when done. Failing to release resources can cause initialization errors on subsequent calls

Preparing an Android Voice App for Production

Before shipping a voice-enabled Android app, validate performance and reliability in conditions that reflect real deployment. Review this checklist before releasing:

Engine Lifecycle

- Call .delete() on all engine instances when done. Picovoice engines allocate native resources that are not released by the garbage collector

- Stop engines when continuous listening is not required to free resources and reduce battery consumption

- Initialize engines once and reuse them across sessions rather than creating and destroying instances repeatedly
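Because each engine holds native memory that only .delete() releases, it helps to tie that call to a scope the compiler enforces. One option, sketched with a hypothetical adapter below, wraps any engine in an AutoCloseable so that try-with-resources (for short-lived work such as a single batch transcription) or an explicit close() in a lifecycle callback (for long-lived engines) releases it exactly once.

```java
/**
 * Hypothetical adapter (not part of any Picovoice SDK) that gives an engine with a
 * delete()-style release call AutoCloseable semantics, so the release happens exactly once.
 */
final class EngineHandle<T> implements AutoCloseable {
    /** Wraps the engine's release call, e.g. engine -> engine.delete(). */
    interface Releaser<E> {
        void release(E engine);
    }

    private final T engine;
    private final Releaser<T> releaser;
    private volatile boolean closed = false;

    EngineHandle(T engine, Releaser<T> releaser) {
        this.engine = engine;
        this.releaser = releaser;
    }

    /** Returns the engine, failing fast instead of using one whose resources were released. */
    T get() {
        if (closed) {
            throw new IllegalStateException("engine already released");
        }
        return engine;
    }

    /** Idempotent: a second close() is a no-op. */
    @Override
    public synchronized void close() {
        if (!closed) {
            closed = true;
            releaser.release(engine);
        }
    }
}
```

For example, new EngineHandle<>(leopard, Leopard::delete) would release a Leopard instance when its try-with-resources block exits, even if transcription throws.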

Permissions

- Request RECORD_AUDIO permission at the point of use, not just on app launch

- Handle the case where the user denies permission and provide a clear rationale before re-requesting

- On Android 6.0+ (API 23+), never assume permission is granted without checking at runtime

Accuracy and Environment Testing

- Test in noise conditions representative of your deployment environment — Picovoice recommends testing in your target environment using available sample models, as performance depends on speaker distance, noise level and type, room acoustics, and microphone quality

- Use Picovoice's open-source benchmarks to make data-driven decisions about engine accuracy before shipping

- Tune sensitivity on Porcupine Wake Word and Rhino Speech-to-Intent to match your deployment environment. A higher value reduces missed detections at the cost of more false activations

Background and Battery

- Run always-on engines such as Porcupine Wake Word inside a foreground service with a persistent notification

- Test background behavior on your specific target devices and Android versions. Android OS background processing restrictions vary across manufacturers and API levels

Audio Input

- Use the Android Voice Processor (ai.picovoice:android-voice-processor) to capture audio at the correct 16kHz sample rate required by all Picovoice engines

- Test with the microphone hardware representative of your deployment. Built-in phone microphones, headsets, and external microphones can produce significantly different results

Android Speech Recognition Use Cases

On-device voice AI on Android enables privacy-first voice applications by removing the constraints of cloud dependency and delivers consistent latency regardless of connectivity. Common Android voice AI applications include:

- On-device voice assistants and AI agents: combine Porcupine Wake Word, Cheetah Streaming Speech-to-Text, and picoLLM (Picovoice's on-device LLM inference engine) to run a fully offline, LLM-powered voice assistant entirely on an Android device

- Voice-controlled smart home and IoT apps: use Rhino Speech-to-Intent to map spoken commands directly to structured actions without a cloud NLU step, ideal for always-on home automation and IoT controllers

- Hands-free field service and warehouse tools: use Porcupine Wake Word and Rhino Speech-to-Intent on industrial Android handhelds for voice picking, inspection logging, and maintenance workflows with custom vocabulary trained for domain-specific terms

- Medical and compliance-sensitive apps: on-device processing keeps audio local and supports HIPAA and GDPR compliance by design; pair Cheetah Streaming Speech-to-Text with a custom medical vocabulary for clinical dictation

- Accessibility apps: combine Cobra Voice Activity Detection and Cheetah Streaming Speech-to-Text to build always-listening, low-latency voice input for users who rely on hands-free interaction

- Voice search and kiosk navigation: use Rhino Speech-to-Intent to convert spoken queries into structured outputs that integrate directly with search engines, databases, or kiosk navigation flows

Resources

Documentation

- Cobra VAD Android Quick Start

- Cobra VAD Android API Documentation

- Porcupine Wake Word Android Quick Start

- Porcupine Wake Word Android API Documentation

- Rhino Speech-to-Intent Android Quick Start

- Rhino Speech-to-Intent Android API Documentation

- Cheetah Streaming Speech-to-Text Android Quick Start

- Cheetah Streaming Speech-to-Text Android API Documentation

- Leopard Speech-to-Text Android Quick Start

- Leopard Speech-to-Text Android API Documentation

Demos

- Official Android Wake Word Demo

- Official Android Speech-to-Intent Demo

- Official Android Streaming Speech-to-Text Demo

- Official Android Speech-to-Text Demo

- Official Android Voice Activity Detection Demo

Conclusion

Building voice features into an Android app means navigating fragmented APIs, device-dependent behavior, and background processing restrictions that vary across manufacturers and API levels. The right foundation makes the difference between a reliable voice experience and one that breaks in the field.

Key takeaways:

- Wake word detection, voice activity detection, speech-to-intent, streaming speech-to-text, and batch speech-to-text each solve a distinct problem — pick the right one for the job

- Always-on listening on Android requires a foreground service, and engine footprint matters for battery life and background reliability

- On-device processing delivers a privacy-first voice AI architecture with consistent low latency and offline reliability regardless of network conditions

- Custom wake word, voice command, and speech-to-text models can be trained via Picovoice Console without any ML expertise

Request a Free Trial to start building.
