iOS speech recognition is the set of frameworks, SDKs, and APIs that let apps interpret spoken audio on iOS devices, whether that means transcribing it to text, mapping it to structured commands, or spotting a specific wake phrase. Voice-enabled iOS apps span a wide range of use cases like clinical documentation, hands-free field inspection apps, voice-controlled appliance apps, accessibility features, and on-device voice assistants, and each demands a different capability from the speech recognition stack.

The landscape of available options spans Apple's native SFSpeechRecognizer, the newer SpeechAnalyzer in iOS 26, cloud services, and on-device SDKs. Apple's native APIs cover part of the stack, but leave meaningful gaps for production voice apps. Cloud services work across the installed base but introduce network latency on top of inference time and route every voice sample through external servers. For apps subject to HIPAA, GDPR, or CCPA, sending audio to external servers creates compliance risk. On-device processing sidesteps both: audio stays local, latency stays predictable, and the app keeps working when the network does not.

This guide covers the complete iOS speech recognition SDK landscape in 2026, including Apple's native APIs, cloud options, and on-device alternatives, with working Swift code for wake word detection, voice activity detection, intent recognition, real-time transcription, and batch transcription.

Building for Android instead? See the complete guide for Android speech recognition. For cross-platform development, see the React Native speech recognition guide.

What Does Apple's iOS Speech Recognition Support in 2026?

Apple has shipped two speech recognition APIs. SFSpeechRecognizer, introduced in iOS 10, wraps Apple's on-device and cloud ASR models and has been the standard for speech-to-text in iOS apps for nearly a decade. SpeechAnalyzer, introduced in iOS 26, replaces SFSpeechRecognizer with a modular, concurrency-native Swift API that runs fully on-device.

Neither API supports custom wake word detection, standalone voice activity detection, or intent recognition. SFSpeechRecognizer imposes a hard one-minute limit per recognition session and a rate limit of 1,000 requests per device per hour. SpeechAnalyzer removes the session time limit but is iOS 26 only. It is not backward compatible with the installed base. Both require NSSpeechRecognitionUsageDescription in Info.plist in addition to microphone permission, adding a second user permission prompt.

For apps that need wake word detection, voice commands, VAD, or transcription beyond one minute, the native APIs are a starting point for understanding the landscape, not a complete solution.

Why On-Device iOS Speech Recognition Matters for Production Apps

On-device speech recognition processes audio locally on iOS. Cloud speech recognition streams audio to a remote server for inference. The difference shows up in a few concrete ways:

- Latency: Cloud APIs add 50–500 ms of network round-trip on top of inference time under good conditions, and about 2000 ms on poor connections. Cold starts can delay the first transcription result by 1–3 seconds after idle periods.

- Data exposure: With on-device processing, audio never leaves the device, so there are no servers to breach, no retention policies to audit, and no voice data feeding external ML training.

- Compliance: Keeping PHI and personally identifiable voice data local removes Business Associate Agreements, cross-border transfer provisions, and data processing addenda from scope. See HIPAA-compliant voice tech and GDPR and CCPA for voice for details.

- Offline reliability: Field inspection apps, in-vehicle assistants, and clinical tools work the same in airplane mode, cellular dead zones, and behind restrictive corporate firewalls.

5 Major Types of iOS Speech Recognition

There are five major types of iOS speech recognition options: wake word detection, voice activity detection (VAD), intent recognition, real-time speech-to-text, and batch transcription. Each handles a different stage of the voice pipeline, from detecting a trigger phrase to returning a full transcript.

Wake Word Detection for iOS

Wake word detection on iOS listens continuously for a specific keyword and returns a detection event that activates the iOS app. Apple reserves the system wake word layer for Siri, and iOS provides no API for registering a custom wake word at the OS level. Third-party apps must implement always-on wake word detection themselves using a background AVAudioSession.

Wake Word Detection Options for iOS: Porcupine Wake Word, custom Core ML models

See the complete guide to wake word detection and how to benchmark wake word engines before choosing one.

Voice Activity Detection for iOS

Voice Activity Detection (VAD) on iOS determines whether a frame of audio contains human speech, without transcribing what was said. iOS 26's SpeechAnalyzer includes a SpeechDetector module that detects speech presence, but is not a standalone VAD engine. For pipeline-level gating, activating downstream engines only when speech is present, a dedicated VAD engine remains the practical choice across the installed base.

VAD Options for iOS: Cobra Voice Activity Detection, WebRTC VAD (requires C bridge integration)

Intent Recognition for iOS

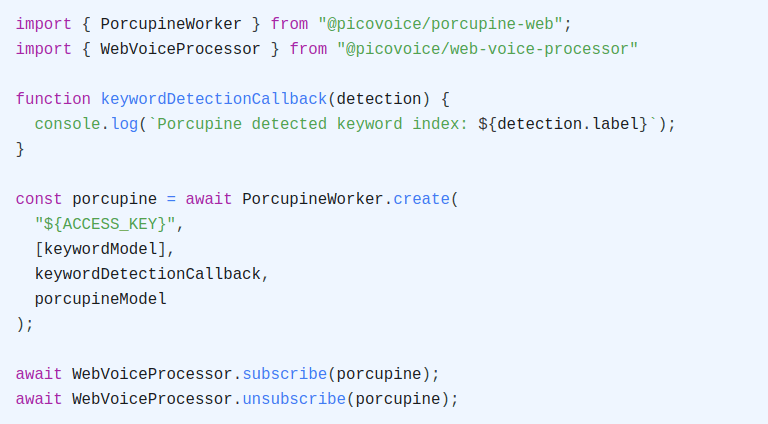

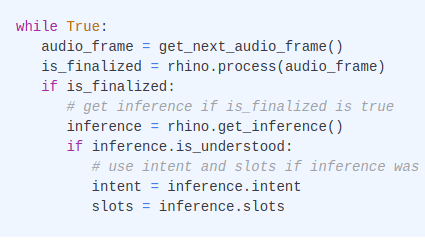

Intent recognition on iOS maps a spoken utterance directly to a structured intent and slot values. Neither SFSpeechRecognizer nor SpeechAnalyzer supports intent recognition; both return raw text. Extracting intent from that text requires a separate natural language understanding call, adding a second pipeline stage and compounding error rates. Speech-to-intent engines like Rhino Speech-to-Intent infer intent directly from audio in a single pass, bypassing the two-stage pipeline of automatic speech recognition followed by natural language understanding.
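To make the contrast with raw text concrete, here is a minimal sketch of what a structured result lets an app do. The `VoiceCommand` type, the `setTemperature` intent, and the slot names are hypothetical and chosen only for illustration; they are not part of any SDK.

```swift
// Hypothetical result type: an intent name plus slot values, as a
// speech-to-intent engine might report for "set the oven to 350 degrees".
struct VoiceCommand {
    let intent: String
    let slots: [String: String]
}

// With raw text the app would still have to parse meaning out of a string.
// With a structured intent it can branch on the result directly.
func describe(_ command: VoiceCommand) -> String {
    switch command.intent {
    case "setTemperature":
        let appliance = command.slots["appliance"] ?? "unknown appliance"
        let degrees = command.slots["degrees"] ?? "?"
        return "Setting \(appliance) to \(degrees) degrees"
    default:
        return "Unsupported intent: \(command.intent)"
    }
}

let command = VoiceCommand(
    intent: "setTemperature",
    slots: ["appliance": "oven", "degrees": "350"]
)
print(describe(command))
```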

Speech-to-Intent Options for iOS: Rhino Speech-to-Intent, Amazon Lex (via REST API), Google Dialogflow (via REST API)

Compare voice command acceptance rates for Google Dialogflow, Amazon Lex, and Rhino Speech-to-Intent, and see the differences between conventional and end-to-end spoken language understanding.

Streaming Speech-to-Text for iOS

Streaming speech-to-text returns partial transcripts as the user speaks rather than waiting for a complete recording, powering live captions, voice input fields, and real-time dictation on iOS. SFSpeechRecognizer supports partial results but caps sessions at one minute, and SpeechAnalyzer on iOS 26 removes this limit but is not available on earlier releases.

Streaming STT Options for iOS: Cheetah Streaming Speech-to-Text, WhisperKit, Amazon Transcribe Streaming (via HTTP/2), Azure Real-Time STT, Google Speech-to-Text Streaming (via REST API)

Evaluate streaming STT engines on Word Accuracy, Punctuation Accuracy, and Word Emission Latency before choosing one.

Batch Transcription for iOS

Batch transcription on iOS processes a complete audio file after recording ends, returning a full transcript with word-level timestamps. SFSpeechRecognizer supports file transcription via SFSpeechURLRecognitionRequest, but the one-minute limit applies to files too, which rules it out for typical voice memos and meeting recordings.
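To see what the one-minute limit means in practice, the sketch below plans the fixed-size chunks a longer recording would have to be split into before each piece could be submitted as its own request. `AudioChunk` and `planChunks` are hypothetical helper names, and the 60-second default simply mirrors the limit described above.

```swift
import Foundation

// One slice of a longer recording, in seconds from the start of the file.
struct AudioChunk: Equatable {
    let start: TimeInterval
    let end: TimeInterval
}

/// Splits a recording into consecutive chunks no longer than `maxChunk`.
func planChunks(totalDuration: TimeInterval, maxChunk: TimeInterval = 60) -> [AudioChunk] {
    guard totalDuration > 0, maxChunk > 0 else { return [] }
    var chunks: [AudioChunk] = []
    var start: TimeInterval = 0
    while start < totalDuration {
        let end = min(start + maxChunk, totalDuration)
        chunks.append(AudioChunk(start: start, end: end))
        start = end
    }
    return chunks
}

// A 45-minute meeting recording needs 45 separate recognition requests.
let meeting = planChunks(totalDuration: 45 * 60)
print(meeting.count)
```

Cutting at fixed boundaries can split a word across two chunks, which is one reason manual chunking tends to cost accuracy as well as engineering effort.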

Batch STT Options for iOS: Leopard Speech-to-Text, WhisperKit, Amazon Transcribe, Google Speech-to-Text (via REST API), Azure Speech

Compare batch STT accuracy across major engines on standardized datasets.

Strengths and Limitations of iOS for Voice Applications

Advantages of Building iOS Voice Applications

- Consistent hardware baseline: iOS runs on a controlled set of Apple hardware. Microphone quality, audio pipeline behavior, and Neural Engine availability are predictable across the device population. Developers can test on a representative set of devices and expect consistent behavior in production.

- Neural Engine access: Every iPhone since the iPhone 8 includes Apple's Neural Engine, purpose-built for on-device ML inference. On-device voice AI models that leverage Core ML and the Neural Engine run with significantly lower power consumption and latency than CPU-only alternatives.

- AVAudioSession control: iOS gives developers fine-grained control over the audio session category, mode, and routing through AVAudioSession. This makes it possible to configure audio capture precisely for voice recognition, enabling noise reduction, gain control, and correct routing to the expected microphone source.

- Swift Package Manager and CocoaPods: Mature dependency management makes integrating third-party voice AI SDKs straightforward. All Picovoice iOS SDKs are distributed via both SPM and CocoaPods.

Challenges of Building iOS Voice Applications

- Background audio restrictions: iOS does not allow arbitrary background microphone access. Apps that need always-on listening must declare the audio background mode in Info.plist and keep an active AVAudioSession running. This approach requires careful management of audio session interruptions because phone calls, Siri activation, and other audio events can preempt the session.

- No system-level custom wake words: Apple reserves the system wake word layer for Siri. Third-party apps cannot register custom wake words at the OS level. Implementing a custom wake word requires keeping an AVAudioSession active in the background with the audio background mode enabled, which triggers the red microphone indicator in the status bar, a signal visible to users that recording is active.

- Two permission requirements: Unlike Android, which requires only RECORD_AUDIO, iOS voice apps that use Apple's Speech framework require two separate user permission prompts: NSMicrophoneUsageDescription for microphone access and NSSpeechRecognitionUsageDescription for speech recognition.

iOS Speech Recognition Libraries and APIs in 2026

iOS developers have multiple speech recognition options across native Apple APIs, cloud SDKs, and on-device third-party platforms.

Native iOS Speech Recognition APIs

SFSpeechRecognizer is Apple's speech recognition API, available since iOS 10. It wraps Apple's on-device and cloud speech recognition models and supports both microphone input (SFSpeechAudioBufferRecognitionRequest) and audio file input (SFSpeechURLRecognitionRequest). Setting requiresOnDeviceRecognition = true forces local inference and avoids sending audio to Apple's servers, though the on-device model requires initial download and may not be available immediately after installation. Per Apple's documentation, the API imposes a limit of one minute of audio per recognition request and a rate limit of 1,000 requests per device per hour, and does not support custom vocabulary training, custom wake words, intent recognition, or standalone voice activity detection. The contextualStrings property allows limited phrase biasing (up to ~100 short phrases) to improve recognition of domain-specific terms, but this is not a substitute for a full custom vocabulary workflow. Starting in iOS 16, SFSpeechRecognitionRequest supports addsPunctuation = true for automatic punctuation insertion.

SpeechAnalyzer is Apple's modular speech analysis API, introduced in iOS 26. It provides a concurrency-friendly Swift API built around AsyncSequence and is positioned as the successor to SFSpeechRecognizer for long-form, on-device transcription. The API is organized around modules that attach to an analysis session. As of iOS 26, the public modules include SpeechTranscriber for speech-to-text transcription and SpeechDetector for detecting speech presence in an audio stream. Both run fully on-device, with language assets downloaded and managed through the system asset catalog. SpeechAnalyzer is available on iOS 26 and later, and is not backward compatible with earlier iOS versions.

Cloud-Based iOS Speech Recognition Options

Amazon Transcribe is accessible on iOS via the AWS SDK for Swift or direct HTTP/2 streaming. There is no purpose-built native iOS SDK, so integration requires implementing streaming or batch workflows against AWS APIs directly. Batch transcription involves uploading audio to Amazon S3 and polling for results.

Google Cloud Speech-to-Text is accessible on iOS via the REST API using URLSession for synchronous and asynchronous (batch) recognition. Streaming recognition is only available over gRPC, for which Google provides iOS Swift samples using the googleapis CocoaPod rather than a first-class native Swift client library. Audio capture and authentication must be implemented separately in either case.

Microsoft Azure Speech SDK provides an official iOS framework (MicrosoftCognitiveServicesSpeech), distributed as an xcframework bundle via CocoaPods or direct download. It supports real-time speech-to-text, batch transcription, and custom keyword recognition trained via Azure Speech Studio. Keyword detection runs on-device, while speech-to-text processing occurs in the cloud.

Amazon Lex V2 is accessible on iOS via the AWS SDK for Swift (LexRuntimeV2Client) or direct REST API calls. Note that the older AWS Mobile SDK for iOS (AWSLex CocoaPod) only supports Lex V1, which AWS discontinued in September 2025. Lex V2 supports intent recognition and slot extraction from text or audio input, and does not include audio capture utilities built for iOS.

Google Dialogflow is accessible on iOS via the REST API. The official Google Cloud client library does not support iOS natively, and audio capture and streaming must be implemented separately.

On-Device iOS Speech Recognition Options

WhisperKit is Argmax's open-source Swift package that runs OpenAI's Whisper speech-to-text models on Apple Silicon via Core ML. It is distributed via Swift Package Manager and supports streaming transcription, word-level timestamps, voice activity detection, and multiple Whisper model sizes. WhisperKit does not include wake word detection or intent recognition.

whisper.cpp is an open-source C/C++ port of OpenAI's Whisper model with an official iOS XCFramework. It supports both streaming and batch transcription with Core ML acceleration on Apple Silicon. Integration requires manual Swift bridging to the C API.

WebRTC VAD is Google's open-source voice activity detection library. It is implemented in C and has no official iOS SDK, so integration typically requires manual Swift bridging or third-party wrappers. It uses a Gaussian Mixture Model (GMM) rather than deep learning.

Vosk is an open-source speech recognition toolkit with limited official iOS support (the vosk-api repo includes a baseline iOS directory, but the prebuilt library is available on request rather than as a packaged SDK). It supports 20+ languages and offline streaming transcription. Wake word detection and intent recognition are not supported. Integration on iOS requires more manual setup than commercial alternatives.

Picovoice is the only widely available on-device voice AI platform with official iOS support across wake word detection, voice activity detection, intent recognition, streaming speech-to-text, and batch transcription. All engines ship as official Swift packages and CocoaPods, process audio entirely on-device for private, low-latency speech recognition, and are compatible with iOS 16.0+. Wake word, voice command, and speech-to-text models can be customized via Picovoice Console without any ML expertise.

- Porcupine Wake Word: Custom wake word detection

- Cobra Voice Activity Detection: Voice activity detection

- Rhino Speech-to-Intent: Voice command recognition

- Cheetah Streaming Speech-to-Text: Real-time transcription

- Leopard Speech-to-Text: Batch transcription

The rest of this guide covers how to integrate each Picovoice engine into an iOS app using Swift, starting with project setup.

iOS Project Setup for Speech Recognition

Before integrating any Picovoice engine, ensure the project meets the following requirements:

- iOS 16.0+

- Xcode 15+

- A Picovoice AccessKey (sign up for a Free Trial via Picovoice Console to get one)

Adding Dependencies

All Picovoice iOS SDKs are available via Swift Package Manager (SPM) and CocoaPods. To add via SPM, open your project's Package Dependencies in Xcode and add the relevant package URL. To add via CocoaPods, add the pod to your Podfile and run pod install.

For real-time engines that capture microphone audio, add the iOS Voice Processor package, which handles AVAudioSession configuration and delivers PCM frames at the correct sample rate:

SPM:

CocoaPods:

Configuring Permissions

Add the following entries to your app's Info.plist. The microphone permission is required by all real-time Picovoice engines. The speech recognition permission is only required if you are also using Apple's SFSpeechRecognizer or SpeechAnalyzer APIs.

At runtime, request microphone access before starting any engine:
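A minimal sketch of the runtime request, assuming the app targets the AVAudioSession API (on iOS 17 and later Apple marks this call as deprecated in favor of the equivalent on AVAudioApplication). The function name `requestMicrophoneAccess` is illustrative only.

```swift
// The guard lets this file compile on platforms without AVAudioSession.
#if os(iOS)
import AVFoundation

/// Asks for microphone access and reports the result on the main queue.
/// The system prompt shows the NSMicrophoneUsageDescription text.
func requestMicrophoneAccess(_ completion: @escaping (Bool) -> Void) {
    AVAudioSession.sharedInstance().requestRecordPermission { granted in
        // The callback may arrive on an arbitrary queue.
        DispatchQueue.main.async { completion(granted) }
    }
}

// Usage:
// requestMicrophoneAccess { granted in
//     if granted { /* start the engine */ }
//     else { /* explain that voice features are unavailable */ }
// }
#endif
```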

iOS Architectural Decisions for Voice AI

Before writing engine code, let's look at three architectural decisions that shape how voice AI behaves on iOS. These are specific to iOS's audio model and apply regardless of which voice AI engine the app uses.

1. Foreground vs Background Audio

Voice AI on iOS runs in one of two lifecycle contexts, and the choice determines whether the engine can listen while the app is in the background.

Foreground-only voice AI runs while the app's UI is active. AVAudioSession starts when the user opens a voice feature and stops when they navigate away. This is the correct choice for dictation fields, voice memo recorders, in-app voice search, and any feature that only needs to listen while the user is actively engaged. Implementation is simpler, battery impact is bounded to active use, and no special entitlements are required.

Background voice AI requires declaring audio as a background mode in Info.plist under UIBackgroundModes. This keeps AVAudioSession active when the app moves to the background and allows continuous microphone capture. iOS displays a red microphone indicator in the status bar whenever background audio capture is active. This is a system-level privacy indicator that cannot be hidden or suppressed. It is the required approach for always-on wake word detection and other continuously listening features. Apps using background audio may receive additional scrutiny during App Store review and must provide a clear usage justification.

Many apps combine both: a background AVAudioSession runs the wake word engine continuously, and the app's UI takes over for streaming transcription once the wake word fires.

2. AVAudioSession Configuration

AVAudioSession is a singleton that mediates all audio activity on the device. Configuring it correctly for voice recognition avoids conflicts with other audio consumers (music playback, phone calls, Siri, and so on) and ensures the microphone input reaches the engine in the correct format.

For voice recognition, the recommended session configuration is:

Using .playAndRecord with .default mode keeps the input audio unprocessed, which is what on-device voice recognition engines expect. For apps with simultaneous audio playback that need echo cancellation, call setPrefersEchoCancelledInput(true) on iOS 18+. Handle AVAudioSession interruption notifications. Phone calls, Siri, and other audio apps can preempt the session and require the engine to pause and resume.
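The configuration described above can be sketched as follows. The function name is illustrative, and the `.defaultToSpeaker` option is an assumption for apps that also play audio through the speaker; omit it if it does not apply.

```swift
// The guard lets this file compile on platforms without AVAudioSession.
#if os(iOS)
import AVFoundation

/// Configures and activates the shared audio session for voice capture.
func configureAudioSessionForVoice() throws {
    let session = AVAudioSession.sharedInstance()
    // .playAndRecord with .default mode, as described above.
    // The options value is an assumption; pass [] for the bare setup.
    try session.setCategory(
        .playAndRecord,
        mode: .default,
        options: [.defaultToSpeaker]
    )
    // Activation can fail while another app holds the session,
    // for example during a phone call, so callers should handle the error.
    try session.setActive(true)
}
#endif
```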

3. Permission Request Timing

iOS requires runtime permission for microphone access, and iOS 17+ introduced stricter enforcement of usage description requirements. The timing of the permission request significantly affects grant rates.

Requesting at the point of use, when the user first taps a voice feature, is the highest-converting pattern for most apps. The user has clear context for why the app wants microphone access, which improves the likelihood of granting permission.

Request at app launch guarantees the permission is available before any feature needs it, but increases denial rates because the user has no context for the request.

Regardless of timing, the app must handle the denied state gracefully. Never assume microphone permission is granted across sessions. Check AVAudioSession.sharedInstance().recordPermission before starting any engine, and present a clear rationale before directing the user to Settings if permission has been denied.
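One way to keep this check explicit is to map each permission state to a single next step. In the sketch below, `MicPermission`, `MicAction`, `nextAction`, `currentMicPermission`, and `openAppSettings` are hypothetical names; only the AVAudioSession and UIApplication calls inside the iOS-only section are system APIs.

```swift
import Foundation

// Platform-independent mirror of the three microphone permission states.
enum MicPermission { case undetermined, granted, denied }

enum MicAction: Equatable {
    case startEngine
    case requestPermission
    case showRationaleThenSettings
}

/// Decides what to do before starting any engine.
func nextAction(for permission: MicPermission) -> MicAction {
    switch permission {
    case .granted: return .startEngine
    case .undetermined: return .requestPermission
    case .denied: return .showRationaleThenSettings
    }
}

#if os(iOS)
import AVFoundation
import UIKit

/// Reads the current state on every launch instead of caching it.
func currentMicPermission() -> MicPermission {
    switch AVAudioSession.sharedInstance().recordPermission {
    case .granted: return .granted
    case .denied: return .denied
    case .undetermined: return .undetermined
    @unknown default: return .denied // treat unknown states conservatively
    }
}

/// Opens this app's page in Settings; call after showing the rationale.
@MainActor func openAppSettings() {
    if let url = URL(string: UIApplication.openSettingsURLString) {
        UIApplication.shared.open(url)
    }
}
#endif
```

Keeping the decision in a pure function makes the denied path easy to unit-test without a device.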

Implement On-device Speech Recognition on iOS

Adding Voice Activity Detection to iOS Apps

Best for: Gating audio pipelines, filtering silence before passing audio to downstream engines, reducing battery usage from continuous audio capture

Why developers choose it: On iOS, keeping AVAudioSession active continuously while routing audio to multiple engines wastes compute and battery during silence. Cobra VAD gates downstream processing so wake word, STT, or intent engines only receive audio frames when speech is present. Because Cobra is part of the same Picovoice voice AI stack that includes Porcupine Wake Word, Rhino Speech-to-Intent, Cheetah Streaming Speech-to-Text, and Leopard Speech-to-Text, a single VoiceProcessor instance can feed Cobra Voice Activity Detection and any downstream engine without re-sampling or opening multiple audio sessions. Cobra VAD processes audio at a real-time factor of 0.000399 in C and 0.00171 in Python.

- Add the Cobra Voice Activity Detection dependency via SPM:

Or via CocoaPods:

- Import Cobra Voice Activity Detection and create an instance of the VAD engine:

- Pass in audio frames to the .process function:

Full Code for iOS Voice Activity Detection
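A minimal sketch of the steps above. It assumes the Cobra module exposes `Cobra(accessKey:)`, `process(pcm:)`, `delete()`, and the static `frameLength` and `sampleRate`, and that `ios_voice_processor` exposes `VoiceProcessor.instance` with frame listeners; verify these against the quick start guide. `SpeechGate` and `VoiceActivityMonitor` are hypothetical helpers, and the 0.5 threshold and 10-frame hangover are placeholder values to tune.

```swift
// Pure gating logic: open when the voice probability crosses a threshold
// and stay open for `hangoverFrames` non-speech frames afterwards.
struct SpeechGate {
    let threshold: Float
    let hangoverFrames: Int
    private var silentFrames: Int // consecutive non-speech frames seen

    init(threshold: Float = 0.5, hangoverFrames: Int = 10) {
        self.threshold = threshold
        self.hangoverFrames = hangoverFrames
        self.silentFrames = hangoverFrames + 1 // gate starts closed
    }

    /// Returns true when the current frame should be forwarded downstream.
    mutating func update(voiceProbability: Float) -> Bool {
        if voiceProbability >= threshold {
            silentFrames = 0
        } else if silentFrames <= hangoverFrames {
            silentFrames += 1
        }
        return silentFrames <= hangoverFrames
    }
}

#if canImport(Cobra) && canImport(ios_voice_processor)
import Cobra
import ios_voice_processor

final class VoiceActivityMonitor {
    private let cobra: Cobra
    private var gate = SpeechGate()
    private var listener: VoiceProcessorFrameListener?

    init(accessKey: String) throws {
        cobra = try Cobra(accessKey: accessKey)
    }

    /// Starts capture and forwards only frames that pass the gate.
    func start(onSpeechFrame: @escaping ([Int16]) -> Void) throws {
        let listener = VoiceProcessorFrameListener { [weak self] frame in
            guard let self = self,
                  let probability = try? self.cobra.process(pcm: frame) else { return }
            if self.gate.update(voiceProbability: probability) {
                onSpeechFrame(frame)
            }
        }
        self.listener = listener
        VoiceProcessor.instance.addFrameListener(listener)
        try VoiceProcessor.instance.start(
            frameLength: Cobra.frameLength,
            sampleRate: Cobra.sampleRate
        )
    }

    /// Stops capture and releases the engine's native resources.
    func stop() throws {
        if let listener = listener {
            VoiceProcessor.instance.removeFrameListener(listener)
        }
        try VoiceProcessor.instance.stop()
        cobra.delete()
    }
}
#endif
```

The hangover keeps the gate open across short pauses so that downstream engines do not lose the tail of a word.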

For further details, visit the Cobra Voice Activity Detection product page or refer to Cobra's iOS SDK quick start guide.

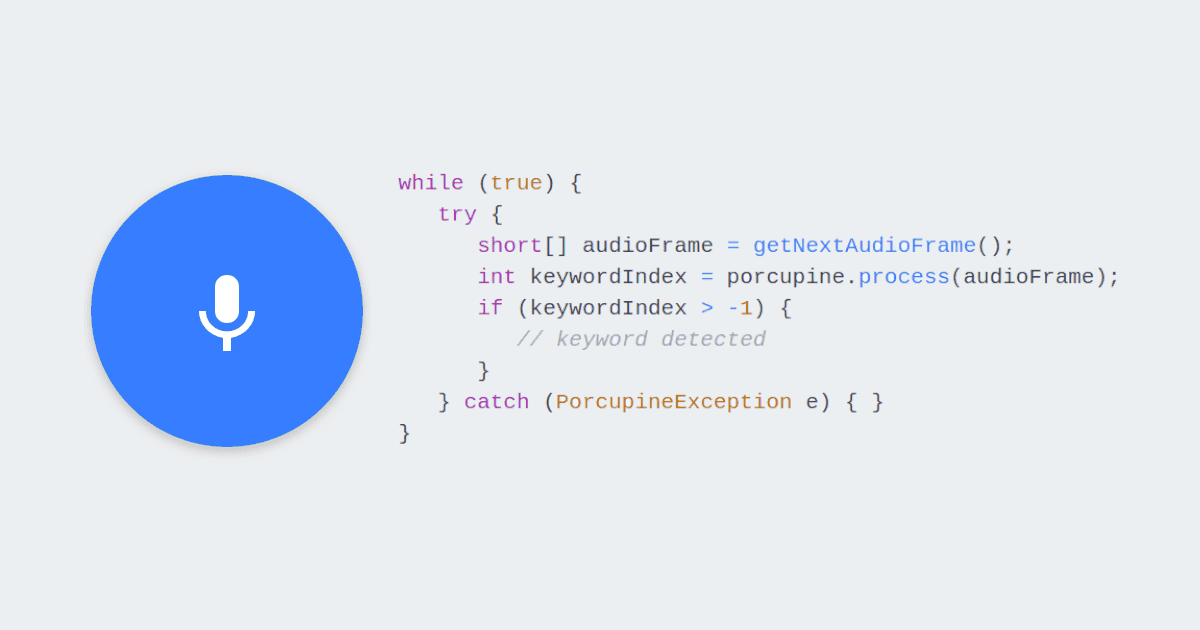

Adding Wake Word Detection to iOS Apps

Best for: Always-on listening, hands-free app activation, keyword spotting, replacing push-to-talk in field and enterprise iOS apps

Why developers choose it: iOS does not allow third-party apps to register custom wake words at the OS level because that layer is reserved for Siri. Implementing always-on wake word detection requires keeping a background AVAudioSession active with the audio background mode declared. Porcupine Wake Word is designed for this constraint, with a runtime footprint small enough to listen continuously on iPhone hardware without significant battery impact. Porcupine achieves 97.1% accuracy at 1 false alarm per 10 hours with noise and background speech.

- Add the Porcupine Wake Word dependency via SPM:

Or via CocoaPods:

- Train a custom wake word model using Picovoice Console, download the .ppn file, and add it to the app as a bundled resource (Build Phases > Copy Bundle Resources). Then get its path from the bundle:

- Create an instance of PorcupineManager that detects the custom keyword:

- Start and stop listening:

Full Code for iOS Wake Word Detection
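A minimal sketch of the steps above, assuming the Porcupine module exposes `PorcupineManager(accessKey:keywordPath:onDetection:)` with `start()`, `stop()`, and `delete()`; confirm the exact signatures in the quick start guide. `WakeWordListener` and its parameter names are hypothetical.

```swift
#if canImport(Porcupine)
import Foundation
import Porcupine

final class WakeWordListener {
    private let manager: PorcupineManager

    /// `keywordResource` is the bundled .ppn file name without its extension.
    init(accessKey: String,
         keywordResource: String,
         onWake: @escaping () -> Void) throws {
        guard let keywordPath = Bundle.main.path(
            forResource: keywordResource, ofType: "ppn"
        ) else {
            // The model is missing from Copy Bundle Resources.
            throw CocoaError(.fileNoSuchFile)
        }
        manager = try PorcupineManager(
            accessKey: accessKey,
            keywordPath: keywordPath,
            // The callback receives the index of the detected keyword;
            // with a single keyword it can be ignored.
            onDetection: { _ in onWake() }
        )
    }

    func start() throws { try manager.start() }
    func stop() throws { try manager.stop() }

    // Release native resources that ARC does not manage.
    deinit { manager.delete() }
}
#endif
```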

PorcupineManager handles AVAudioSession configuration and audio capture internally. For custom audio pipelines, use the low-level Porcupine class directly.

For further details, visit the Porcupine Wake Word product page or refer to Porcupine's iOS SDK quick start guide.

Adding Intent Recognition to iOS Apps

Best for: iOS apps with a defined command vocabulary — smart home controllers, in-car interfaces, accessibility apps, voice navigation

Why developers choose it: Rhino Speech-to-Intent infers intent and slot values directly from audio in a single pass, eliminating the two-stage STT + NLU pipeline. Rhino achieves 97% accuracy in noisy environments, outperforming Amazon Lex and Google Dialogflow on command acceptance rate.

- Add the Rhino Speech-to-Intent dependency via SPM:

Or via CocoaPods:

- Train a custom context model using Picovoice Console, download the .rhn file, and add it to the app as a bundled resource. Get its path from the bundle:

- Create an instance of RhinoManager:

- Start listening for a command:

RhinoManager automatically stops audio capture after an inference is returned. Call .process() again to listen for the next command.

Full Code for iOS Intent Recognition
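A minimal sketch of the steps above, assuming the Rhino module exposes `RhinoManager(accessKey:contextPath:onInferenceCallback:)`, `process()`, `delete()`, and an inference value with `isUnderstood`, `intent`, and `slots`; confirm these in the quick start guide. The `send_email` intent, the `recipient` slot, `route`, and `CommandListener` are hypothetical and must match your own context.

```swift
import Foundation

// Pure routing logic, kept separate from the SDK so it can be unit-tested.
func route(isUnderstood: Bool, intent: String, slots: [String: String]) -> String {
    guard isUnderstood else { return "Command not understood" }
    switch intent {
    case "send_email":
        return "Compose email to \(slots["recipient"] ?? "unknown recipient")"
    default:
        return "No handler for intent \(intent)"
    }
}

#if canImport(Rhino)
import Rhino

final class CommandListener {
    private let manager: RhinoManager

    /// `contextResource` is the bundled .rhn file name without its extension.
    init(accessKey: String,
         contextResource: String,
         onResult: @escaping (String) -> Void) throws {
        guard let contextPath = Bundle.main.path(
            forResource: contextResource, ofType: "rhn"
        ) else {
            throw CocoaError(.fileNoSuchFile)
        }
        manager = try RhinoManager(
            accessKey: accessKey,
            contextPath: contextPath,
            onInferenceCallback: { inference in
                onResult(route(isUnderstood: inference.isUnderstood,
                               intent: inference.intent,
                               slots: inference.slots))
            }
        )
    }

    /// Listens for a single command; call again for the next one.
    func listenOnce() throws { try manager.process() }

    deinit { manager.delete() }
}
#endif
```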

For further details, visit the Rhino Speech-to-Intent product page or refer to Rhino's iOS SDK quick start guide.

Adding Real-Time Transcription to iOS Apps

Best for: Live captions, voice input fields, iOS voice assistants, agentic AI apps that respond to spoken input in real time

Why developers choose it: Cheetah Streaming Speech-to-Text runs entirely on-device with no session limits, making it suitable for continuous transcription beyond what Apple's native APIs support across the installed base. Cheetah achieves a 10.1% word error rate and 16.1% punctuation error rate in English, beating Google Streaming Speech-to-Text (11.9% WER, 36.0% PER) while running entirely on-device.

- Add the Cheetah Streaming Speech-to-Text dependency via SPM:

Or via CocoaPods:

- Download the .pv language model file from the Cheetah GitHub repository and add it to the app as a bundled resource. Get its path from the bundle:

- Create an instance of Cheetah:

- Transcribe speech in real time:

Full Code for iOS Real-Time Transcription
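A minimal sketch of the steps above, assuming the Cheetah module exposes `Cheetah(accessKey:modelPath:)`, a `process` call that returns a partial transcript and an endpoint flag, `flush()`, `delete()`, and the static `frameLength` and `sampleRate`; confirm these in the quick start guide. `TranscriptAccumulator` and `LiveTranscriber` are hypothetical helpers.

```swift
// Pure bookkeeping: collects partial text and closes an utterance
// when the engine reports an endpoint.
struct TranscriptAccumulator {
    private(set) var finalized: [String] = []
    private(set) var current = ""

    mutating func add(partial: String) { current += partial }

    /// `remainder` is the text returned when the engine is flushed.
    mutating func endUtterance(remainder: String) {
        current += remainder
        if !current.isEmpty { finalized.append(current) }
        current = ""
    }
}

#if canImport(Cheetah) && canImport(ios_voice_processor)
import Cheetah
import ios_voice_processor

final class LiveTranscriber {
    private let cheetah: Cheetah
    private var accumulator = TranscriptAccumulator()

    init(accessKey: String, modelPath: String) throws {
        cheetah = try Cheetah(accessKey: accessKey, modelPath: modelPath)
    }

    /// `onUpdate` is called off the main thread; hop to the main
    /// queue before touching UI.
    func start(onUpdate: @escaping (String, [String]) -> Void) throws {
        let listener = VoiceProcessorFrameListener { [weak self] frame in
            guard let self = self,
                  let result = try? self.cheetah.process(frame) else { return }
            self.accumulator.add(partial: result.0)
            if result.1, let remainder = try? self.cheetah.flush() {
                self.accumulator.endUtterance(remainder: remainder)
            }
            onUpdate(self.accumulator.current, self.accumulator.finalized)
        }
        VoiceProcessor.instance.addFrameListener(listener)
        try VoiceProcessor.instance.start(
            frameLength: Cheetah.frameLength,
            sampleRate: Cheetah.sampleRate
        )
    }

    func stop() throws {
        try VoiceProcessor.instance.stop()
        cheetah.delete()
    }
}
#endif
```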

For further details, visit the Cheetah Streaming Speech-to-Text product page or refer to Cheetah's iOS SDK quick start guide.

Adding Batch Transcription to iOS Apps

Best for: Transcribing audio recordings stored on the device — voice memos, recorded calls, field inspection audio, meeting recordings

Why developers choose it: SFSpeechRecognizer's one-minute limit applies to file input as well as microphone input, making it unsuitable for transcribing voice memos, recorded calls, or meeting audio without manually chunking the file. Leopard Speech-to-Text processes files without any timing restrictions, entirely on-device. Leopard achieves a 9.7% Word Error Rate while requiring only 2.6 core hours to process 100 hours of audio, making it practical on iPhone hardware.

- Add the Leopard Speech-to-Text dependency via SPM:

Or via CocoaPods:

- Download the .pv language model file from the Leopard GitHub repository and add it to the app as a bundled resource. Get its path from the bundle:

- Create an instance of Leopard:

- Transcribe an audio file:

Full Code for iOS Batch Transcription
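A minimal sketch of the steps above, assuming the Leopard module exposes `Leopard(accessKey:modelPath:)`, `processFile(_:)` returning a transcript plus word entries with `word` and `startSec`, and `delete()`; confirm these in the quick start guide. `timestamp` and `transcribeRecording` are hypothetical helpers.

```swift
import Foundation

/// Formats a position in seconds as mm:ss for display next to a word.
func timestamp(_ seconds: Float) -> String {
    let total = Int(seconds)
    return String(format: "%02d:%02d", total / 60, total % 60)
}

#if canImport(Leopard)
import Leopard

/// Transcribes one audio file and returns a line per word with its start time.
func transcribeRecording(accessKey: String,
                         modelPath: String,
                         audioPath: String) throws -> [String] {
    let leopard = try Leopard(accessKey: accessKey, modelPath: modelPath)
    // Release native resources even if processing throws.
    defer { leopard.delete() }

    let result = try leopard.processFile(audioPath)
    print(result.transcript)
    return result.words.map { "[\(timestamp($0.startSec))] \($0.word)" }
}
#endif
```

Creating and deleting the engine per call keeps the example short; an app that transcribes many files would likely keep one instance alive, in line with the lifecycle advice later in this guide.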

For further details, visit the Leopard Speech-to-Text product page or refer to Leopard's iOS SDK quick start guide.

Building a Complete Voice Assistant for iOS

With all iOS speech recognition components in place, they can be combined into a complete voice pipeline:

Example interaction: "Hey assistant, send an email to Sarah" → "Hi Sarah, wanted to check in on the Q3 report. Let me know when you have a few minutes to chat."

- Porcupine Wake Word detects "Hey assistant" in the background

- Cobra VAD confirms speech is present before activating downstream engines

- Rhino Speech-to-Intent interprets "send an email to Sarah" and returns intent: send_email with recipient: Sarah

- Cheetah Streaming Speech-to-Text transcribes the dictated email body as the user speaks it

The pipeline runs entirely on-device for privacy-first, low-latency speech recognition. No audio leaves the app, no internet connection is required, and response latency stays consistent regardless of network conditions. All four engines share the same 16kHz, 16-bit PCM audio format, so one VoiceProcessor instance feeds the entire chain without re-sampling or opening multiple audio sessions.
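The hand-off between engines can be modeled as a small state machine, with each engine's callback producing an event. The sketch below is one possible arrangement; every type, case, and the `send_email` intent name are hypothetical and independent of any SDK.

```swift
enum AssistantState: Equatable {
    case waitingForWakeWord        // wake word engine active
    case awaitingSpeech            // VAD confirms the user is speaking
    case listeningForCommand       // speech-to-intent engine active
    case dictating(recipient: String) // streaming transcription active
}

enum AssistantEvent {
    case wakeWordDetected
    case speechDetected
    case intent(name: String, slots: [String: String])
    case notUnderstood
    case dictationEnded
}

/// Returns the next state; events that do not apply are ignored.
func transition(from state: AssistantState, on event: AssistantEvent) -> AssistantState {
    switch (state, event) {
    case (.waitingForWakeWord, .wakeWordDetected):
        return .awaitingSpeech
    case (.awaitingSpeech, .speechDetected):
        return .listeningForCommand
    case (.listeningForCommand, .intent(name: "send_email", slots: let slots)):
        return .dictating(recipient: slots["recipient"] ?? "")
    case (.listeningForCommand, .intent), (.listeningForCommand, .notUnderstood):
        return .waitingForWakeWord
    case (.dictating, .dictationEnded):
        return .waitingForWakeWord
    default:
        return state
    }
}

// Walk through the example interaction.
var state = AssistantState.waitingForWakeWord
state = transition(from: state, on: .wakeWordDetected)
state = transition(from: state, on: .speechDetected)
state = transition(from: state, on: .intent(name: "send_email", slots: ["recipient": "Sarah"]))
print(state)
```

Because only one engine needs to be active per state, the frame listener can route each audio frame to whichever engine the current state names.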

Common Issues and Solutions

AVAudioSession Interruptions

iOS can preempt the AVAudioSession during phone calls, Siri activation, or other audio events. Register for interruption notifications and handle them explicitly:
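A minimal sketch of an interruption observer using the standard notification. `InterruptionObserver`, `onPause`, and `onResume` are hypothetical names; the callbacks are where the app would stop and restart its engine.

```swift
// The guard lets this file compile on platforms without AVAudioSession.
#if os(iOS)
import AVFoundation

final class InterruptionObserver {
    private var token: NSObjectProtocol?

    init(onPause: @escaping () -> Void, onResume: @escaping () -> Void) {
        token = NotificationCenter.default.addObserver(
            forName: AVAudioSession.interruptionNotification,
            object: AVAudioSession.sharedInstance(),
            queue: .main
        ) { notification in
            guard let info = notification.userInfo,
                  let rawType = info[AVAudioSessionInterruptionTypeKey] as? UInt,
                  let type = AVAudioSession.InterruptionType(rawValue: rawType)
            else { return }

            switch type {
            case .began:
                // A call, Siri, or another app took over the session.
                onPause()
            case .ended:
                let rawOptions = info[AVAudioSessionInterruptionOptionKey] as? UInt ?? 0
                let options = AVAudioSession.InterruptionOptions(rawValue: rawOptions)
                // Resume only when the system indicates it is appropriate.
                if options.contains(.shouldResume) {
                    try? AVAudioSession.sharedInstance().setActive(true)
                    onResume()
                }
            @unknown default:
                break
            }
        }
    }

    deinit {
        if let token = token {
            NotificationCenter.default.removeObserver(token)
        }
    }
}
#endif
```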

Model File Not Found

Xcode may exclude .ppn, .rhn, and .pv files from the app bundle if they are not added to the Copy Bundle Resources build phase. Verify that all model files appear under Build Phases > Copy Bundle Resources in Xcode. Confirm the file name and extension passed to Bundle.main.path(forResource:ofType:) match exactly.
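A small lookup helper can turn a missing model into a descriptive error instead of a crash on a force-unwrapped path. `MissingModelError` and `bundledModelPath` are hypothetical names; the lookup itself uses the Bundle call mentioned above.

```swift
import Foundation

struct MissingModelError: Error, CustomStringConvertible {
    let fileName: String
    var description: String {
        "\(fileName) is not in the app bundle; check Build Phases > Copy Bundle Resources"
    }
}

/// Returns the path of a bundled model file or throws a descriptive error.
func bundledModelPath(named name: String,
                      ofType ext: String,
                      in bundle: Bundle = .main) throws -> String {
    guard let path = bundle.path(forResource: name, ofType: ext) else {
        throw MissingModelError(fileName: "\(name).\(ext)")
    }
    return path
}

// Usage: the name and extension must match the bundled file exactly.
// let keywordPath = try bundledModelPath(named: "hey-assistant", ofType: "ppn")
```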

Too Many False Activations or Missed Detections

Porcupine Wake Word and Rhino Speech-to-Intent both expose a sensitivity parameter between 0 and 1. A higher value reduces missed detections at the cost of more false activations. Tune sensitivity in your target deployment environment — a sensitivity calibrated for a quiet office will behave differently in a factory or outdoor setting.

Poor Transcription Accuracy for Domain-Specific Terms

Cheetah Streaming Speech-to-Text and Leopard Speech-to-Text support custom vocabulary for domain-specific terms via Picovoice Console. Add medical, legal, industrial, or branded vocabulary without any ML expertise. All Picovoice engines require 16kHz mono PCM audio. Use the iOS Voice Processor to capture audio at the correct sample rate.

Background Listening Stopped After Screen Lock

If wake word detection stops when the screen locks, the app is being suspended despite having the audio background mode declared. Ensure AVAudioSession is set to active and the category is set to .playAndRecord or .record before the app moves to the background. Test on a physical device — the iOS Simulator does not replicate background execution behavior accurately.

AccessKey Errors

Confirm the AccessKey is valid in Picovoice Console. Call .delete() on all engine instances when done to free native resources. Failing to release resources can cause initialization errors on subsequent calls.

Preparing an iOS Voice App for Production

Before shipping a voice-enabled iOS app, validate performance and reliability against real deployment conditions.

Engine Lifecycle

- Call .delete() on all engine instances when done. Picovoice engines allocate native resources that are not released by Swift's ARC automatically

- Stop engines when continuous listening is not required to reduce battery consumption

- Initialize engines once and reuse them across sessions rather than creating and destroying instances

Permissions

- Request microphone access at the point of use, with a clear rationale in NSMicrophoneUsageDescription

- Handle the denied permission state gracefully, and never assume a previously granted permission persists

- If using SFSpeechRecognizer or SpeechAnalyzer, also declare NSSpeechRecognitionUsageDescription and request speech recognition permission

Accuracy and Environment Testing

- Test in acoustic conditions representative of your deployment environment — microphone distance, background noise type, and room acoustics all affect accuracy

- Use Picovoice's open-source benchmarks for data-driven engine selection

- Tune sensitivity on Porcupine Wake Word and Rhino Speech-to-Intent to match your deployment environment

Background and Battery

- Test always-on listening on physical devices at representative battery levels — Simulator does not replicate power management behavior

- Monitor battery consumption during typical session lengths on older supported hardware

- Ensure the audio background mode is declared if wake word detection needs to run while the app is in the background

Audio Quality

- Test with the device's built-in microphone as well as external microphones and AirPods that your users may connect

- For input-only voice recognition apps, use .playAndRecord with .default mode. For apps with simultaneous audio playback, call setPrefersEchoCancelledInput(true) on iOS 18+ to reduce echo from the speaker

- Test audio session interruption handling, since phone calls and Siri activations occur unpredictably in production

Resources

Documentation

- Cobra VAD iOS Quick Start

- Cobra VAD iOS API Documentation

- Porcupine Wake Word iOS Quick Start

- Porcupine Wake Word iOS API Documentation

- Rhino Speech-to-Intent iOS Quick Start

- Rhino Speech-to-Intent iOS API Documentation

- Cheetah Streaming Speech-to-Text iOS Quick Start

- Cheetah Streaming Speech-to-Text iOS API Documentation

- Leopard Speech-to-Text iOS Quick Start

- Leopard Speech-to-Text iOS API Documentation

- iOS Voice Processor Quick Start

Demos

- Official iOS Wake Word Demo

- Official iOS Speech-to-Intent Demo

- Official iOS Streaming Speech-to-Text Demo

- Official iOS Speech-to-Text Demo

- Official iOS Voice Activity Detection Demo

Conclusion

Building voice features into an iOS app means navigating AVAudioSession interruption handling, background execution restrictions, Apple's permission model, and the gap between what Apple's native speech APIs support and what production voice apps require. The right foundation makes the difference between a voice experience that works reliably in the field and one that breaks.

Key takeaways:

- Wake word detection, voice activity detection, speech-to-intent, streaming speech-to-text, and batch speech-to-text each solve a distinct problem — using the wrong one adds unnecessary latency, battery drain, or complexity

- Apple's native APIs cover basic dictation but fall short for wake word detection, long-form transcription, intent recognition, and any production pipeline that needs to run in the background reliably

- On-device processing keeps audio local, eliminates network latency, and keeps the app working when the network does not

- Custom wake word, voice command, and speech-to-text models can be trained via Picovoice Console without any ML expertise

Request a Free Trial to start building. No credit card required.
