TLDR: Build a voice-powered inspection form for the web with hands-free voice form filling and voice data entry. Structured voice commands map directly to form fields without an LLM, and all processing runs locally in the browser via WebAssembly. Adapt this template for inspection reporting, safety audits, maintenance logs, and insurance documentation.

Hands-Free Voice Data Entry for Inspections and Field Reporting

Inspection and field reporting workflows often require capturing structured data while hands are busy, gloved, or focused on equipment. To reduce this friction, users can fill forms by voice, setting dropdowns, toggling checklist items, and dictating notes in real time.

This guide shows how to build a voice-powered inspection form for the web that combines:

- Voice activity detection to automatically activate voice input when speech is detected

- Spoken language understanding for deterministic voice data entry without routing commands through an LLM pipeline

- Keyword detection for form actions like starting notes, clearing the form, and submitting the form

- Streaming speech-to-text for free-form voice notes

All speech processing runs locally in the browser using WebAssembly, so microphone audio does not need to be streamed to a cloud speech API. This eliminates network latency for real-time voice input and keeps voice data on the device, which supports GDPR/CCPA compliance in regulated environments.

What You'll Build

As a working example, this tutorial builds a roof inspection reporting form that can be completed entirely by voice. The resulting interface supports:

- Hands-free voice data entry activated automatically by voice activity detection

- Multi-checkbox toggling from a single voice command

- Keyword-triggered actions for starting notes, clearing the form, and submitting the form

- Real-time dictation for free-form voice notes

- Full inspection completion without any keyboard interaction

This same architecture applies to field inspections, equipment maintenance logs, insurance claims, safety audits, healthcare intake workflows, and any form with structured fields and free-form notes.

What You'll Need

- Node.js (download page)

- Picovoice AccessKey from the Picovoice Console

- A microphone-equipped device for testing (laptop or desktop)

How to Fill Web Forms by Voice without an LLM

For inspection reporting, the goal is simple: user speech should map to the correct form field, and notes should stay open-ended. Many voice-enabled forms take a speech-to-text first approach, then use natural language understanding (NLU) or a large language model (LLM) to map the transcript into dropdown values, checkboxes, and select fields. This pipeline adds extra steps (transcription + parsing), which increase latency and create avoidable edge cases like misheard values, invalid dropdown options, or routing a value to the wrong field.

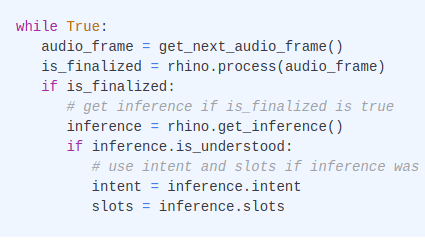

For voice form filling with known fields and fixed option values, an LLM is unnecessary overhead. A cleaner pattern is speech-to-intent, which extracts intent and slot values directly from audio without any intermediate text.

Since valid commands and field values are defined in advance, results are deterministic — the same command always produces the same structured output. This tutorial uses speech-to-intent for all structured fields (dropdowns, checkboxes) and reserves streaming speech-to-text for free-form notes, where open-ended input is expected. To go further, a local LLM like picoLLM can process the captured notes for summarization, report generation, and more advanced features.

Voice Inspection Form System Architecture

The application uses four specialized voice engines working together through a shared audio stream:

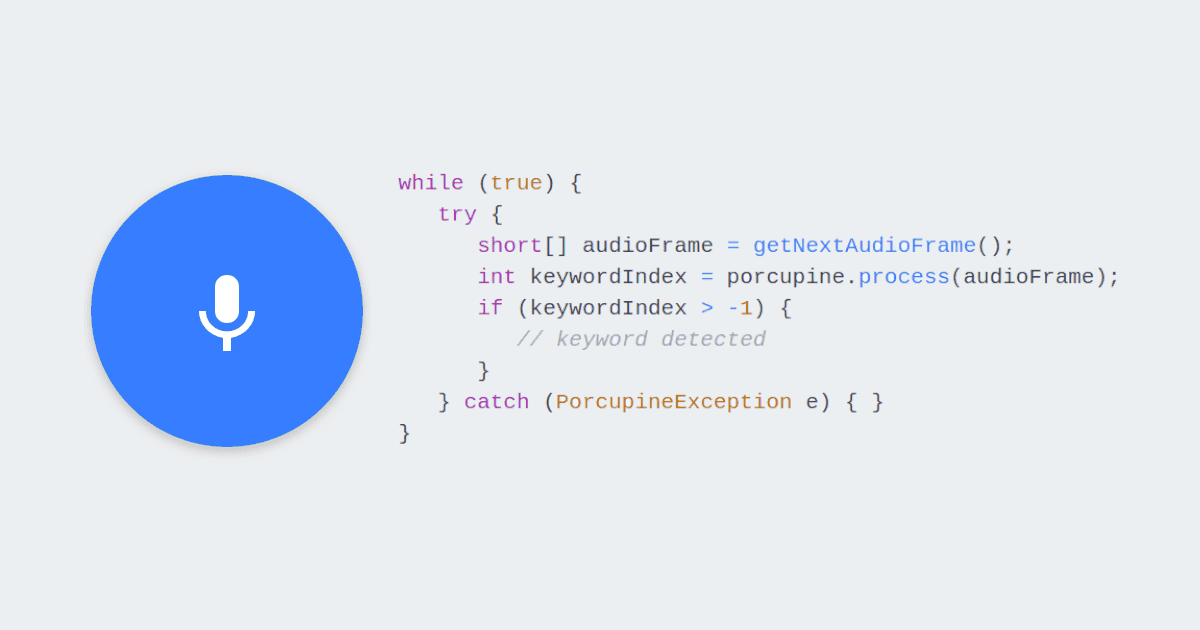

Cobra Voice Activity Detection: Monitors the microphone continuously and activates Rhino Speech-to-Intent when voice probability exceeds a threshold.

Rhino Speech-to-Intent: Activates when Cobra detects speech, outputting intent and slot values directly from user audio, achieving 97%+ accuracy in noisy, real-world environments.

Porcupine Wake Word: Listens continuously for action keywords (e.g., "Start Notes", "Clear Form", and "Submit Form") and triggers the corresponding form action when one is detected.

Cheetah Streaming Speech-to-Text: Transcribes open-ended notes in real time, appending words to the notes field as the inspector speaks.

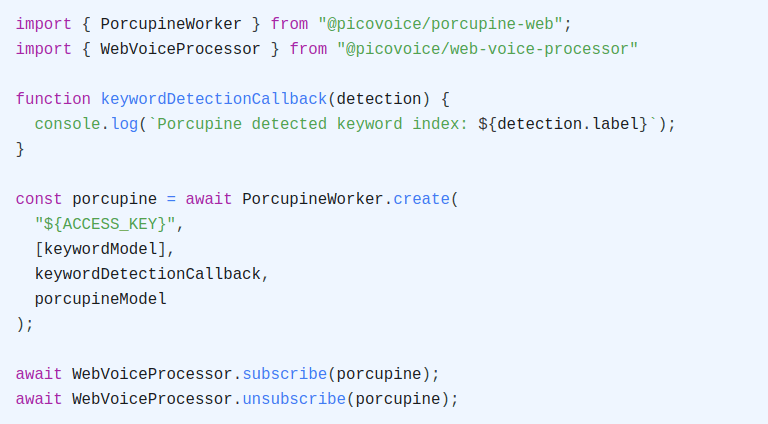

All four voice engines share a single microphone stream through Web Voice Processor.

Set Up the Web Voice Form Project

Initialize a new project and install the required packages:

Install the speech SDKs and a local development server:

- @picovoice/cobra-web: Cobra Voice Activity Detection SDK

- @picovoice/porcupine-web: Porcupine Wake Word detection SDK

- @picovoice/rhino-web: Rhino Speech-to-Intent SDK

- @picovoice/cheetah-web: Cheetah Streaming Speech-to-Text SDK

- @picovoice/web-voice-processor: Voice Processor SDK for microphone audio

- http-server: Local server for testing

Train Custom Wake Words for Voice Form

Porcupine Wake Word detects three action keywords that control the form: "Start Notes", "Clear Form", and "Submit Form". Each keyword is trained as a separate .ppn model.

- Sign up for a Picovoice Console account and navigate to the Porcupine page.

- Enter your keyword, such as "Start Notes", and test it using the microphone button.

- Click "Train", select "Web (WASM)" as the target platform, and download the .ppn model file to the project root.

- Repeat the previous two steps for the remaining keywords:

- "Clear Form"

- "Submit Form"

For tips on designing effective keywords, review the choosing a wake word guide.

Define Voice Commands for the Inspection Form

Rhino Speech-to-Intent needs a context that maps spoken phrases to intents and slot values. Unlike an LLM prompt, this context is deterministic: every phrase you define will always produce the same structured output.

- In the Rhino section of Picovoice Console, create a new context for your voice form.

- Click the "Import YAML" button in the top-right corner of the Console. Paste the YAML provided below to add the inspection form voice commands.

- Train the context for the "Web (WASM)" platform and download the .rhn model file.

- Download the Rhino default model (rhino_params.pv) and place both files in the project root.

Train Custom Voice Commands to Fill Web Form using YAML Context:

This speech-to-intent context defines five intents:

- Four dropdown intents (setInspectionType, setPriority, setRoofType, setCondition) map voice commands directly to form dropdown values.

- One checkbox intent (toggleDamage) toggles damage checkboxes. It supports multiple damage types in a single utterance using separate slot names and slot types (damageType, damageType2, damageType3), so saying "I see water damage and cracks" checks both boxes at once.

The bracket syntax handles natural phrasing variations — "set priority to high", "mark it as high", "high priority", and "priority is high" all resolve to the same intent with the same slot value. You define the vocabulary once, and the voice model handles the matching deterministically. To support additional phrasing, add more expressions to the YAML.

Refer to the Rhino Syntax Cheat Sheet for details on expression syntax, optional words, and slot types.

Download the Streaming Speech-to-Text Model

Cheetah Streaming Speech-to-Text requires a default language model file. Download cheetah_params.pv from the Cheetah repository and place it in the project root.

Create the Inspection Form HTML

Create an index.html file in the project root. The application loads all SDKs from node_modules:

The complete HTML and CSS for the form UI is included in the full code at the end of this tutorial.

Add Voice Activity Detection for Automatic Voice Activation

Cobra Voice Activity Detection runs continuously and detects when someone is speaking. When the voice probability crosses a threshold, the system activates Rhino Speech-to-Intent to listen for a command:

The callback monitors voice probability and activates Rhino when it detects speech:

The VAD_THRESHOLD and VAD_FRAMES_REQUIRED values prevent false activations from background noise. With a threshold of 0.5, a frame counts as detected speech once its voice probability crosses 0.5, which keeps activation responsive. The keywordCooldownUntil check prevents Cobra from immediately reactivating Rhino after a Porcupine keyword is detected.
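The activation logic can be sketched as a small gate that is independent of the SDK. This is a minimal sketch under stated assumptions, not the tutorial's original code: createVadGate, startCooldown, and onVoiceProbability are hypothetical names, and VAD_FRAMES_REQUIRED is set to 1 here as an assumed value. Only the 0.5 threshold and the keywordCooldownUntil check come from the text.

```javascript
// Hypothetical helper: decides when a stream of voice probabilities
// should activate the speech-to-intent engine.
const VAD_THRESHOLD = 0.5;      // value mentioned in the text
const VAD_FRAMES_REQUIRED = 1;  // assumed value, tune for your environment

function createVadGate(options = {}) {
  const threshold = options.threshold ?? VAD_THRESHOLD;
  const framesRequired = options.framesRequired ?? VAD_FRAMES_REQUIRED;
  let consecutiveFrames = 0;
  let keywordCooldownUntil = 0; // timestamp in milliseconds

  return {
    // Called by the keyword handler to suppress activation for a while.
    startCooldown(nowMs, durationMs) {
      keywordCooldownUntil = nowMs + durationMs;
    },
    // Returns true once enough consecutive frames crossed the threshold.
    onVoiceProbability(probability, nowMs) {
      if (nowMs < keywordCooldownUntil) {
        consecutiveFrames = 0;
        return false;
      }
      consecutiveFrames = probability > threshold ? consecutiveFrames + 1 : 0;
      if (consecutiveFrames >= framesRequired) {
        consecutiveFrames = 0; // reset so the next utterance starts fresh
        return true;
      }
      return false;
    },
  };
}
```

In a page, the Cobra callback would pass each voice probability and the current time (for example Date.now()) to onVoiceProbability and start Rhino when it returns true.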

Add Keyword Detection for Form Actions

Porcupine Wake Word listens for three action keywords continuously alongside Cobra Voice Activity Detection. When a keyword is detected, it triggers the corresponding form action.

A short cooldown prevents Cobra from immediately reactivating Rhino after a keyword is detected:

When a keyword is detected while Rhino is active, the callback unsubscribes Rhino first to avoid conflicting inferences.
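The ordering described above (stop Rhino first, start the cooldown, then run the form action) can be made explicit by planning the steps as data before touching any engine. The sketch below is illustrative only: planKeywordActions and the action names it returns are hypothetical, and the handling of active dictation follows the behavior this tutorial describes for "Clear Form" and "Submit Form".

```javascript
// Hypothetical planner: given the detected keyword and the current state,
// return the ordered list of steps the keyword callback should perform.
const KNOWN_KEYWORDS = ["Start Notes", "Clear Form", "Submit Form"];

function planKeywordActions(keyword, state) {
  if (!KNOWN_KEYWORDS.includes(keyword)) return [];

  const actions = [];
  // Stop Rhino first so it does not produce a conflicting inference.
  if (state.rhinoActive) actions.push("stopRhino");
  // Keep Cobra from reactivating Rhino on the tail of the keyword audio.
  actions.push("startCooldown");

  if (keyword === "Start Notes") {
    if (!state.dictating) actions.push("startDictation");
  } else {
    // "Clear Form" and "Submit Form" end dictation before acting.
    if (state.dictating) actions.push("stopDictation", "cleanKeywordFromNotes");
    actions.push(keyword === "Clear Form" ? "clearForm" : "submitForm");
  }
  return actions;
}
```

Returning a list rather than calling the engines directly keeps the ordering easy to test; the real callback would execute each step in turn.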

Strip Keywords from Dictated Notes

Since Porcupine Wake Word and Cheetah Streaming Speech-to-Text run simultaneously during dictation, Cheetah may transcribe the keyword phrase (e.g., "submit form") before Porcupine detects it. The cleanKeywordFromNotes function strips these keyword phrases from the end of the notes field:
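One possible shape for this function is shown below. The function name comes from the text, but the implementation is an assumption: it treats the notes as a plain string, matches the three keyword phrases case-insensitively, and tolerates trailing punctuation that the transcriber might add.

```javascript
// Keyword phrases that may leak into the transcript (lowercase).
const ACTION_KEYWORDS = ["start notes", "clear form", "submit form"];

// Removes one trailing keyword phrase, if present, from the notes text.
function cleanKeywordFromNotes(notes, keywords = ACTION_KEYWORDS) {
  let cleaned = notes.replace(/\s+$/, ""); // drop trailing whitespace
  for (const keyword of keywords) {
    // Allow flexible spacing between words and optional end punctuation.
    const phrase = keyword.split(" ").join("\\s+");
    const pattern = new RegExp("\\s*\\b" + phrase + "[.,!?]*$", "i");
    if (pattern.test(cleaned)) {
      cleaned = cleaned.replace(pattern, "");
      break; // only the phrase at the very end is stripped
    }
  }
  return cleaned;
}
```

Because the pattern is anchored to the end of the string, a keyword phrase spoken earlier in the notes is left untouched.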

Fill Form Fields with Voice Commands

When Cobra Voice Activity Detection detects speech, Rhino Speech-to-Intent activates and listens for a voice command. The endpointDurationSec is set to 0.5 seconds for fast responses after the user finishes speaking.

Start Rhino when Cobra detects voice activity, and stop after the inference is finalized:

The handleIntent function routes each intent to the correct form field. The toggleDamage intent iterates over all damage-related slot values and toggles each one:
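A minimal sketch of this routing is shown below. To stay self-contained it updates a plain state object rather than DOM elements. The intent names and the priority, damageType, damageType2, and damageType3 slots come from this tutorial; the other slot names (inspectionType, roofType, condition) and the shape of the form object are assumptions made for illustration.

```javascript
// Dropdown intents and the slot that carries their value.
const DROPDOWN_SLOTS = new Map([
  ["setInspectionType", "inspectionType"], // assumed slot name
  ["setPriority", "priority"],             // slot name shown in the text
  ["setRoofType", "roofType"],             // assumed slot name
  ["setCondition", "condition"],           // assumed slot name
]);
const DAMAGE_SLOTS = ["damageType", "damageType2", "damageType3"];

// Returns a new form state with the inference applied.
function handleIntent(inference, form) {
  // Ignore utterances the engine reports as not understood.
  if (inference.isUnderstood === false) return form;

  const slots = inference.slots || {};
  const next = { ...form, damage: { ...form.damage } };

  if (DROPDOWN_SLOTS.has(inference.intent)) {
    const field = DROPDOWN_SLOTS.get(inference.intent);
    if (slots[field] !== undefined) next[field] = slots[field];
  } else if (inference.intent === "toggleDamage") {
    // Toggle every damage type mentioned in the utterance.
    for (const slotName of DAMAGE_SLOTS) {
      const damage = slots[slotName];
      if (damage !== undefined) next.damage[damage] = !next.damage[damage];
    }
  }
  return next;
}
```

In the page itself, the same branches would set the value of a select element or flip the checked property of a checkbox instead of returning a new object.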

For example, saying "I see water damage and cracks" activates Rhino Speech-to-Intent via Cobra Voice Activity Detection, which toggles both checkboxes at once and returns:

{ intent: "toggleDamage", slots: { damageType: "water damage", damageType2: "cracks" } }

Saying "set priority to urgent" updates the priority dropdown and returns:

{ intent: "setPriority", slots: { priority: "urgent" } }

Add Real-Time Speech-to-Text for Voice Notes

Cheetah Streaming Speech-to-Text handles free-form voice notes. It requires a default language model file:

The callback appends transcribed text to the notes field in real time:

Dictation is controlled with explicit start and stop functions. When stopping, cheetah.flush() is called to capture any remaining buffered audio:
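The start, stop, and flush sequence can be sketched with the engine passed in as a dependency. Everything here is hypothetical scaffolding: createDictation and the engine object with start, stop, and flush methods are illustrative stand-ins rather than the Cheetah SDK interface, and the calls are synchronous for simplicity, whereas a real engine may deliver flushed text asynchronously through its transcript callback.

```javascript
// Hypothetical dictation controller. `engine` is any object exposing
// start(), stop(), and flush(); `notesField` is any object with a string
// `value` property (for example a textarea element).
function createDictation(engine, notesField) {
  let active = false;

  return {
    isActive() {
      return active;
    },
    // Transcript callback: append each piece of text as it arrives.
    onTranscript(text) {
      if (text) notesField.value += text;
    },
    start() {
      if (active) return;
      active = true;
      engine.start(); // begin feeding microphone audio to the engine
    },
    stop() {
      if (!active) return;
      active = false;
      engine.stop();  // stop feeding audio
      engine.flush(); // emit whatever is still buffered
    },
  };
}
```

Guarding on the active flag makes start and stop safe to call repeatedly, which matters when keywords can arrive at any time.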

The inspector starts notes by saying "Start Notes". Saying "Clear Form" or "Submit Form" while notes are active automatically stops dictation first and cleans any keyword text from the notes, since Porcupine keywords are always listening.

Complete Code Example: Voice Inspection Form

Here is the complete index.html with all HTML, CSS, and JavaScript:

Configure Access Key and Model Files

Open index.html and replace the following placeholders in the <script> block:

- ${YOUR_ACCESS_KEY_HERE}: Your AccessKey from the Picovoice Console main dashboard.

- ${START_NOTES_PPN}: Filename of your trained "Start Notes" .ppn model (e.g., start-notes).

- ${CLEAR_FORM_PPN}: Filename of your trained "Clear Form" .ppn model (e.g., clear-form).

- ${SUBMIT_FORM_PPN}: Filename of your trained "Submit Form" .ppn model (e.g., submit-form).

- ${CONTEXT_FILE_NAME}: Filename of your trained Rhino .rhn context (e.g., inspection).

Run the Voice-Powered Inspection Form

Your project directory should now contain:

Start the local server with the required cross-origin headers:

Open http://localhost:5000 in your browser. The voice engines initialize automatically on page load.

Voice-Powered Inspection & Reporting: Alternative Use-Cases

The voice AI pipeline of voice activity detection, speech-to-intent, keyword detection, and streaming speech-to-text supports voice form filling and voice data entry for inspection reporting and any workflow with structured fields and free-form notes. To adapt for a different use case, update the Rhino Speech-to-Intent context with your intents and slot values, change the form fields to match, and update the handleIntent function:

- Insurance claims: Adjusters speak damage categories, severity levels, and coverage types on site

- Construction punch lists: Workers call out defect types, locations, and priority while walking a job site

- Equipment maintenance: Technicians log equipment IDs, fault codes, and service actions while testing machinery

- Safety audits: Inspectors complete compliance checklists by voice while keeping hands free for measurements and tools

- Healthcare intake: Clinicians select symptom categories and severity via voice while evaluating patients